Spaces:

Runtime error

A newer version of the Gradio SDK is available:

5.6.0

Demo

We provide an easy-to-use API for the demo and application purpose in ocr.py script.

The API can be called through command line (CL) or by calling it from another python script.

Example 1: Text Detection

Instruction: Perform detection inference on an image with the TextSnake recognition model, export the result in a json file (default) and save the visualization file.

- CL interface:

python mmocr/utils/ocr.py demo/demo_text_det.jpg --output demo/det_out.jpg --det TextSnake --recog None --export demo/

- Python interface:

from mmocr.utils.ocr import MMOCR

# Load models into memory

ocr = MMOCR(det='TextSnake', recog=None)

# Inference

results = ocr.readtext('demo/demo_text_det.jpg', output='demo/det_out.jpg', export='demo/')

Example 2: Text Recognition

Instruction: Perform batched recognition inference on a folder with hundreds of image with the CRNN_TPS recognition model and save the visualization results in another folder. Batch size is set to 10 to prevent out of memory CUDA runtime errors.

- CL interface:

python mmocr/utils/ocr.py %INPUT_FOLDER_PATH% --det None --recog CRNN_TPS --batch-mode --single-batch-size 10 --output %OUPUT_FOLDER_PATH%

- Python interface:

from mmocr.utils.ocr import MMOCR

# Load models into memory

ocr = MMOCR(det=None, recog='CRNN_TPS')

# Inference

results = ocr.readtext(%INPUT_FOLDER_PATH%, output = %OUTPUT_FOLDER_PATH%, batch_mode=True, single_batch_size = 10)

Example 3: Text Detection + Recognition

Instruction: Perform ocr (det + recog) inference on the demo/demo_text_det.jpg image with the PANet_IC15 (default) detection model and SAR (default) recognition model, print the result in the terminal and show the visualization.

- CL interface:

python mmocr/utils/ocr.py demo/demo_text_ocr.jpg --print-result --imshow

:::{note}

When calling the script from the command line, the script assumes configs are saved in the configs/ folder. User can customize the directory by specifying the value of config_dir.

:::

- Python interface:

from mmocr.utils.ocr import MMOCR

# Load models into memory

ocr = MMOCR()

# Inference

results = ocr.readtext('demo/demo_text_ocr.jpg', print_result=True, imshow=True)

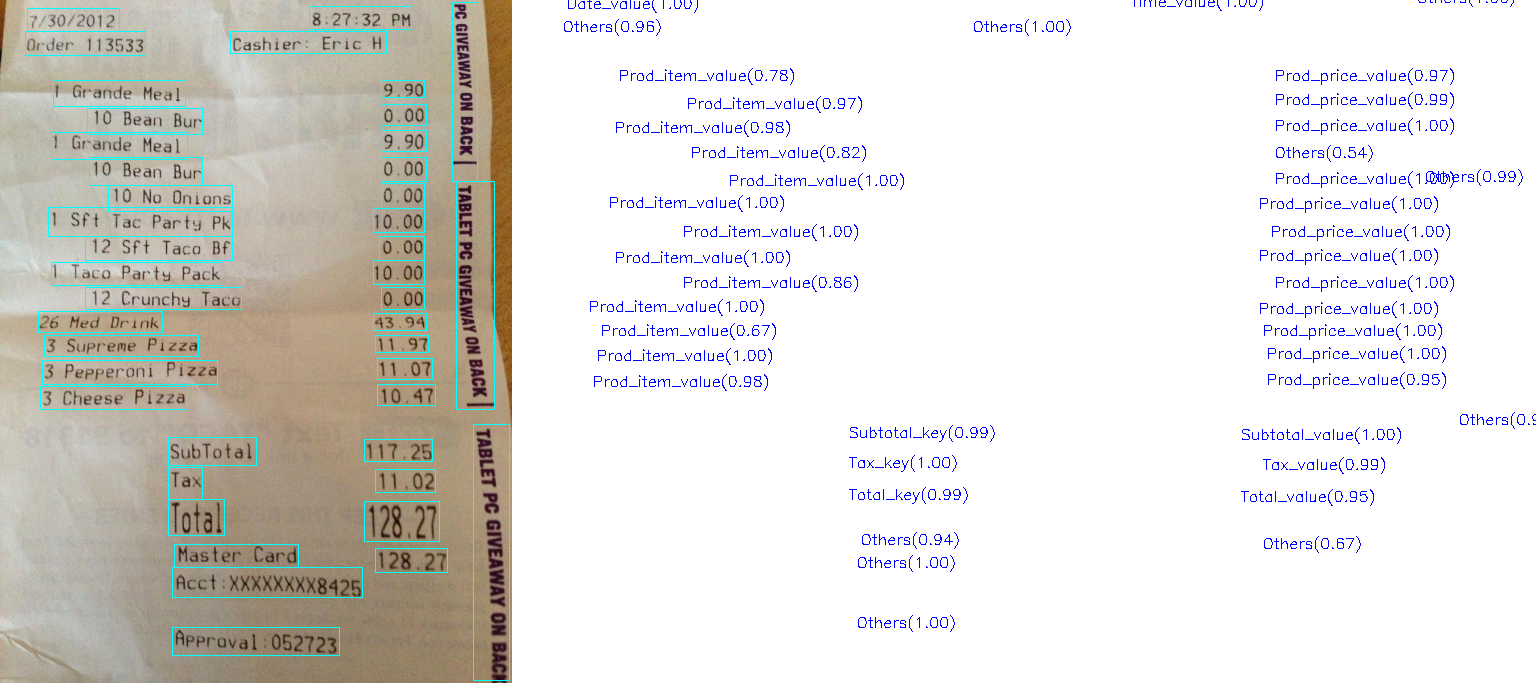

Example 4: Text Detection + Recognition + Key Information Extraction

Instruction: Perform end-to-end ocr (det + recog) inference first with PS_CTW detection model and SAR recognition model, then run KIE inference with SDMGR model on the ocr result and show the visualization.

- CL interface:

python mmocr/utils/ocr.py demo/demo_kie.jpeg --det PS_CTW --recog SAR --kie SDMGR --print-result --imshow

:::{note}

Note: When calling the script from the command line, the script assumes configs are saved in the configs/ folder. User can customize the directory by specifying the value of config_dir.

:::

- Python interface:

from mmocr.utils.ocr import MMOCR

# Load models into memory

ocr = MMOCR(det='PS_CTW', recog='SAR', kie='SDMGR')

# Inference

results = ocr.readtext('demo/demo_kie.jpeg', print_result=True, imshow=True)

API Arguments

The API has an extensive list of arguments that you can use. The following tables are for the python interface.

MMOCR():

| Arguments | Type | Default | Description |

|---|---|---|---|

det |

see models | PANet_IC15 | Text detection algorithm |

recog |

see models | SAR | Text recognition algorithm |

kie [1] |

see models | None | Key information extraction algorithm |

config_dir |

str | configs/ | Path to the config directory where all the config files are located |

det_config |

str | None | Path to the custom config file of the selected det model |

det_ckpt |

str | None | Path to the custom checkpoint file of the selected det model |

recog_config |

str | None | Path to the custom config file of the selected recog model |

recog_ckpt |

str | None | Path to the custom checkpoint file of the selected recog model |

kie_config |

str | None | Path to the custom config file of the selected kie model |

kie_ckpt |

str | None | Path to the custom checkpoint file of the selected kie model |

device |

str | None | Device used for inference, accepting all allowed strings by torch.device. E.g., 'cuda:0' or 'cpu'. |

[1]: kie is only effective when both text detection and recognition models are specified.

:::{note}

User can use default pretrained models by specifying det and/or recog, which is equivalent to specifying their corresponding *_config and *_ckpt. However, manually specifying *_config and *_ckpt will always override values set by det and/or recog. Similar rules also apply to kie, kie_config and kie_ckpt.

:::

readtext()

| Arguments | Type | Default | Description |

|---|---|---|---|

img |

str/list/tuple/np.array | required | img, folder path, np array or list/tuple (with img paths or np arrays) |

output |

str | None | Output result visualization - img path or folder path |

batch_mode |

bool | False | Whether use batch mode for inference [1] |

det_batch_size |

int | 0 | Batch size for text detection (0 for max size) |

recog_batch_size |

int | 0 | Batch size for text recognition (0 for max size) |

single_batch_size |

int | 0 | Batch size for only detection or recognition |

export |

str | None | Folder where the results of each image are exported |

export_format |

str | json | Format of the exported result file(s) |

details |

bool | False | Whether include the text boxes coordinates and confidence values |

imshow |

bool | False | Whether to show the result visualization on screen |

print_result |

bool | False | Whether to show the result for each image |

merge |

bool | False | Whether to merge neighboring boxes [2] |

merge_xdist |

float | 20 | The maximum x-axis distance to merge boxes |

[1]: Make sure that the model is compatible with batch mode.

[2]: Only effective when the script is running in det + recog mode.

All arguments are the same for the cli, all you need to do is add 2 hyphens at the beginning of the argument and replace underscores by hyphens.

(Example: det_batch_size becomes --det-batch-size)

For bool type arguments, putting the argument in the command stores it as true.

(Example: python mmocr/utils/ocr.py demo/demo_text_det.jpg --batch_mode --print_result

means that batch_mode and print_result are set to True)

Models

Text detection:

| Name | Reference | batch_mode inference support |

|---|---|---|

| DB_r18 | link | :x: |

| DB_r50 | link | :x: |

| DRRG | link | :x: |

| FCE_IC15 | link | :x: |

| FCE_CTW_DCNv2 | link | :x: |

| MaskRCNN_CTW | link | :x: |

| MaskRCNN_IC15 | link | :x: |

| MaskRCNN_IC17 | link | :x: |

| PANet_CTW | link | :heavy_check_mark: |

| PANet_IC15 | link | :heavy_check_mark: |

| PS_CTW | link | :x: |

| PS_IC15 | link | :x: |

| TextSnake | link | :heavy_check_mark: |

Text recognition:

| Name | Reference | batch_mode inference support |

|---|---|---|

| ABINet | link | :heavy_check_mark: |

| CRNN | link | :x: |

| SAR | link | :heavy_check_mark: |

| SAR_CN * | link | :heavy_check_mark: |

| NRTR_1/16-1/8 | link | :heavy_check_mark: |

| NRTR_1/8-1/4 | link | :heavy_check_mark: |

| RobustScanner | link | :heavy_check_mark: |

| SATRN | link | :heavy_check_mark: |

| SATRN_sm | link | :heavy_check_mark: |

| SEG | link | :x: |

| CRNN_TPS | link | :heavy_check_mark: |

:::{warning}

SAR_CN is the only model that supports Chinese character recognition and it requires a Chinese dictionary. Please download the dictionary from here for a successful run.

:::

Key information extraction:

| Name | Reference | batch_mode support |

|---|---|---|

| SDMGR | link | :heavy_check_mark: |

Additional info

- To perform det + recog inference (end2end ocr), both the

detandrecogarguments must be defined. - To perform only detection set the

recogargument toNone. - To perform only recognition set the

detargument toNone. detailsargument only works with end2end ocr.det_batch_sizeandrecog_batch_sizearguments define the number of images you want to forward to the model at the same time. For maximum speed, set this to the highest number you can. The max batch size is limited by the model complexity and the GPU VRAM size.

If you have any suggestions for new features, feel free to open a thread or even PR :)