VGGSfM: Visual Geometry Grounded Deep Structure From Motion

Meta AI Research, GenAI; University of Oxford, VGG

Jianyuan Wang, Nikita Karaev, Christian Rupprecht, David Novotny

[Paper] [Project Page] [Version 2.0]

Updates:

[Jun 25, 2024] Upgrade to VGGSfM 2.0! More memory efficient, more robust, more powerful, and easier to start!

[Apr 23, 2024] Released the code and model weights for VGGSfM v1.1.

Installation

We provide a simple installation script that, by default, sets up a conda environment with Python 3.10, PyTorch 2.1, and CUDA 12.1.

source install.sh

This script installs the official releases of pytorch3d, accelerate, lightglue, pycolmap, and visdom. It also optionally installs poselib from the Python wheel in the wheels folder. We compiled this wheel ourselves, so it does not come from the official poselib team.

Demo

1. Download Model

To get started, first download the checkpoint. We provide the checkpoint for the v2.0 model on Hugging Face and Google Drive.

2. Run the Demo

Now it is time to enjoy your 3D reconstruction! You can start with our provided examples, such as:

python demo.py SCENE_DIR=examples/cake resume_ckpt=/PATH/YOUR/CKPT

python demo.py SCENE_DIR=examples/british_museum query_frame_num=2 resume_ckpt=/PATH/YOUR/CKPT

python demo.py SCENE_DIR=examples/apple query_frame_num=5 max_query_pts=1600 resume_ckpt=/PATH/YOUR/CKPT

All default settings for the flags are specified in cfgs/demo.yaml. For example, the examples/apple command above changes query_frame_num from its default of 3 to 5 and max_query_pts from its default of 4096 to 1600, so that the reconstruction fits on a 32 GB GPU.
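The key=value overrides shown above can also be assembled programmatically, for instance when scripting several runs. The following is a minimal sketch, not part of the repository: `build_demo_command` is a hypothetical helper, and it only uses the flags that appear in the examples above.

```python
import shlex


def build_demo_command(scene_dir, ckpt_path, **overrides):
    """Return the argv list for one demo.py run (hypothetical helper)."""
    args = ["python", "demo.py", f"SCENE_DIR={scene_dir}"]
    # Keep overrides in the order given so the command reads like the examples.
    args += [f"{key}={value}" for key, value in overrides.items()]
    args.append(f"resume_ckpt={ckpt_path}")
    return args


cmd = build_demo_command(
    "examples/apple", "/PATH/YOUR/CKPT", query_frame_num=5, max_query_pts=1600
)
# Print the command as a single shell-ready line.
print(shlex.join(cmd))
```

The returned list can be passed directly to `subprocess.run(cmd, check=True)` from the repository root. Any flag not listed falls back to its value in cfgs/demo.yaml.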

The reconstruction result (camera parameters and 3D points) will be automatically saved in the COLMAP format at output/seq_name. You can use the COLMAP GUI to view them.

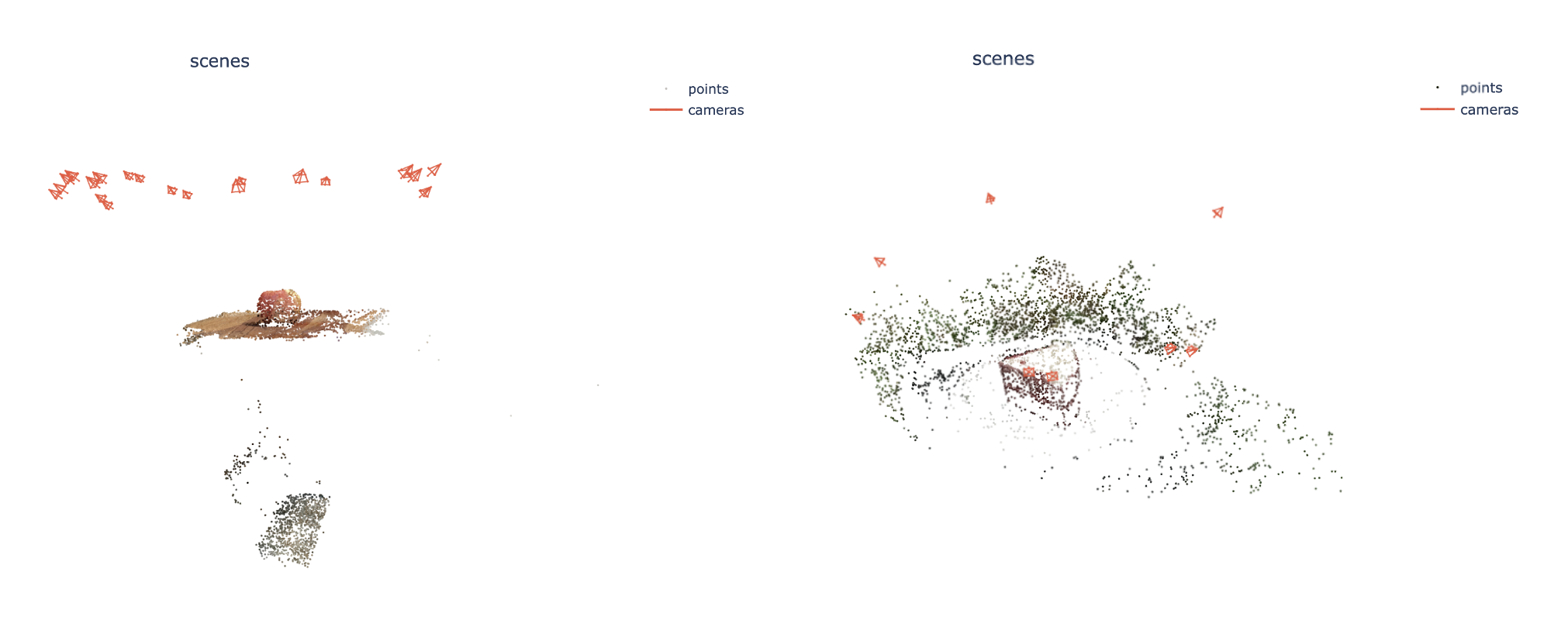

If you want an easier way to visualize the result, we also support visdom. Start the server by entering visdom on the command line. Once the server is running, open http://localhost:8097 in your web browser. With visualize=True, every reconstruction is visualized and saved to the visdom server:

python demo.py visualize=True ...(other flags)

You should then see the reconstruction in the visdom interface in your browser.

3. Try your own data

You only need to specify the path to your data, such as:

python demo.py SCENE_DIR=examples/YOUR_FOLDER ...(other flags)

Please ensure that the images are stored in YOUR_FOLDER/images. This folder should contain only the images. Check the examples folder for the desired data structure.
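Before launching a run on your own data, it can help to check the folder layout described above. The sketch below is an illustration only: `check_scene_dir` and `IMAGE_SUFFIXES` are hypothetical names, and the set of accepted extensions is an assumption, so adjust it to match your images.

```python
from pathlib import Path

# Assumed set of image extensions; not taken from the repository.
IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png"}


def check_scene_dir(scene_dir):
    """Return a list of layout problems in scene_dir (empty list means OK)."""
    images_dir = Path(scene_dir) / "images"
    if not images_dir.is_dir():
        return [f"missing folder: {images_dir}"]
    problems = []
    entries = sorted(images_dir.iterdir())
    for entry in entries:
        # The images folder should contain only image files.
        if entry.is_dir() or entry.suffix.lower() not in IMAGE_SUFFIXES:
            problems.append(f"not an image: {entry.name}")
    if not any(e.is_file() and e.suffix.lower() in IMAGE_SUFFIXES for e in entries):
        problems.append("no images found")
    return problems
```

For example, `check_scene_dir("examples/YOUR_FOLDER")` returns an empty list when YOUR_FOLDER/images exists and holds only files with the assumed extensions.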

Have fun, and feel free to open an issue if you run into any problems. SfM is always about corner cases and hard cases, and I am happy to help. If you prefer not to share your images publicly, please send them to me by email.

FAQ

- What should I do if I encounter an out-of-memory error?

To resolve an out-of-memory error, start by reducing max_query_pts from the default of 4096 to a lower value. If necessary, also decrease query_frame_num. Be aware that these adjustments may produce a sparser point cloud and could reduce the accuracy of the reconstruction.
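The order of adjustments described above (lower max_query_pts first, then query_frame_num) can be written down as a simple fallback schedule. This is a sketch under stated assumptions: `oom_fallback_settings` is a hypothetical helper, and the halving step and the lower bounds of 512 points and 1 frame are illustrative choices, not values recommended by the authors.

```python
def oom_fallback_settings(max_query_pts=4096, query_frame_num=3,
                          min_query_pts=512, min_query_frames=1):
    """Yield progressively lighter (max_query_pts, query_frame_num) pairs."""
    pts, frames = max_query_pts, query_frame_num
    # First halve the number of query points (halving is an assumption).
    while pts // 2 >= min_query_pts:
        pts //= 2
        yield pts, frames
    # Only then reduce the number of query frames, one at a time.
    while frames - 1 >= min_query_frames:
        frames -= 1
        yield pts, frames


for pts, frames in oom_fallback_settings():
    # Each line is a pair of overrides to try on the next demo.py run.
    print(f"max_query_pts={pts} query_frame_num={frames}")
```

Try each pair in turn and stop at the first one that fits in memory, since every step further down the schedule may make the point cloud sparser.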

Testing

We are still preparing the testing script for VGGSfM v2. However, you can use our code for VGGSfM v1.1 to reproduce our benchmark results in the paper. Please refer to the branch v1.1.

Acknowledgement

We are highly inspired by colmap, pycolmap, posediffusion, cotracker, and kornia.

License

See the LICENSE file for details about the license under which this code is made available.

Citing VGGSfM

If you find our repository useful, please consider giving it a star ⭐ and citing our paper in your work:

@article{wang2023vggsfm,
  title={VGGSfM: Visual Geometry Grounded Deep Structure From Motion},
  author={Wang, Jianyuan and Karaev, Nikita and Rupprecht, Christian and Novotny, David},
  journal={arXiv preprint arXiv:2312.04563},
  year={2023}
}