modelId (string, length 5 to 122) | author (string, length 2 to 42) | last_modified (unknown) | downloads (int64, 0 to 738M) | likes (int64, 0 to 11k) | library_name (string, 245 classes) | tags (sequence, length 1 to 4.05k) | pipeline_tag (string, 48 classes) | createdAt (unknown) | card (string, length 1 to 901k) |

|---|---|---|---|---|---|---|---|---|---|

martin-ha/toxic-comment-model | martin-ha | "2022-05-06T02:24:31Z" | 1,984,932 | 49 | transformers | [

"transformers",

"pytorch",

"distilbert",

"text-classification",

"en",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | text-classification | "2022-03-02T23:29:05Z" | ---

language: en

---

## Model description

This model is a fine-tuned version of the [DistilBERT model](https://huggingface.co/transformers/model_doc/distilbert.html) to classify toxic comments.

## How to use

You can use the model with the following code.

```python

from transformers import AutoModelForSequenceClassification, AutoTokenizer, TextClassificationPipeline

model_path = "martin-ha/toxic-comment-model"

tokenizer = AutoTokenizer.from_pretrained(model_path)

model = AutoModelForSequenceClassification.from_pretrained(model_path)

pipeline = TextClassificationPipeline(model=model, tokenizer=tokenizer)

print(pipeline('This is a test text.'))

```

## Limitations and Bias

This model is intended for classifying toxic online comments. One limitation is that it performs poorly on some comments that mention a specific identity subgroup, such as Muslim. The following table shows evaluation scores for different identity groups. The precise meaning of these metrics is described [here](https://www.kaggle.com/c/jigsaw-unintended-bias-in-toxicity-classification/overview/evaluation). In short, each metric shows how well the model performs for a specific group; the larger the number, the better.

| **subgroup** | **subgroup_size** | **subgroup_auc** | **bpsn_auc** | **bnsp_auc** |

| ----------------------------- | ----------------- | ---------------- | ------------ | ------------ |

| muslim | 108 | 0.689 | 0.811 | 0.88 |

| jewish | 40 | 0.749 | 0.86 | 0.825 |

| homosexual_gay_or_lesbian | 56 | 0.795 | 0.706 | 0.972 |

| black | 84 | 0.866 | 0.758 | 0.975 |

| white | 112 | 0.876 | 0.784 | 0.97 |

| female | 306 | 0.898 | 0.887 | 0.948 |

| christian | 231 | 0.904 | 0.917 | 0.93 |

| male | 225 | 0.922 | 0.862 | 0.967 |

| psychiatric_or_mental_illness | 26 | 0.924 | 0.907 | 0.95 |

The table above shows that the model performs poorly for the Muslim and Jewish groups. In fact, if you pass the sentence "Muslims are people who follow or practice Islam, an Abrahamic monotheistic religion." into the model, it will classify it as toxic. Be mindful of this type of potential bias.
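
As a rough illustration of what the three metric columns measure, the sketch below computes subgroup AUC, BPSN AUC (background positive, subgroup negative), and BNSP AUC (background negative, subgroup positive) on a handful of made-up scores. The toy data and the helper names `auc` and `bias_aucs` are hypothetical, and the definitions follow our reading of the Kaggle evaluation page linked above; this is not the code used to produce the table.

```python
def auc(pos_scores, neg_scores):
    """Probability that a random positive outscores a random negative (ties count half)."""
    wins = 0.0
    for p in pos_scores:
        for n in neg_scores:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos_scores) * len(neg_scores))


def bias_aucs(examples):
    """examples: list of (score, is_toxic, in_subgroup) tuples."""
    sub_pos = [s for s, toxic, sub in examples if toxic and sub]
    sub_neg = [s for s, toxic, sub in examples if not toxic and sub]
    bg_pos = [s for s, toxic, sub in examples if toxic and not sub]
    bg_neg = [s for s, toxic, sub in examples if not toxic and not sub]
    return {
        # toxic vs. non-toxic, both inside the subgroup
        "subgroup_auc": auc(sub_pos, sub_neg),
        # toxic background comments vs. non-toxic subgroup comments
        "bpsn_auc": auc(bg_pos, sub_neg),
        # toxic subgroup comments vs. non-toxic background comments
        "bnsp_auc": auc(sub_pos, bg_neg),
    }


# Made-up model scores, purely for illustration.
toy = [
    (0.9, True, True), (0.6, True, True),
    (0.7, False, True), (0.2, False, True),
    (0.8, True, False), (0.5, True, False),
    (0.3, False, False), (0.1, False, False),
]
scores = bias_aucs(toy)
print(scores)
```

In this toy data a non-toxic subgroup comment scores 0.7, higher than one toxic comment in each group, which pulls both `subgroup_auc` and `bpsn_auc` down to 0.75. A low BPSN AUC is the pattern to expect when harmless mentions of an identity are scored as toxic.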

## Training data

The training data comes from this [Kaggle competition](https://www.kaggle.com/c/jigsaw-unintended-bias-in-toxicity-classification/data). We use 10% of the `train.csv` data to train the model.

## Training procedure

See [this documentation and code](https://github.com/MSIA/wenyang_pan_nlp_project_2021) for how we trained the model. Training takes about 3 hours on a P-100 GPU.

## Evaluation results

The model achieves 94% accuracy and a 0.59 F1 score on a held-out test set of 10,000 rows. |

ealvaradob/bert-finetuned-phishing | ealvaradob | "2024-02-07T05:11:47Z" | 1,949,039 | 7 | transformers | [

"transformers",

"pytorch",

"bert",

"text-classification",

"generated_from_trainer",

"phishing",

"BERT",

"en",

"dataset:ealvaradob/phishing-dataset",

"base_model:bert-large-uncased",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | text-classification | "2023-12-20T18:31:54Z" | ---

license: apache-2.0

base_model: bert-large-uncased

tags:

- generated_from_trainer

- phishing

- BERT

metrics:

- accuracy

- precision

- recall

model-index:

- name: bert-finetuned-phishing

results: []

widget:

- text: https://www.verif22.com

example_title: Phishing URL

- text: Dear colleague, An important update about your email has exceeded your

storage limit. You will not be able to send or receive all of your messages.

We will close all older versions of our Mailbox as of Friday, June 12, 2023.

To activate and complete the required information click here (https://ec-ec.squarespace.com).

Account must be reactivated today to regenerate new space. Management Team

example_title: Phishing Email

- text: You have access to FREE Video Streaming in your plan. REGISTER with your email, password and

then select the monthly subscription option. https://bit.ly/3vNrU5r

example_title: Phishing SMS

- text: if(data.selectedIndex > 0){$('#hidCflag').val(data.selectedData.value);};;

var sprypassword1 = new Spry.Widget.ValidationPassword("sprypassword1");

var sprytextfield1 = new Spry.Widget.ValidationTextField("sprytextfield1", "email");

example_title: Phishing Script

- text: Hi, this model is really accurate :)

example_title: Benign message

datasets:

- ealvaradob/phishing-dataset

language:

- en

pipeline_tag: text-classification

---


# BERT FINETUNED ON PHISHING DETECTION

This model is a fine-tuned version of [bert-large-uncased](https://huggingface.co/bert-large-uncased) on a [phishing dataset](https://huggingface.co/datasets/ealvaradob/phishing-dataset),

capable of detecting phishing in its four most common forms: URLs, Emails, SMS messages and even websites.

It achieves the following results on the evaluation set:

- Loss: 0.1953

- Accuracy: 0.9717

- Precision: 0.9658

- Recall: 0.9670

- False Positive Rate: 0.0249
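
For readers who want to relate the four metrics above to one another, here is a minimal sketch that derives them from confusion-matrix counts. The counts are invented for illustration and are not taken from the evaluation set; `classification_metrics` is a hypothetical helper name.

```python
def classification_metrics(tp, fp, tn, fn):
    """Derive the reported metrics from confusion-matrix counts (phishing = positive class)."""
    return {
        "accuracy": (tp + tn) / (tp + fp + tn + fn),
        "precision": tp / (tp + fp),           # flagged items that really are phishing
        "recall": tp / (tp + fn),              # phishing items that were flagged
        "false_positive_rate": fp / (fp + tn), # benign items wrongly flagged
    }


# Invented counts, for illustration only.
metrics = classification_metrics(tp=90, fp=5, tn=195, fn=10)
for name, value in metrics.items():
    print(f"{name}: {value:.4f}")
```

Precision and the false positive rate both depend on the number of false positives, but they use different denominators, so a model can have a low false positive rate and still lose precision when benign items greatly outnumber phishing items.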

## Model description

BERT is a transformers model pretrained on a large corpus of English data in a self-supervised fashion.

This means it was pretrained on the raw texts only, with no humans labelling them in any way (which is why

it can use lots of publicly available data) with an automatic process to generate inputs and labels from

those texts.

This model has the following configuration:

- 24-layer

- 1024 hidden dimension

- 16 attention heads

- 336M parameters
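
The parameter figure can be roughly reproduced from the configuration listed above. The sketch below counts the weights of a BERT-style encoder; the vocabulary size (30522), the 512 positions, the 2 segment types, and the feed-forward width of 4 x hidden are assumptions based on the standard `bert-large-uncased` configuration and are not stated in this card. The classification head is not included.

```python
def bert_encoder_params(layers, hidden, vocab=30522, max_positions=512,
                        type_vocab=2, intermediate=None):
    """Count weights and biases of a BERT-style encoder (embeddings, layers, pooler)."""
    intermediate = intermediate or 4 * hidden
    layer_norm = 2 * hidden  # scale and shift vectors
    embeddings = (vocab + max_positions + type_vocab) * hidden + layer_norm
    # Query, key, value and output projections. The number of heads only
    # splits these matrices, so it does not change the count.
    attention = 4 * (hidden * hidden + hidden) + layer_norm
    feed_forward = (
        (hidden * intermediate + intermediate)
        + (intermediate * hidden + hidden)
        + layer_norm
    )
    pooler = hidden * hidden + hidden
    return embeddings + layers * (attention + feed_forward) + pooler


total = bert_encoder_params(layers=24, hidden=1024)
print(f"{total / 1e6:.1f}M parameters")
```

Under these assumptions the count comes out at about 335M, close to the 336M quoted above; the small gap presumably depends on which heads are included and how the figure was rounded.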

## Motivation and Purpose

Phishing is one of the most frequent and most expensive cyber-attacks according to several security reports.

This model aims to efficiently and accurately prevent phishing attacks against individuals and organizations.

To achieve it, BERT was trained on a diverse and robust dataset containing: URLs, SMS Messages, Emails and

Websites, which allows the model to extend its detection capability beyond the usual and to be used in various

contexts.

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 4

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy | Precision | Recall | False Positive Rate |

|:-------------:|:-----:|:-----:|:---------------:|:--------:|:---------:|:------:|:-------------------:|

| 0.1487 | 1.0 | 3866 | 0.1454 | 0.9596 | 0.9709 | 0.9320 | 0.0203 |

| 0.0805 | 2.0 | 7732 | 0.1389 | 0.9691 | 0.9663 | 0.9601 | 0.0243 |

| 0.0389 | 3.0 | 11598 | 0.1779 | 0.9683 | 0.9778 | 0.9461 | 0.0156 |

| 0.0091 | 4.0 | 15464 | 0.1953 | 0.9717 | 0.9658 | 0.9670 | 0.0249 |
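
To make the `linear` scheduler setting concrete, the sketch below evaluates a linearly decaying learning rate at the step counts shown in the table. It assumes zero warmup steps and decay to exactly zero at the final step, neither of which is stated in the card; `linear_lr` is a hypothetical helper, not the Trainer's own implementation.

```python
BASE_LR = 2e-5
TOTAL_STEPS = 15464  # last step in the table: 4 epochs x 3866 steps


def linear_lr(step, base_lr=BASE_LR, total_steps=TOTAL_STEPS, warmup_steps=0):
    """Linear warmup (if any) followed by linear decay to zero."""
    if step < warmup_steps:
        return base_lr * step / max(1, warmup_steps)
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)


for epoch, step in enumerate((3866, 7732, 11598, 15464), start=1):
    print(f"epoch {epoch}: step {step}, lr {linear_lr(step):.2e}")

# Upper bound on training examples seen per epoch, assuming a single device
# and no gradient accumulation (both assumptions).
print(3866 * 16)
```

Under these assumptions the learning rate has halved by the end of epoch 2 and reaches zero at step 15464. Most of the drop in training loss during the last two epochs therefore happens at small step sizes, while the validation loss rises from 0.1389 to 0.1953.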

### Framework versions

- Transformers 4.34.1

- Pytorch 2.1.1+cu121

- Datasets 2.14.6

- Tokenizers 0.14.1 |

nlpconnect/vit-gpt2-image-captioning | nlpconnect | "2023-02-27T15:00:09Z" | 1,942,149 | 765 | transformers | [

"transformers",

"pytorch",

"vision-encoder-decoder",

"image-to-text",

"image-captioning",

"doi:10.57967/hf/0222",

"license:apache-2.0",

"endpoints_compatible",

"region:us"

] | image-to-text | "2022-03-02T23:29:05Z" | ---

tags:

- image-to-text

- image-captioning

license: apache-2.0

widget:

- src: https://huggingface.co/datasets/mishig/sample_images/resolve/main/savanna.jpg

example_title: Savanna

- src: https://huggingface.co/datasets/mishig/sample_images/resolve/main/football-match.jpg

example_title: Football Match

- src: https://huggingface.co/datasets/mishig/sample_images/resolve/main/airport.jpg

example_title: Airport

---

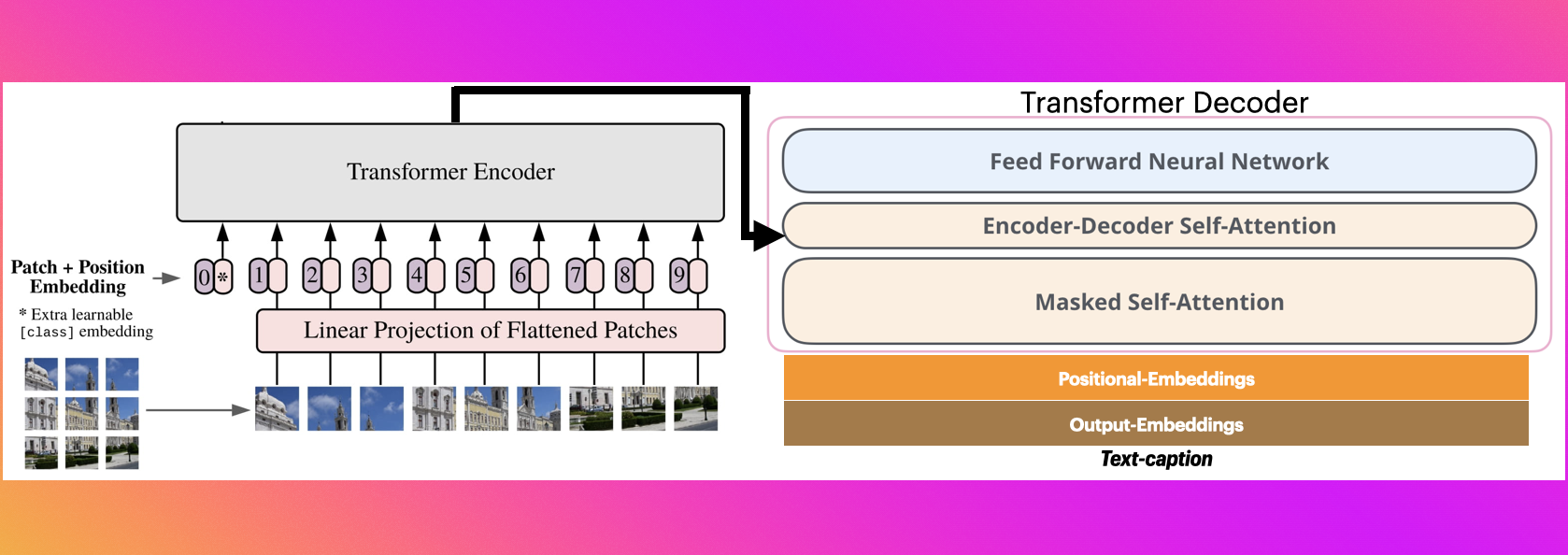

# nlpconnect/vit-gpt2-image-captioning

This is an image captioning model trained by @ydshieh in [Flax](https://github.com/huggingface/transformers/tree/main/examples/flax/image-captioning). This is the PyTorch version of [this checkpoint](https://huggingface.co/ydshieh/vit-gpt2-coco-en-ckpts).

# The Illustrated Image Captioning using transformers

* https://ankur3107.github.io/blogs/the-illustrated-image-captioning-using-transformers/

# Sample running code

```python

from transformers import VisionEncoderDecoderModel, ViTImageProcessor, AutoTokenizer

import torch

from PIL import Image

model = VisionEncoderDecoderModel.from_pretrained("nlpconnect/vit-gpt2-image-captioning")

feature_extractor = ViTImageProcessor.from_pretrained("nlpconnect/vit-gpt2-image-captioning")

tokenizer = AutoTokenizer.from_pretrained("nlpconnect/vit-gpt2-image-captioning")

device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

model.to(device)

max_length = 16

num_beams = 4

gen_kwargs = {"max_length": max_length, "num_beams": num_beams}

def predict_step(image_paths):
    images = []
    for image_path in image_paths:
        i_image = Image.open(image_path)
        # The image processor expects 3-channel input.
        if i_image.mode != "RGB":
            i_image = i_image.convert(mode="RGB")

        images.append(i_image)

    pixel_values = feature_extractor(images=images, return_tensors="pt").pixel_values
    pixel_values = pixel_values.to(device)

    output_ids = model.generate(pixel_values, **gen_kwargs)

    preds = tokenizer.batch_decode(output_ids, skip_special_tokens=True)
    preds = [pred.strip() for pred in preds]
    return preds

predict_step(['doctor.e16ba4e4.jpg']) # ['a woman in a hospital bed with a woman in a hospital bed']

```

# Sample running code using transformers pipeline

```python

from transformers import pipeline

image_to_text = pipeline("image-to-text", model="nlpconnect/vit-gpt2-image-captioning")

image_to_text("https://ankur3107.github.io/assets/images/image-captioning-example.png")

# [{'generated_text': 'a soccer game with a player jumping to catch the ball '}]

```

# Contact for any help

* https://huggingface.co/ankur310794

* https://twitter.com/ankur310794

* http://github.com/ankur3107

* https://www.linkedin.com/in/ankur310794 |

Helsinki-NLP/opus-mt-zh-en | Helsinki-NLP | "2023-08-16T12:09:10Z" | 1,870,002 | 397 | transformers | [

"transformers",

"pytorch",

"tf",

"rust",

"marian",

"text2text-generation",

"translation",

"zh",

"en",

"license:cc-by-4.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | translation | "2022-03-02T23:29:04Z" | ---

language:

- zh

- en

tags:

- translation

license: cc-by-4.0

---

### zho-eng

## Table of Contents

- [Model Details](#model-details)

- [Uses](#uses)

- [Risks, Limitations and Biases](#risks-limitations-and-biases)

- [Training](#training)

- [Evaluation](#evaluation)

- [Citation Information](#citation-information)

- [How to Get Started With the Model](#how-to-get-started-with-the-model)

## Model Details

- **Model Description:**

- **Developed by:** Language Technology Research Group at the University of Helsinki

- **Model Type:** Translation

- **Language(s):**

- Source Language: Chinese

- Target Language: English

- **License:** CC-BY-4.0

- **Resources for more information:**

- [GitHub Repo](https://github.com/Helsinki-NLP/OPUS-MT-train)

## Uses

#### Direct Use

This model can be used for translation and text-to-text generation.

## Risks, Limitations and Biases

**CONTENT WARNING: Readers should be aware this section contains content that is disturbing, offensive, and can propagate historical and current stereotypes.**

Significant research has explored bias and fairness issues with language models (see, e.g., [Sheng et al. (2021)](https://aclanthology.org/2021.acl-long.330.pdf) and [Bender et al. (2021)](https://dl.acm.org/doi/pdf/10.1145/3442188.3445922)).

Further details about the dataset for this model can be found in the OPUS readme: [zho-eng](https://github.com/Helsinki-NLP/Tatoeba-Challenge/tree/master/models/zho-eng/README.md)

## Training

#### System Information

* helsinki_git_sha: 480fcbe0ee1bf4774bcbe6226ad9f58e63f6c535

* transformers_git_sha: 2207e5d8cb224e954a7cba69fa4ac2309e9ff30b

* port_machine: brutasse

* port_time: 2020-08-21-14:41

* src_multilingual: False

* tgt_multilingual: False

#### Training Data

##### Preprocessing

* pre-processing: normalization + SentencePiece (spm32k,spm32k)

* ref_len: 82826.0

* dataset: [opus](https://github.com/Helsinki-NLP/Opus-MT)

* download original weights: [opus-2020-07-17.zip](https://object.pouta.csc.fi/Tatoeba-MT-models/zho-eng/opus-2020-07-17.zip)

* test set translations: [opus-2020-07-17.test.txt](https://object.pouta.csc.fi/Tatoeba-MT-models/zho-eng/opus-2020-07-17.test.txt)

## Evaluation

#### Results

* test set scores: [opus-2020-07-17.eval.txt](https://object.pouta.csc.fi/Tatoeba-MT-models/zho-eng/opus-2020-07-17.eval.txt)

* brevity_penalty: 0.948
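
The brevity penalty can be related to the `ref_len` value listed under Preprocessing. The sketch below uses the standard BLEU definition, BP = exp(1 - r/c) when the system output length c is shorter than the reference length r, and inverts it to estimate c. Treating `ref_len` as the r used for this score is an assumption, and the helper names are hypothetical.

```python
import math


def brevity_penalty(hyp_len, ref_len):
    """Standard BLEU brevity penalty."""
    if hyp_len >= ref_len:
        return 1.0
    return math.exp(1.0 - ref_len / hyp_len)


def hyp_len_from_bp(bp, ref_len):
    """Invert the penalty for bp < 1: c = r / (1 - ln(bp))."""
    return ref_len / (1.0 - math.log(bp))


ref_len = 82826.0
implied_hyp_len = hyp_len_from_bp(0.948, ref_len)
print(round(implied_hyp_len))                                # implied output length in tokens
print(round(brevity_penalty(implied_hyp_len, ref_len), 3))   # recovers the reported penalty
```

Under this reading, the system translations are roughly 5% shorter than the references (about 78.6k tokens against 82.8k), which is what costs the model the last few percent of its BLEU score.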

## Benchmarks

| testset | BLEU | chr-F |

|-----------------------|-------|-------|

| Tatoeba-test.zho.eng | 36.1 | 0.548 |

## Citation Information

```bibtex

@InProceedings{TiedemannThottingal:EAMT2020,

author = {J{\"o}rg Tiedemann and Santhosh Thottingal},

title = {{OPUS-MT} — {B}uilding open translation services for the {W}orld},

booktitle = {Proceedings of the 22nd Annual Conference of the European Association for Machine Translation (EAMT)},

year = {2020},

address = {Lisbon, Portugal}

}

```

## How to Get Started With the Model

```python

from transformers import AutoTokenizer, AutoModelForSeq2SeqLM

tokenizer = AutoTokenizer.from_pretrained("Helsinki-NLP/opus-mt-zh-en")

model = AutoModelForSeq2SeqLM.from_pretrained("Helsinki-NLP/opus-mt-zh-en")

```

|

assemblyai/bert-large-uncased-sst2 | assemblyai | "2021-06-14T22:04:39Z" | 1,844,046 | 0 | transformers | [

"transformers",

"pytorch",

"bert",

"text-classification",

"arxiv:1810.04805",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | text-classification | "2022-03-02T23:29:05Z" | # BERT-Large-Uncased for Sentiment Analysis

This model is a fine-tuned version of [bert-large-uncased](https://huggingface.co/bert-large-uncased), originally released in ["BERT: Pre-training of Deep Bidirectional Transformers for Language Understanding"](https://arxiv.org/abs/1810.04805), and trained on the [Stanford Sentiment Treebank v2 (SST2)](https://nlp.stanford.edu/sentiment/), part of the [General Language Understanding Evaluation (GLUE)](https://gluebenchmark.com) benchmark. This model was fine-tuned by the team at [AssemblyAI](https://www.assemblyai.com) and is released with the [corresponding blog post]().

## Usage

To download and utilize this model for sentiment analysis please execute the following:

```python

import torch.nn.functional as F

from transformers import AutoTokenizer, AutoModelForSequenceClassification

tokenizer = AutoTokenizer.from_pretrained("assemblyai/bert-large-uncased-sst2")

model = AutoModelForSequenceClassification.from_pretrained("assemblyai/bert-large-uncased-sst2")

tokenized_segments = tokenizer(["AssemblyAI is the best speech-to-text API for modern developers with performance being second to none!"], return_tensors="pt", padding=True, truncation=True)

tokenized_segments_input_ids, tokenized_segments_attention_mask = tokenized_segments.input_ids, tokenized_segments.attention_mask

model_predictions = F.softmax(model(input_ids=tokenized_segments_input_ids, attention_mask=tokenized_segments_attention_mask)['logits'], dim=1)

print("Positive probability: "+str(model_predictions[0][1].item()*100)+"%")

print("Negative probability: "+str(model_predictions[0][0].item()*100)+"%")

```

For questions about how to use this model feel free to contact the team at [AssemblyAI](https://www.assemblyai.com)! |

ai-forever/sbert_large_nlu_ru | ai-forever | "2024-06-13T07:37:03Z" | 1,757,508 | 49 | transformers | [

"transformers",

"safetensors",

"bert",

"feature-extraction",

"PyTorch",

"Transformers",

"ru",

"endpoints_compatible",

"region:us"

] | feature-extraction | "2022-03-02T23:29:05Z" | ---

language:

- ru

tags:

- PyTorch

- Transformers

---

# BERT large model (uncased) for Sentence Embeddings in Russian language.

The model is described [in this article](https://habr.com/ru/company/sberdevices/blog/527576/)

For better quality, use mean token embeddings.

## Usage (HuggingFace Models Repository)

You can use the model directly from the model repository to compute sentence embeddings:

```python

from transformers import AutoTokenizer, AutoModel

import torch

#Mean Pooling - Take attention mask into account for correct averaging

def mean_pooling(model_output, attention_mask):
    token_embeddings = model_output[0]  # First element of model_output contains all token embeddings
    input_mask_expanded = attention_mask.unsqueeze(-1).expand(token_embeddings.size()).float()
    sum_embeddings = torch.sum(token_embeddings * input_mask_expanded, 1)
    sum_mask = torch.clamp(input_mask_expanded.sum(1), min=1e-9)
    return sum_embeddings / sum_mask

#Sentences we want sentence embeddings for

sentences = ['Привет! Как твои дела?',

'А правда, что 42 твое любимое число?']

#Load AutoModel from huggingface model repository

tokenizer = AutoTokenizer.from_pretrained("ai-forever/sbert_large_nlu_ru")

model = AutoModel.from_pretrained("ai-forever/sbert_large_nlu_ru")

#Tokenize sentences

encoded_input = tokenizer(sentences, padding=True, truncation=True, max_length=24, return_tensors='pt')

#Compute token embeddings

with torch.no_grad():
    model_output = model(**encoded_input)

#Perform pooling. In this case, mean pooling

sentence_embeddings = mean_pooling(model_output, encoded_input['attention_mask'])

```

# Authors

+ [SberDevices](https://sberdevices.ru/) Team.

+ Aleksandr Abramov: [HF profile](https://huggingface.co/Andrilko), [Github](https://github.com/Ab1992ao), [Kaggle Competitions Master](https://www.kaggle.com/andrilko);

+ Denis Antykhov: [Github](https://github.com/gaphex); |

ProsusAI/finbert | ProsusAI | "2023-05-23T12:43:35Z" | 1,736,176 | 575 | transformers | [

"transformers",

"pytorch",

"tf",

"jax",

"bert",

"text-classification",

"financial-sentiment-analysis",

"sentiment-analysis",

"en",

"arxiv:1908.10063",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | text-classification | "2022-03-02T23:29:04Z" | ---

language: "en"

tags:

- financial-sentiment-analysis

- sentiment-analysis

widget:

- text: "Stocks rallied and the British pound gained."

---

FinBERT is a pre-trained NLP model to analyze sentiment of financial text. It is built by further training the BERT language model in the finance domain, using a large financial corpus and thereby fine-tuning it for financial sentiment classification. [Financial PhraseBank](https://www.researchgate.net/publication/251231107_Good_Debt_or_Bad_Debt_Detecting_Semantic_Orientations_in_Economic_Texts) by Malo et al. (2014) is used for fine-tuning. For more details, please see the paper [FinBERT: Financial Sentiment Analysis with Pre-trained Language Models](https://arxiv.org/abs/1908.10063) and our related [blog post](https://medium.com/prosus-ai-tech-blog/finbert-financial-sentiment-analysis-with-bert-b277a3607101) on Medium.

The model will give softmax outputs for three labels: positive, negative or neutral.
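
As a small illustration of what "softmax outputs for three labels" means, the sketch below converts three raw logits into probabilities and picks the most likely label. The logits are made up, and the label order in `LABELS` is an assumption; check the `id2label` mapping in the model configuration before relying on it.

```python
import math

LABELS = ("positive", "negative", "neutral")  # assumed order, verify against the model config


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


logits = [2.1, -0.7, 0.4]  # made-up logits for one sentence
probs = softmax(logits)
best_label, best_prob = max(zip(LABELS, probs), key=lambda pair: pair[1])
print(dict(zip(LABELS, (round(p, 3) for p in probs))))
print(best_label)
```

The three probabilities always sum to 1, so a sentence the model finds ambiguous shows up as a flat distribution, not as a confident "neutral".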

---

About Prosus

Prosus is a global consumer internet group and one of the largest technology investors in the world. Operating and investing globally in markets with long-term growth potential, Prosus builds leading consumer internet companies that empower people and enrich communities. For more information, please visit www.prosus.com.

Contact information

Please contact Dogu Araci dogu.araci[at]prosus[dot]com and Zulkuf Genc zulkuf.genc[at]prosus[dot]com about any FinBERT related issues and questions.

|

timm/efficientnet_b3.ra2_in1k | timm | "2023-04-27T21:10:28Z" | 1,723,022 | 3 | timm | [

"timm",

"pytorch",

"safetensors",

"image-classification",

"dataset:imagenet-1k",

"arxiv:2110.00476",

"arxiv:1905.11946",

"license:apache-2.0",

"region:us"

] | image-classification | "2022-12-12T23:56:39Z" | ---

tags:

- image-classification

- timm

library_name: timm

license: apache-2.0

datasets:

- imagenet-1k

---

# Model card for efficientnet_b3.ra2_in1k

An EfficientNet image classification model. Trained on ImageNet-1k in `timm` using the recipe template described below.

Recipe details:

* RandAugment `RA2` recipe. Inspired by and evolved from EfficientNet RandAugment recipes. Published as `B` recipe in [ResNet Strikes Back](https://arxiv.org/abs/2110.00476).

* RMSProp (TF 1.0 behaviour) optimizer, EMA weight averaging

* Step (exponential decay w/ staircase) LR schedule with warmup
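
The last two recipe items can be sketched in a few lines. The code below is a generic illustration of a staircase exponential-decay schedule with linear warmup and of an exponential moving average (EMA) of weights; it is not `timm`'s scheduler or EMA implementation, and every numeric value (base rate, decay rate, epoch counts, EMA decay) is a hypothetical placeholder, since the card does not list them.

```python
def staircase_lr(epoch, base_lr=0.1, decay_rate=0.5, decay_epochs=2,
                 warmup_epochs=3, warmup_lr=1e-4):
    """Linear warmup, then multiply the rate by decay_rate every decay_epochs epochs."""
    if epoch < warmup_epochs:
        return warmup_lr + (base_lr - warmup_lr) * epoch / warmup_epochs
    # Integer division produces the "staircase": the rate is constant within each step.
    return base_lr * decay_rate ** ((epoch - warmup_epochs) // decay_epochs)


def ema_update(ema_weights, weights, decay=0.999):
    """One EMA step: keep most of the running average, blend in a little of the new weights."""
    return [decay * e + (1.0 - decay) * w for e, w in zip(ema_weights, weights)]


for epoch in range(8):
    print(epoch, staircase_lr(epoch))

print(ema_update([1.0, 2.0], [0.0, 0.0], decay=0.9))
```

The averaged weights, not the raw ones, are typically the ones evaluated or published when EMA is used, because they smooth out the noise of the final optimizer steps.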

## Model Details

- **Model Type:** Image classification / feature backbone

- **Model Stats:**

- Params (M): 12.2

- GMACs: 1.6

- Activations (M): 21.5

- Image size: train = 288 x 288, test = 320 x 320

- **Papers:**

- EfficientNet: Rethinking Model Scaling for Convolutional Neural Networks: https://arxiv.org/abs/1905.11946

- ResNet strikes back: An improved training procedure in timm: https://arxiv.org/abs/2110.00476

- **Dataset:** ImageNet-1k

- **Original:** https://github.com/huggingface/pytorch-image-models

## Model Usage

### Image Classification

```python

from urllib.request import urlopen

from PIL import Image

import timm
import torch  # needed for torch.topk below

img = Image.open(urlopen(

'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'

))

model = timm.create_model('efficientnet_b3.ra2_in1k', pretrained=True)

model = model.eval()

# get model specific transforms (normalization, resize)

data_config = timm.data.resolve_model_data_config(model)

transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0)) # unsqueeze single image into batch of 1

top5_probabilities, top5_class_indices = torch.topk(output.softmax(dim=1) * 100, k=5)

```

### Feature Map Extraction

```python

from urllib.request import urlopen

from PIL import Image

import timm

img = Image.open(urlopen(

'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'

))

model = timm.create_model(

'efficientnet_b3.ra2_in1k',

pretrained=True,

features_only=True,

)

model = model.eval()

# get model specific transforms (normalization, resize)

data_config = timm.data.resolve_model_data_config(model)

transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0)) # unsqueeze single image into batch of 1

for o in output:
    # print shape of each feature map in output
    # e.g.:
    #  torch.Size([1, 24, 144, 144])
    #  torch.Size([1, 32, 72, 72])
    #  torch.Size([1, 48, 36, 36])
    #  torch.Size([1, 136, 18, 18])
    #  torch.Size([1, 384, 9, 9])
    print(o.shape)

```
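
The spatial sizes in the comments above are consistent with a 288 x 288 input reduced by strides of 2, 4, 8, 16, and 32. The sketch below reproduces that arithmetic; the stride list is inferred from those shapes and is not read from the model, and `feature_map_sizes` is a hypothetical helper.

```python
def feature_map_sizes(image_size, strides=(2, 4, 8, 16, 32)):
    """Spatial side length of each feature map, assuming exact division by the stride."""
    return [image_size // stride for stride in strides]


print(feature_map_sizes(288))  # [144, 72, 36, 18, 9]
print(feature_map_sizes(320))  # [160, 80, 40, 20, 10]
```

The second call shows what to expect if images are fed at the 320 x 320 test resolution listed under Model Stats: the channel counts stay the same, and only the spatial dimensions grow.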

### Image Embeddings

```python

from urllib.request import urlopen

from PIL import Image

import timm

img = Image.open(urlopen(

'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'

))

model = timm.create_model(

'efficientnet_b3.ra2_in1k',

pretrained=True,

num_classes=0, # remove classifier nn.Linear

)

model = model.eval()

# get model specific transforms (normalization, resize)

data_config = timm.data.resolve_model_data_config(model)

transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0)) # output is (batch_size, num_features) shaped tensor

# or equivalently (without needing to set num_classes=0)

output = model.forward_features(transforms(img).unsqueeze(0))

# output is unpooled, a (1, 1536, 9, 9) shaped tensor

output = model.forward_head(output, pre_logits=True)

# output is a (1, num_features) shaped tensor

```

## Model Comparison

Explore the dataset and runtime metrics of this model in timm [model results](https://github.com/huggingface/pytorch-image-models/tree/main/results).

## Citation

```bibtex

@inproceedings{tan2019efficientnet,

title={Efficientnet: Rethinking model scaling for convolutional neural networks},

author={Tan, Mingxing and Le, Quoc},

booktitle={International conference on machine learning},

pages={6105--6114},

year={2019},

organization={PMLR}

}

```

```bibtex

@misc{rw2019timm,

author = {Ross Wightman},

title = {PyTorch Image Models},

year = {2019},

publisher = {GitHub},

journal = {GitHub repository},

doi = {10.5281/zenodo.4414861},

howpublished = {\url{https://github.com/huggingface/pytorch-image-models}}

}

```

```bibtex

@inproceedings{wightman2021resnet,

title={ResNet strikes back: An improved training procedure in timm},

author={Wightman, Ross and Touvron, Hugo and Jegou, Herve},

booktitle={NeurIPS 2021 Workshop on ImageNet: Past, Present, and Future}

}

```

|

EmergentMethods/gliner_medium_news-v2.1 | EmergentMethods | "2024-06-18T08:33:15Z" | 1,719,437 | 34 | gliner | [

"gliner",

"pytorch",

"token-classification",

"en",

"dataset:EmergentMethods/AskNews-NER-v0",

"arxiv:2406.10258",

"license:apache-2.0",

"region:us"

] | token-classification | "2024-04-17T09:05:00Z" | ---

license: apache-2.0

datasets:

- EmergentMethods/AskNews-NER-v0

tags:

- gliner

language:

- en

pipeline_tag: token-classification

---

# Model Card for gliner_medium_news-v2.1

This model is a fine-tune of [GLiNER](https://huggingface.co/urchade/gliner_medium-v2.1) aimed at improving accuracy across a broad range of topics, especially with respect to long-context news entity extraction. As shown in the table below, these fine-tunes improved upon the base GLiNER model zero-shot accuracy by up to 7.5% across 18 benchmark datasets.

The underlying dataset, [AskNews-NER-v0](https://huggingface.co/datasets/EmergentMethods/AskNews-NER-v0), was engineered with the objective of diversifying global perspectives by enforcing country/language/topic/temporal diversity. All data used to fine-tune this model was synthetically generated. WizardLM 13B v1.2 was used for translation/summarization of open-web news articles, while Llama3 70b instruct was used for entity extraction. Both the diversification and fine-tuning methods are presented in our paper on [ArXiv](https://arxiv.org/abs/2406.10258).

# Usage

```python

from gliner import GLiNER

model = GLiNER.from_pretrained("EmergentMethods/gliner_medium_news-v2.1")

text = """

The Chihuahua State Public Security Secretariat (SSPE) arrested 35-year-old Salomón C. T. in Ciudad Juárez, found in possession of a stolen vehicle, a white GMC Yukon, which was reported stolen in the city's streets. The arrest was made by intelligence and police analysis personnel during an investigation in the border city. The arrest is related to a previous detention on February 6, which involved armed men in a private vehicle. The detainee and the vehicle were turned over to the Chihuahua State Attorney General's Office for further investigation into the case.

"""

labels = ["person", "location", "date", "event", "facility", "vehicle", "number", "organization"]

entities = model.predict_entities(text, labels)

for entity in entities:
    print(entity["text"], "=>", entity["label"])

```

Output:

```

Chihuahua State Public Security Secretariat => organization

SSPE => organization

35-year-old => number

Salomón C. T. => person

Ciudad Juárez => location

GMC Yukon => vehicle

February 6 => date

Chihuahua State Attorney General's Office => organization

```
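
A common next step is to group the extracted entities by label. The sketch below does this for a list of dictionaries with the `text` and `label` keys used in the loop above; the sample list is copied from part of the output shown, and `group_by_label` is a hypothetical helper, not part of the `gliner` package.

```python
from collections import defaultdict


def group_by_label(entities):
    """Collect unique entity texts under each label, preserving first-seen order."""
    grouped = defaultdict(list)
    for entity in entities:
        texts = grouped[entity["label"]]
        if entity["text"] not in texts:
            texts.append(entity["text"])
    return dict(grouped)


# A subset of the output above, written out by hand.
sample = [
    {"text": "Chihuahua State Public Security Secretariat", "label": "organization"},
    {"text": "SSPE", "label": "organization"},
    {"text": "Salomón C. T.", "label": "person"},
    {"text": "Ciudad Juárez", "label": "location"},
    {"text": "GMC Yukon", "label": "vehicle"},
    {"text": "February 6", "label": "date"},
]
grouped = group_by_label(sample)
for label, texts in grouped.items():
    print(label, "=>", texts)
```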

## Model Details

### Model Description


The synthetic data underlying this news fine-tune was pulled from the [AskNews API](https://docs.asknews.app). We enforced diversity across country/language/topic/time.

Countries:

Entity types:

Topics:

- **Developed by:** [Emergent Methods](https://emergentmethods.ai/)

- **Funded by:** [Emergent Methods](https://emergentmethods.ai/)

- **Shared by:** [Emergent Methods](https://emergentmethods.ai/)

- **Model type:** microsoft/deberta

- **Language(s) (NLP):** English (en) (English texts and translations from Spanish (es), Portuguese (pt), German (de), Russian (ru), French (fr), Arabic (ar), Italian (it), Ukrainian (uk), Norwegian (no), Swedish (sv), Danish (da)).

- **License:** Apache 2.0

- **Finetuned from model:** [GLiNER](https://huggingface.co/urchade/gliner_medium-v2.1)

### Model Sources [optional]


- **Repository:** To be added

- **Paper:** To be added

- **Demo:** To be added

## Uses


### Direct Use


As the name suggests, this model is aimed at generalist entity extraction. Although we used news to fine-tune this model, it improved accuracy across 18 benchmark datasets by up to 7.5%. This means that the broad and diversified underlying dataset has helped it to recognize and extract more entity types.

This model is shockingly compact, and can be used for high-throughput production use cases. This is another reason we have licensed it as Apache 2.0. Currently, [AskNews](https://asknews.app) is using this fine-tune for entity extraction in their system.

## Bias, Risks, and Limitations


Although the goal of the dataset is to reduce bias and improve diversity, it is still biased toward western languages and countries. This limitation originates from the abilities of Llama2 for the translation and summary generation. Further, any bias originating in the Llama2 training data will also be present in this dataset, since Llama2 was used to summarize the open-web articles. Likewise, any biases present in Llama3 will be present in this dataset, since Llama3 was used to extract entities from the summaries.

## How to Get Started with the Model

Use the code below to get started with the model.

## Training Details


The training dataset is [AskNews-NER-v0](https://huggingface.co/datasets/EmergentMethods/AskNews-NER-v0).

Other training details can be found in the [companion paper](https://arxiv.org/abs/2406.10258).

## Environmental Impact


- **Hardware Type:** 1xA4500

- **Hours used:** 10

- **Carbon Emitted:** 0.6 kg (According to [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute))

## Citation


**BibTeX:**

To be added

**APA:**

To be added

## Model Authors

Elin Törnquist, Emergent Methods elin at emergentmethods.ai

Robert Caulk, Emergent Methods rob at emergentmethods.ai

## Model Contact

Elin Törnquist, Emergent Methods elin at emergentmethods.ai

Robert Caulk, Emergent Methods rob at emergentmethods.ai |

albert/albert-base-v2 | albert | "2024-02-19T10:58:14Z" | 1,683,997 | 96 | transformers | [

"transformers",

"pytorch",

"tf",

"jax",

"rust",

"safetensors",

"albert",

"fill-mask",

"en",

"dataset:bookcorpus",

"dataset:wikipedia",

"arxiv:1909.11942",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | fill-mask | "2022-03-02T23:29:04Z" | ---

language: en

license: apache-2.0

datasets:

- bookcorpus

- wikipedia

---

# ALBERT Base v2

Pretrained model on English language using a masked language modeling (MLM) objective. It was introduced in

[this paper](https://arxiv.org/abs/1909.11942) and first released in

[this repository](https://github.com/google-research/albert). This model, as all ALBERT models, is uncased: it does not make a difference

between english and English.

Disclaimer: The team releasing ALBERT did not write a model card for this model so this model card has been written by

the Hugging Face team.

## Model description

ALBERT is a transformers model pretrained on a large corpus of English data in a self-supervised fashion. This means it

was pretrained on the raw texts only, with no humans labelling them in any way (which is why it can use lots of

publicly available data) with an automatic process to generate inputs and labels from those texts. More precisely, it

was pretrained with two objectives:

- Masked language modeling (MLM): taking a sentence, the model randomly masks 15% of the words in the input then runs

the entire masked sentence through the model and has to predict the masked words. This is different from traditional

recurrent neural networks (RNNs) that usually see the words one after the other, or from autoregressive models like

GPT which internally mask the future tokens. It allows the model to learn a bidirectional representation of the

sentence.

- Sentence Ordering Prediction (SOP): ALBERT uses a pretraining loss based on predicting the ordering of two consecutive segments of text.

This way, the model learns an inner representation of the English language that can then be used to extract features

useful for downstream tasks: if you have a dataset of labeled sentences for instance, you can train a standard

classifier using the features produced by the ALBERT model as inputs.
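
As a rough illustration of the sentence ordering objective described above, the following sketch builds one training example from two consecutive text segments. The function name `make_sop_example` and the label convention (1 for original order, 0 for swapped) are assumptions made for this example, not details taken from the ALBERT code base.

```python
import random

def make_sop_example(segment_a, segment_b, rng):
    """Return (first, second, label) for two consecutive segments.

    label is 1 when the segments are kept in their original order and
    0 when they are swapped (hypothetical convention for this sketch).
    """
    if rng.random() < 0.5:
        return segment_a, segment_b, 1
    return segment_b, segment_a, 0

rng = random.Random(0)
seg_a = "The man opened the door."
seg_b = "He walked into the room."
examples = [make_sop_example(seg_a, seg_b, rng) for _ in range(20)]
```

The model is then trained to predict the label from the concatenated pair, which pushes it to learn how consecutive segments relate to each other.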

ALBERT is particular in that it shares its layers across its Transformer. Therefore, all layers have the same weights. Using repeating layers results in a small memory footprint, however, the computational cost remains similar to a BERT-like architecture with the same number of hidden layers as it has to iterate through the same number of (repeating) layers.

This is the second version of the base model. Version 2 is different from version 1 due to different dropout rates, additional training data, and longer training. It has better results in nearly all downstream tasks.

This model has the following configuration:

- 12 repeating layers

- 128 embedding dimension

- 768 hidden dimension

- 12 attention heads

- 11M parameters
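
To see how layer sharing leads to roughly 11M parameters, here is a back-of-the-envelope count. It is only a sketch: it assumes a feed-forward size of 3072 and the 30,000-token vocabulary mentioned under Preprocessing, and it ignores biases, layer normalization, position embeddings and task heads.

```python
VOCAB_SIZE = 30_000   # from the Preprocessing section
EMBEDDING_DIM = 128
HIDDEN_DIM = 768
FFN_DIM = 3072        # assumption: 4 x hidden size
NUM_LAYERS = 12

# Factorized embeddings: vocabulary -> 128, then a 128 -> 768 projection.
embedding_params = VOCAB_SIZE * EMBEDDING_DIM + EMBEDDING_DIM * HIDDEN_DIM

# One Transformer layer: four attention projections plus two feed-forward matrices.
attention_params = 4 * HIDDEN_DIM * HIDDEN_DIM
ffn_params = 2 * HIDDEN_DIM * FFN_DIM
layer_params = attention_params + ffn_params

# With sharing, the layer weights are stored once and applied 12 times.
shared_total = embedding_params + layer_params
# Without sharing, each of the 12 layers would store its own weights.
unshared_total = embedding_params + NUM_LAYERS * layer_params

print(f"shared:   {shared_total / 1e6:.1f}M")
print(f"unshared: {unshared_total / 1e6:.1f}M")
```

Under these assumptions the shared count comes out close to the 11M figure listed above, while the forward pass still performs 12 layer applications.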

## Intended uses & limitations

You can use the raw model for either masked language modeling or sentence order prediction, but it's mostly intended to

be fine-tuned on a downstream task. See the [model hub](https://huggingface.co/models?filter=albert) to look for

fine-tuned versions on a task that interests you.

Note that this model is primarily aimed at being fine-tuned on tasks that use the whole sentence (potentially masked)

to make decisions, such as sequence classification, token classification or question answering. For tasks such as text

generation you should look at models like GPT2.

### How to use

You can use this model directly with a pipeline for masked language modeling:

```python

>>> from transformers import pipeline

>>> unmasker = pipeline('fill-mask', model='albert-base-v2')

>>> unmasker("Hello I'm a [MASK] model.")

[

{

"sequence":"[CLS] hello i'm a modeling model.[SEP]",

"score":0.05816134437918663,

"token":12807,

"token_str":"▁modeling"

},

{

"sequence":"[CLS] hello i'm a modelling model.[SEP]",

"score":0.03748830780386925,

"token":23089,

"token_str":"▁modelling"

},

{

"sequence":"[CLS] hello i'm a model model.[SEP]",

"score":0.033725276589393616,

"token":1061,

"token_str":"▁model"

},

{

"sequence":"[CLS] hello i'm a runway model.[SEP]",

"score":0.017313428223133087,

"token":8014,

"token_str":"▁runway"

},

{

"sequence":"[CLS] hello i'm a lingerie model.[SEP]",

"score":0.014405295252799988,

"token":29104,

"token_str":"▁lingerie"

}

]

```

Here is how to use this model to get the features of a given text in PyTorch:

```python

from transformers import AlbertTokenizer, AlbertModel

tokenizer = AlbertTokenizer.from_pretrained('albert-base-v2')

model = AlbertModel.from_pretrained("albert-base-v2")

text = "Replace me by any text you'd like."

encoded_input = tokenizer(text, return_tensors='pt')

output = model(**encoded_input)

```

and in TensorFlow:

```python

from transformers import AlbertTokenizer, TFAlbertModel

tokenizer = AlbertTokenizer.from_pretrained('albert-base-v2')

model = TFAlbertModel.from_pretrained("albert-base-v2")

text = "Replace me by any text you'd like."

encoded_input = tokenizer(text, return_tensors='tf')

output = model(encoded_input)

```

### Limitations and bias

Even if the training data used for this model could be characterized as fairly neutral, this model can have biased

predictions:

```python

>>> from transformers import pipeline

>>> unmasker = pipeline('fill-mask', model='albert-base-v2')

>>> unmasker("The man worked as a [MASK].")

[

{

"sequence":"[CLS] the man worked as a chauffeur.[SEP]",

"score":0.029577180743217468,

"token":28744,

"token_str":"▁chauffeur"

},

{

"sequence":"[CLS] the man worked as a janitor.[SEP]",

"score":0.028865724802017212,

"token":29477,

"token_str":"▁janitor"

},

{

"sequence":"[CLS] the man worked as a shoemaker.[SEP]",

"score":0.02581118606030941,

"token":29024,

"token_str":"▁shoemaker"

},

{

"sequence":"[CLS] the man worked as a blacksmith.[SEP]",

"score":0.01849772222340107,

"token":21238,

"token_str":"▁blacksmith"

},

{

"sequence":"[CLS] the man worked as a lawyer.[SEP]",

"score":0.01820771023631096,

"token":3672,

"token_str":"▁lawyer"

}

]

>>> unmasker("The woman worked as a [MASK].")

[

{

"sequence":"[CLS] the woman worked as a receptionist.[SEP]",

"score":0.04604868218302727,

"token":25331,

"token_str":"▁receptionist"

},

{

"sequence":"[CLS] the woman worked as a janitor.[SEP]",

"score":0.028220869600772858,

"token":29477,

"token_str":"▁janitor"

},

{

"sequence":"[CLS] the woman worked as a paramedic.[SEP]",

"score":0.0261906236410141,

"token":23386,

"token_str":"▁paramedic"

},

{

"sequence":"[CLS] the woman worked as a chauffeur.[SEP]",

"score":0.024797942489385605,

"token":28744,

"token_str":"▁chauffeur"

},

{

"sequence":"[CLS] the woman worked as a waitress.[SEP]",

"score":0.024124596267938614,

"token":13678,

"token_str":"▁waitress"

}

]

```

This bias will also affect all fine-tuned versions of this model.

## Training data

The ALBERT model was pretrained on [BookCorpus](https://yknzhu.wixsite.com/mbweb), a dataset consisting of 11,038

unpublished books and [English Wikipedia](https://en.wikipedia.org/wiki/English_Wikipedia) (excluding lists, tables and

headers).

## Training procedure

### Preprocessing

The texts are lowercased and tokenized using SentencePiece and a vocabulary size of 30,000. The inputs of the model are

then of the form:

```

[CLS] Sentence A [SEP] Sentence B [SEP]

```

### Training

The ALBERT procedure follows the BERT setup.

The details of the masking procedure for each sentence are the following:

- 15% of the tokens are masked.

- In 80% of the cases, the masked tokens are replaced by `[MASK]`.

- In 10% of the cases, the masked tokens are replaced by a random token (different from the one they replace).

- In the 10% remaining cases, the masked tokens are left as is.
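
The masking rules above can be sketched in plain Python. This is a minimal illustration of the 15% / 80-10-10 scheme on a list of string tokens, not the original training code; `mask_tokens` and its arguments are hypothetical names chosen for this example.

```python
import random

def mask_tokens(tokens, vocab, rng, mask_prob=0.15):
    """Apply BERT-style masking; returns (masked_tokens, labels).

    labels[i] holds the original token at selected positions and None elsewhere.
    """
    masked, labels = [], []
    for tok in tokens:
        if rng.random() >= mask_prob:
            # Position not selected: keep the token, nothing to predict.
            masked.append(tok)
            labels.append(None)
            continue
        labels.append(tok)
        roll = rng.random()
        if roll < 0.8:
            masked.append("[MASK]")            # 80%: replace with [MASK]
        elif roll < 0.9:
            candidates = [v for v in vocab if v != tok]
            masked.append(rng.choice(candidates))  # 10%: different random token
        else:
            masked.append(tok)                 # 10%: leave unchanged

    return masked, labels

rng = random.Random(0)
tokens = ["the", "man", "worked", "as", "a", "lawyer"] * 50
vocab = sorted(set(tokens)) + ["dog"]
masked, labels = mask_tokens(tokens, vocab, rng)
```

Only positions with a non-`None` label contribute to the masked language modeling loss.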

## Evaluation results

When fine-tuned on downstream tasks, the ALBERT models achieve the following results:

| | Average | SQuAD1.1 | SQuAD2.0 | MNLI | SST-2 | RACE |

|----------------|----------|----------|----------|----------|----------|----------|

|**V2** | | | | | | |

|ALBERT-base |82.3 |90.2/83.2 |82.1/79.3 |84.6 |92.9 |66.8 |

|ALBERT-large |85.7 |91.8/85.2 |84.9/81.8 |86.5 |94.9 |75.2 |

|ALBERT-xlarge |87.9 |92.9/86.4 |87.9/84.1 |87.9 |95.4 |80.7 |

|ALBERT-xxlarge |90.9 |94.6/89.1 |89.8/86.9 |90.6 |96.8 |86.8 |

|**V1** | | | | | | |

|ALBERT-base |80.1 |89.3/82.3 | 80.0/77.1|81.6 |90.3 | 64.0 |

|ALBERT-large |82.4 |90.6/83.9 | 82.3/79.4|83.5 |91.7 | 68.5 |

|ALBERT-xlarge |85.5 |92.5/86.1 | 86.1/83.1|86.4 |92.4 | 74.8 |

|ALBERT-xxlarge |91.0 |94.8/89.3 | 90.2/87.4|90.8 |96.9 | 86.5 |

### BibTeX entry and citation info

```bibtex

@article{DBLP:journals/corr/abs-1909-11942,

author = {Zhenzhong Lan and

Mingda Chen and

Sebastian Goodman and

Kevin Gimpel and

Piyush Sharma and

Radu Soricut},

title = {{ALBERT:} {A} Lite {BERT} for Self-supervised Learning of Language

Representations},

journal = {CoRR},

volume = {abs/1909.11942},

year = {2019},

url = {http://arxiv.org/abs/1909.11942},

archivePrefix = {arXiv},

eprint = {1909.11942},

timestamp = {Fri, 27 Sep 2019 13:04:21 +0200},

biburl = {https://dblp.org/rec/journals/corr/abs-1909-11942.bib},

bibsource = {dblp computer science bibliography, https://dblp.org}

}

``` |

ashawkey/stable-zero123-diffusers | ashawkey | "2023-12-14T03:11:38Z" | 1,629,955 | 7 | diffusers | [

"diffusers",

"safetensors",

"arxiv:2303.11328",

"license:mit",

"diffusers:Zero123Pipeline",

"region:us"

] | null | "2023-12-14T03:04:01Z" | ---

license: mit

---

# Uses

_Note: This section is originally taken from the [Stable Diffusion v2 model card](https://huggingface.co/stabilityai/stable-diffusion-2), but applies in the same way to Zero-1-to-3._

## Direct Use

The model is intended for research purposes only. Possible research areas and tasks include:

- Safe deployment of large-scale models.

- Probing and understanding the limitations and biases of generative models.

- Generation of artworks and use in design and other artistic processes.

- Applications in educational or creative tools.

- Research on generative models.

Excluded uses are described below.

### Misuse, Malicious Use, and Out-of-Scope Use

The model should not be used to intentionally create or disseminate images that create hostile or alienating environments for people. This includes generating images that people would foreseeably find disturbing, distressing, or offensive; or content that propagates historical or current stereotypes.

#### Out-of-Scope Use

The model was not trained to be factual or true representations of people or events, and therefore using the model to generate such content is out-of-scope for the abilities of this model.

#### Misuse and Malicious Use

Using the model to generate content that is cruel to individuals is a misuse of this model. This includes, but is not limited to:

- Generating demeaning, dehumanizing, or otherwise harmful representations of people or their environments, cultures, religions, etc.

- Intentionally promoting or propagating discriminatory content or harmful stereotypes.

- Impersonating individuals without their consent.

- Sexual content without consent of the people who might see it.

- Mis- and disinformation

- Representations of egregious violence and gore

- Sharing of copyrighted or licensed material in violation of its terms of use.

- Sharing content that is an alteration of copyrighted or licensed material in violation of its terms of use.

## Limitations and Bias

### Limitations

- The model does not achieve perfect photorealism.

- The model cannot render legible text.

- Faces and people in general may not be parsed or generated properly.

- The autoencoding part of the model is lossy.

- Stable Diffusion was trained on a subset of the large-scale dataset [LAION-5B](https://laion.ai/blog/laion-5b/), which contains adult, violent and sexual content. To partially mitigate this, Stability AI has filtered the dataset using LAION's NSFW detector.

- Zero-1-to-3 was subsequently finetuned on a subset of the large-scale dataset [Objaverse](https://objaverse.allenai.org/), which might also potentially contain inappropriate content. To partially mitigate this, our demo applies a safety check to every uploaded image.

### Bias

While the capabilities of image generation models are impressive, they can also reinforce or exacerbate social biases.

Stable Diffusion was primarily trained on subsets of [LAION-2B(en)](https://laion.ai/blog/laion-5b/), which consists of images that are limited to English descriptions.

Images and concepts from communities and cultures that use other languages are likely to be insufficiently accounted for.

This affects the overall output of the model, as Western cultures are often overrepresented.

Stable Diffusion mirrors and exacerbates biases to such a degree that viewer discretion must be advised irrespective of the input or its intent.

### Safety Module

The intended use of this model is with the [Safety Checker](https://github.com/huggingface/diffusers/blob/main/src/diffusers/pipelines/stable_diffusion/safety_checker.py) in Diffusers.

This checker works by checking model inputs against known hard-coded NSFW concepts.

Specifically, the checker compares the class probability of harmful concepts in the embedding space of the uploaded input images.

The concepts are passed into the model with the image and compared to a hand-engineered weight for each NSFW concept.
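
The comparison described above can be pictured with a small sketch. It assumes the check reduces to a cosine similarity between an image embedding and each concept embedding, compared against a per-concept threshold; the names `flag_image`, `concept_a` and `concept_b` and all numbers are made up for illustration and are not taken from the Diffusers implementation.

```python
import math

def cosine_similarity(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def flag_image(image_embedding, concept_embeddings, thresholds):
    """Return the names of concepts whose similarity exceeds their threshold."""
    flagged = []
    for name, concept in concept_embeddings.items():
        score = cosine_similarity(image_embedding, concept)
        if score > thresholds[name]:
            flagged.append(name)
    return flagged

# Toy 2-D embeddings and hand-picked thresholds (hypothetical values).
concepts = {"concept_a": [1.0, 0.0], "concept_b": [0.0, 1.0]}
thresholds = {"concept_a": 0.8, "concept_b": 0.8}
result = flag_image([0.9, 0.1], concepts, thresholds)
```

In this toy setup an image is rejected as soon as at least one concept is flagged.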

## Citation

```

@misc{liu2023zero1to3,

title={Zero-1-to-3: Zero-shot One Image to 3D Object},

author={Ruoshi Liu and Rundi Wu and Basile Van Hoorick and Pavel Tokmakov and Sergey Zakharov and Carl Vondrick},

year={2023},

eprint={2303.11328},

archivePrefix={arXiv},

primaryClass={cs.CV}

}

```

|

cross-encoder/nli-roberta-base | cross-encoder | "2021-08-05T08:41:05Z" | 1,617,760 | 12 | transformers | [

"transformers",

"pytorch",

"jax",

"roberta",

"text-classification",

"roberta-base",

"zero-shot-classification",

"en",

"dataset:multi_nli",

"dataset:snli",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | zero-shot-classification | "2022-03-02T23:29:05Z" | ---

language: en

pipeline_tag: zero-shot-classification

tags:

- roberta-base

datasets:

- multi_nli

- snli

metrics:

- accuracy

license: apache-2.0

---

# Cross-Encoder for Natural Language Inference

This model was trained using [SentenceTransformers](https://sbert.net) [Cross-Encoder](https://www.sbert.net/examples/applications/cross-encoder/README.html) class.

## Training Data

The model was trained on the [SNLI](https://nlp.stanford.edu/projects/snli/) and [MultiNLI](https://cims.nyu.edu/~sbowman/multinli/) datasets. For a given sentence pair, it will output three scores corresponding to the labels: contradiction, entailment, neutral.

## Performance

For evaluation results, see [SBERT.net - Pretrained Cross-Encoder](https://www.sbert.net/docs/pretrained_cross-encoders.html#nli).

## Usage

Pre-trained models can be used like this:

```python

from sentence_transformers import CrossEncoder

model = CrossEncoder('cross-encoder/nli-roberta-base')

scores = model.predict([('A man is eating pizza', 'A man eats something'), ('A black race car starts up in front of a crowd of people.', 'A man is driving down a lonely road.')])

#Convert scores to labels

label_mapping = ['contradiction', 'entailment', 'neutral']

labels = [label_mapping[score_max] for score_max in scores.argmax(axis=1)]

```
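
If probabilities are preferred over raw scores, a softmax can be applied to each row of three scores. The sketch below assumes the scores are unnormalized logits and uses hypothetical values instead of calling the model.

```python
import math

def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

label_mapping = ['contradiction', 'entailment', 'neutral']
example_scores = [-2.0, 3.0, 0.5]  # hypothetical scores for one sentence pair
probs = softmax(example_scores)
best = label_mapping[probs.index(max(probs))]
print(best, [round(p, 3) for p in probs])
```

The predicted label is the same as with `argmax` on the raw scores; the softmax only makes the values easier to read as confidences.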

## Usage with Transformers AutoModel

You can also use the model directly with the Transformers library (without the SentenceTransformers library):

```python

from transformers import AutoTokenizer, AutoModelForSequenceClassification

import torch

model = AutoModelForSequenceClassification.from_pretrained('cross-encoder/nli-roberta-base')

tokenizer = AutoTokenizer.from_pretrained('cross-encoder/nli-roberta-base')

features = tokenizer(['A man is eating pizza', 'A black race car starts up in front of a crowd of people.'], ['A man eats something', 'A man is driving down a lonely road.'], padding=True, truncation=True, return_tensors="pt")

model.eval()

with torch.no_grad():

scores = model(**features).logits

label_mapping = ['contradiction', 'entailment', 'neutral']

labels = [label_mapping[score_max] for score_max in scores.argmax(dim=1)]

print(labels)

```

## Zero-Shot Classification

This model can also be used for zero-shot-classification:

```python

from transformers import pipeline

classifier = pipeline("zero-shot-classification", model='cross-encoder/nli-roberta-base')

sent = "Apple just announced the newest iPhone X"

candidate_labels = ["technology", "sports", "politics"]

res = classifier(sent, candidate_labels)

print(res)

``` |

microsoft/layoutlm-base-uncased | microsoft | "2024-04-16T12:16:49Z" | 1,607,026 | 37 | transformers | [

"transformers",

"pytorch",

"tf",

"safetensors",

"layoutlm",

"en",

"arxiv:1912.13318",

"license:mit",

"endpoints_compatible",

"region:us"

] | null | "2022-03-02T23:29:05Z" | ---

language: en

license: mit

---

# LayoutLM

**Multimodal (text + layout/format + image) pre-training for document AI**

[Microsoft Document AI](https://www.microsoft.com/en-us/research/project/document-ai/) | [GitHub](https://aka.ms/layoutlm)

## Model description

LayoutLM is a simple but effective pre-training method of text and layout for document image understanding and information extraction tasks, such as form understanding and receipt understanding. LayoutLM achieves SOTA results on multiple datasets. For more details, please refer to our paper:

[LayoutLM: Pre-training of Text and Layout for Document Image Understanding](https://arxiv.org/abs/1912.13318)

Yiheng Xu, Minghao Li, Lei Cui, Shaohan Huang, Furu Wei, Ming Zhou, [KDD 2020](https://www.kdd.org/kdd2020/accepted-papers)

## Training data

We pre-train LayoutLM on IIT-CDIP Test Collection 1.0\* dataset with two settings.

* LayoutLM-Base, Uncased (11M documents, 2 epochs): 12-layer, 768-hidden, 12-heads, 113M parameters **(This Model)**

* LayoutLM-Large, Uncased (11M documents, 2 epochs): 24-layer, 1024-hidden, 16-heads, 343M parameters

## Citation

If you find LayoutLM useful in your research, please cite the following paper:

``` latex

@misc{xu2019layoutlm,

title={LayoutLM: Pre-training of Text and Layout for Document Image Understanding},

author={Yiheng Xu and Minghao Li and Lei Cui and Shaohan Huang and Furu Wei and Ming Zhou},

year={2019},

eprint={1912.13318},

archivePrefix={arXiv},

primaryClass={cs.CL}

}

```

|

gguichard/camembert-large-resaved | gguichard | "2024-04-02T11:23:04Z" | 1,551,153 | 0 | transformers | [

"transformers",

"pytorch",

"camembert",

"feature-extraction",

"endpoints_compatible",

"text-embeddings-inference",

"region:us"

] | feature-extraction | "2024-04-02T11:21:12Z" | Entry not found |

myshell-ai/MeloTTS-English | myshell-ai | "2024-03-01T17:34:55Z" | 1,532,614 | 58 | transformers | [

"transformers",

"text-to-speech",

"ko",

"license:mit",

"endpoints_compatible",

"region:us"

] | text-to-speech | "2024-02-29T14:52:43Z" | ---

license: mit

language:

- ko

pipeline_tag: text-to-speech

---

# MeloTTS

MeloTTS is a **high-quality multi-lingual** text-to-speech library by [MyShell.ai](https://myshell.ai). Supported languages include:

| Model card | Example |

| --- | --- |

| [English](https://huggingface.co/myshell-ai/MeloTTS-English-v2) (American) | [Link](https://myshell-public-repo-hosting.s3.amazonaws.com/myshellttsbase/examples/en/EN-US/speed_1.0/sent_000.wav) |

| [English](https://huggingface.co/myshell-ai/MeloTTS-English-v2) (British) | [Link](https://myshell-public-repo-hosting.s3.amazonaws.com/myshellttsbase/examples/en/EN-BR/speed_1.0/sent_000.wav) |

| [English](https://huggingface.co/myshell-ai/MeloTTS-English-v2) (Indian) | [Link](https://myshell-public-repo-hosting.s3.amazonaws.com/myshellttsbase/examples/en/EN_INDIA/speed_1.0/sent_000.wav) |

| [English](https://huggingface.co/myshell-ai/MeloTTS-English-v2) (Australian) | [Link](https://myshell-public-repo-hosting.s3.amazonaws.com/myshellttsbase/examples/en/EN-AU/speed_1.0/sent_000.wav) |

| [English](https://huggingface.co/myshell-ai/MeloTTS-English-v2) (Default) | [Link](https://myshell-public-repo-hosting.s3.amazonaws.com/myshellttsbase/examples/en/EN-Default/speed_1.0/sent_000.wav) |

| [Spanish](https://huggingface.co/myshell-ai/MeloTTS-Spanish) | [Link](https://myshell-public-repo-hosting.s3.amazonaws.com/myshellttsbase/examples/es/ES/speed_1.0/sent_000.wav) |

| [French](https://huggingface.co/myshell-ai/MeloTTS-French) | [Link](https://myshell-public-repo-hosting.s3.amazonaws.com/myshellttsbase/examples/fr/FR/speed_1.0/sent_000.wav) |

| [Chinese](https://huggingface.co/myshell-ai/MeloTTS-Chinese) (mix EN) | [Link](https://myshell-public-repo-hosting.s3.amazonaws.com/myshellttsbase/examples/zh/ZH/speed_1.0/sent_008.wav) |

| [Japanese](https://huggingface.co/myshell-ai/MeloTTS-Japanese) | [Link](https://myshell-public-repo-hosting.s3.amazonaws.com/myshellttsbase/examples/jp/JP/speed_1.0/sent_000.wav) |

| [Korean](https://huggingface.co/myshell-ai/MeloTTS-Korean/) | [Link](https://myshell-public-repo-hosting.s3.amazonaws.com/myshellttsbase/examples/kr/KR/speed_1.0/sent_000.wav) |

Some other features include:

- The Chinese speaker supports `mixed Chinese and English`.

- Fast enough for `CPU real-time inference`.

## Usage

### Without Installation

An unofficial [live demo](https://huggingface.co/spaces/mrfakename/MeloTTS) is hosted on Hugging Face Spaces.

#### Use it on MyShell

There are hundreds of TTS models on MyShell, many more than just MeloTTS. See examples [here](https://github.com/myshell-ai/MeloTTS/blob/main/docs/quick_use.md#use-melotts-without-installation).

More can be found at the widget center of [MyShell.ai](https://app.myshell.ai/robot-workshop).

### Install and Use Locally

Follow the installation steps [here](https://github.com/myshell-ai/MeloTTS/blob/main/docs/install.md#linux-and-macos-install) before using the following snippet:

```python

from melo.api import TTS

# Speed is adjustable

speed = 1.0

# CPU is sufficient for real-time inference.

# You can set it manually to 'cpu' or 'cuda' or 'cuda:0' or 'mps'

device = 'auto' # Will automatically use GPU if available

# English

text = "Did you ever hear a folk tale about a giant turtle?"

model = TTS(language='EN', device=device)

speaker_ids = model.hps.data.spk2id

# American accent

output_path = 'en-us.wav'

model.tts_to_file(text, speaker_ids['EN-US'], output_path, speed=speed)

# British accent

output_path = 'en-br.wav'

model.tts_to_file(text, speaker_ids['EN-BR'], output_path, speed=speed)

# Indian accent

output_path = 'en-india.wav'

model.tts_to_file(text, speaker_ids['EN_INDIA'], output_path, speed=speed)

# Australian accent

output_path = 'en-au.wav'

model.tts_to_file(text, speaker_ids['EN-AU'], output_path, speed=speed)

# Default accent

output_path = 'en-default.wav'

model.tts_to_file(text, speaker_ids['EN-Default'], output_path, speed=speed)

```

## Join the Community

**Open Source AI Grant**

We are actively sponsoring open-source AI projects. The sponsorship includes GPU resources, funding and intellectual support (collaboration with top research labs). We welcome both research and engineering projects, as long as the open-source community needs them. Please contact [Zengyi Qin](https://www.qinzy.tech/) if you are interested.

**Contributing**

If you find this work useful, please consider contributing to the GitHub [repo](https://github.com/myshell-ai/MeloTTS).

- Many thanks to [@fakerybakery](https://github.com/fakerybakery) for adding the Web UI and CLI part.

## License

This library is under MIT License, which means it is free for both commercial and non-commercial use.

## Acknowledgements

This implementation is based on [TTS](https://github.com/coqui-ai/TTS), [VITS](https://github.com/jaywalnut310/vits), [VITS2](https://github.com/daniilrobnikov/vits2) and [Bert-VITS2](https://github.com/fishaudio/Bert-VITS2). We appreciate their awesome work.

|

Ashishkr/query_wellformedness_score | Ashishkr | "2024-03-30T11:51:12Z" | 1,518,872 | 26 | transformers | [

"transformers",

"pytorch",

"jax",

"safetensors",

"roberta",

"text-classification",

"dataset:google_wellformed_query",

"doi:10.57967/hf/1980",

"license:apache-2.0",

"autotrain_compatible",

"region:us"

] | text-classification | "2022-03-02T23:29:05Z" | ---

license: apache-2.0

inference: false

datasets: google_wellformed_query

---

```DOI

@misc {ashish_kumar_2024,

author = { {Ashish Kumar} },

title = { query_wellformedness_score (Revision 55a424c) },

year = 2024,

url = { https://huggingface.co/Ashishkr/query_wellformedness_score },

doi = { 10.57967/hf/1980 },

publisher = { Hugging Face }

}

```

**Intended Use Cases**

*Content Creation*: Validate the well-formedness of written content.

*Educational Platforms*: Helps students check the grammaticality of their sentences.

*Chatbots & Virtual Assistants*: To validate user queries or generate well-formed responses.

**contact: [email protected]**

**Model name**: Query Wellformedness Scoring

**Description** : Evaluate the well-formedness of sentences by checking grammatical correctness and completeness. Sensitive to case and penalizes sentences for incorrect grammar and case.

**Features**:

- *Wellformedness Score*: Provides a score indicating grammatical correctness and completeness.

- *Case Sensitivity*: Recognizes and penalizes incorrect casing in sentences.

- *Broad Applicability*: Can be used on a wide range of sentences.

**Example**:

1. Dogs are mammals.

2. she loves to read books on history.

3. When the rain in Spain.

4. Eating apples are healthy for you.

5. The Eiffel Tower is in Paris.

Among these sentences:

Sentences 1 and 5 are well-formed and have correct grammar and case.

Sentence 2 starts with a lowercase letter.

Sentence 3 is a fragment and is not well-formed.

Sentence 4 has a subject-verb agreement error.

**example_usage:**

*library: HuggingFace transformers*

```python

import torch

from transformers import AutoTokenizer, AutoModelForSequenceClassification

tokenizer = AutoTokenizer.from_pretrained("Ashishkr/query_wellformedness_score")

model = AutoModelForSequenceClassification.from_pretrained("Ashishkr/query_wellformedness_score")

sentences = [

"The quarterly financial report are showing an increase.", # Incorrect

"Him has completed the audit for last fiscal year.", # Incorrect

"Please to inform the board about the recent developments.", # Incorrect

"The team successfully achieved all its targets for the last quarter.", # Correct

"Our company is exploring new ventures in the European market." # Correct

]

features = tokenizer(sentences, padding=True, truncation=True, return_tensors="pt")

model.eval()

with torch.no_grad():

scores = model(**features).logits

print(scores)

```

Cite Ashishkr/query_wellformedness_score

|

microsoft/layoutlmv2-base-uncased | microsoft | "2022-09-16T03:40:56Z" | 1,488,813 | 48 | transformers | [

"transformers",

"pytorch",

"layoutlmv2",

"en",

"arxiv:2012.14740",

"license:cc-by-nc-sa-4.0",

"endpoints_compatible",

"region:us"

] | null | "2022-03-02T23:29:05Z" | ---

language: en

license: cc-by-nc-sa-4.0

---

# LayoutLMv2

**Multimodal (text + layout/format + image) pre-training for document AI**

The documentation of this model in the Transformers library can be found [here](https://huggingface.co/docs/transformers/model_doc/layoutlmv2).

[Microsoft Document AI](https://www.microsoft.com/en-us/research/project/document-ai/) | [GitHub](https://github.com/microsoft/unilm/tree/master/layoutlmv2)

## Introduction

LayoutLMv2 is an improved version of LayoutLM with new pre-training tasks to model the interaction among text, layout, and image in a single multi-modal framework. It outperforms strong baselines and achieves new state-of-the-art results on a wide variety of downstream visually-rich document understanding tasks, including FUNSD (0.7895 → 0.8420), CORD (0.9493 → 0.9601), SROIE (0.9524 → 0.9781), Kleister-NDA (0.834 → 0.852), RVL-CDIP (0.9443 → 0.9564), and DocVQA (0.7295 → 0.8672).

[LayoutLMv2: Multi-modal Pre-training for Visually-Rich Document Understanding](https://arxiv.org/abs/2012.14740)

Yang Xu, Yiheng Xu, Tengchao Lv, Lei Cui, Furu Wei, Guoxin Wang, Yijuan Lu, Dinei Florencio, Cha Zhang, Wanxiang Che, Min Zhang, Lidong Zhou, ACL 2021

|

vennify/t5-base-grammar-correction | vennify | "2022-01-14T16:35:23Z" | 1,464,699 | 138 | transformers | [

"transformers",

"pytorch",

"t5",

"text2text-generation",

"grammar",

"en",

"dataset:jfleg",

"arxiv:1702.04066",

"license:cc-by-nc-sa-4.0",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

] | text2text-generation | "2022-03-02T23:29:05Z" | ---

language: en

tags:

- grammar

- text2text-generation

license: cc-by-nc-sa-4.0

datasets:

- jfleg

---

# T5 Grammar Correction

This model generates a revised version of inputted text with the goal of containing fewer grammatical errors.

It was trained with [Happy Transformer](https://github.com/EricFillion/happy-transformer)

using a dataset called [JFLEG](https://arxiv.org/abs/1702.04066). Here's a [full article](https://www.vennify.ai/fine-tune-grammar-correction/) on how to train a similar model.

## Usage

`pip install happytransformer`

```python

from happytransformer import HappyTextToText, TTSettings

happy_tt = HappyTextToText("T5", "vennify/t5-base-grammar-correction")

args = TTSettings(num_beams=5, min_length=1)

# Add the prefix "grammar: " before each input

result = happy_tt.generate_text("grammar: This sentences has has bads grammar.", args=args)

print(result.text) # This sentence has bad grammar.

``` |

unikei/t5-base-split-and-rephrase | unikei | "2024-01-26T10:15:16Z" | 1,432,413 | 14 | transformers | [

"transformers",

"pytorch",

"t5",

"text2text-generation",

"split and rephrase",

"en",

"dataset:wiki_split",

"dataset:web_split",

"license:bigscience-openrail-m",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

] | text2text-generation | "2023-05-19T11:25:06Z" | ---

license: bigscience-openrail-m

tags:

- split and rephrase

widget:

- text: >-

Cystic Fibrosis (CF) is an autosomal recessive disorder that affects

multiple organs, which is common in the Caucasian population,

symptomatically affecting 1 in 2500 newborns in the UK, and more than 80,000

individuals globally.

datasets:

- wiki_split

- web_split

language:

- en

---

# T5 model for splitting complex sentences to simple sentences in English

Split-and-rephrase is the task of splitting a complex input sentence into shorter sentences while preserving meaning. (Narayan et al., 2017)

E.g.:

```

Cystic Fibrosis (CF) is an autosomal recessive disorder that affects multiple organs,

which is common in the Caucasian population, symptomatically affecting 1 in 2500 newborns in the UK,

and more than 80,000 individuals globally.

```

could be split into

```

Cystic Fibrosis is an autosomal recessive disorder that affects multiple organs.

```

```

Cystic Fibrosis is common in the Caucasian population.

```

```

Cystic Fibrosis affects 1 in 2500 newborns in the UK.

```

```

Cystic Fibrosis affects more than 80,000 individuals globally.

```

## How to use it in your code:

```python

from transformers import T5Tokenizer, T5ForConditionalGeneration

checkpoint="unikei/t5-base-split-and-rephrase"

tokenizer = T5Tokenizer.from_pretrained(checkpoint)

model = T5ForConditionalGeneration.from_pretrained(checkpoint)

# Adjacent string literals are concatenated, so no line-continuation backslashes are needed
complex_sentence = (
    "Cystic Fibrosis (CF) is an autosomal recessive disorder that "
    "affects multiple organs, which is common in the Caucasian "
    "population, symptomatically affecting 1 in 2500 newborns in "
    "the UK, and more than 80,000 individuals globally."
)

# Tokenize the input, padding/truncating it to 256 tokens
complex_tokenized = tokenizer(complex_sentence,
                              padding="max_length",
                              truncation=True,
                              max_length=256,
                              return_tensors='pt')

# Generate the simplified text with beam search (5 beams)
simple_tokenized = model.generate(complex_tokenized['input_ids'],
                                  attention_mask=complex_tokenized['attention_mask'],
                                  max_length=256,
                                  num_beams=5)

simple_sentences = tokenizer.batch_decode(simple_tokenized, skip_special_tokens=True)

print(simple_sentences)

"""

Output:

Cystic Fibrosis is an autosomal recessive disorder that affects multiple organs. Cystic Fibrosis is common in the Caucasian population. Cystic Fibrosis affects 1 in 2500 newborns in the UK. Cystic Fibrosis affects more than 80,000 individuals globally.

"""

```
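
In the output shown above, all simple sentences come back as one string per input. If a list of individual sentences is more convenient, a minimal sketch along the following lines may help. The helper name `split_into_sentences` is hypothetical (it is not part of the model or of `transformers`), and the regular expression is a naive assumption: it splits after `.`, `!` or `?` when followed by whitespace and an uppercase letter or digit, so abbreviations such as "Dr. Smith" would be split incorrectly.

```python
import re
from typing import List


def split_into_sentences(generated_text: str) -> List[str]:
    """Naively split a decoded model output into individual sentences.

    Hypothetical helper: splits after '.', '!' or '?' when the next
    non-space character is an uppercase letter or a digit.
    """
    parts = re.split(r"(?<=[.!?])\s+(?=[A-Z0-9])", generated_text.strip())
    # Drop empty fragments (e.g. when the input is an empty string)
    return [part for part in parts if part]


example_output = (
    "Cystic Fibrosis is an autosomal recessive disorder that affects multiple organs. "
    "Cystic Fibrosis is common in the Caucasian population. "
    "Cystic Fibrosis affects 1 in 2500 newborns in the UK. "
    "Cystic Fibrosis affects more than 80,000 individuals globally."
)

for sentence in split_into_sentences(example_output):
    print(sentence)
```

With the snippet above, this could be applied as `split_into_sentences(simple_sentences[0])`. For anything beyond a quick experiment, a dedicated sentence segmenter is likely a safer choice than this regular expression.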

|

sentence-transformers/distiluse-base-multilingual-cased-v2 | sentence-transformers | "2024-03-27T10:31:01Z" | 1,431,195 | 142 | sentence-transformers | [

"sentence-transformers",

"pytorch",

"tf",

"safetensors",

"distilbert",

"feature-extraction",

"sentence-similarity",

"multilingual",

"ar",

"bg",

"ca",

"cs",

"da",

"de",

"el",

"en",

"es",

"et",

"fa",

"fi",

"fr",

"gl",

"gu",

"he",

"hi",

"hr",

"hu",

"hy",

"id",

"it",

"ja",

"ka",

"ko",

"ku",

"lt",

"lv",

"mk",

"mn",

"mr",

"ms",

"my",

"nb",

"nl",

"pl",

"pt",

"ro",

"ru",

"sk",

"sl",

"sq",

"sr",

"sv",

"th",

"tr",

"uk",

"ur",

"vi",

"arxiv:1908.10084",

"license:apache-2.0",

"endpoints_compatible",

"region:us"

] | sentence-similarity | "2022-03-02T23:29:05Z" | ---

language:

- multilingual

- ar

- bg

- ca

- cs

- da

- de

- el

- en

- es

- et

- fa

- fi

- fr

- gl

- gu

- he

- hi

- hr

- hu

- hy

- id

- it

- ja

- ka

- ko

- ku

- lt

- lv

- mk

- mn

- mr

- ms

- my

- nb

- nl

- pl

- pt

- ro

- ru

- sk

- sl

- sq

- sr

- sv

- th

- tr

- uk

- ur

- vi

license: apache-2.0

library_name: sentence-transformers

tags:

- sentence-transformers

- feature-extraction

- sentence-similarity

language_bcp47:

- fr-ca

- pt-br

- zh-cn

- zh-tw

pipeline_tag: sentence-similarity

---

# sentence-transformers/distiluse-base-multilingual-cased-v2

This is a [sentence-transformers](https://www.SBERT.net) model: it maps sentences and paragraphs to a 512-dimensional dense vector space and can be used for tasks like clustering or semantic search.

## Usage (Sentence-Transformers)

Using this model becomes easy when you have [sentence-transformers](https://www.SBERT.net) installed:

```

pip install -U sentence-transformers

```

Then you can use the model like this:

```python

from sentence_transformers import SentenceTransformer

sentences = ["This is an example sentence", "Each sentence is converted"]

model = SentenceTransformer('sentence-transformers/distiluse-base-multilingual-cased-v2')

embeddings = model.encode(sentences)

print(embeddings)

```
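
For semantic search or clustering, the embeddings are typically compared with cosine similarity. The following is a minimal, dependency-free sketch of that comparison; the helper names `cosine_similarity` and `similarity_matrix` are hypothetical, and the toy vectors stand in for real embeddings so the snippet runs without downloading the model.

```python
import math
from typing import List, Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def similarity_matrix(embeddings: Sequence[Sequence[float]]) -> List[List[float]]:
    """Pairwise cosine similarities between all rows of `embeddings`."""
    return [[cosine_similarity(a, b) for b in embeddings] for a in embeddings]


# Toy 3-dimensional vectors (assumption: real embeddings from this model have 512 dimensions)
toy_embeddings = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [2.0, 0.0, 0.0]]
for row in similarity_matrix(toy_embeddings):
    print(row)
```

The same helper should accept the array returned by `model.encode(sentences)`, since it only iterates over rows and values. In practice, `sentence_transformers.util.cos_sim` offers a vectorized alternative.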

## Evaluation Results

For an automated evaluation of this model, see the *Sentence Embeddings Benchmark*: [https://seb.sbert.net](https://seb.sbert.net?model_name=sentence-transformers/distiluse-base-multilingual-cased-v2)

## Full Model Architecture

```

SentenceTransformer(

(0): Transformer({'max_seq_length': 128, 'do_lower_case': False}) with Transformer model: DistilBertModel

(1): Pooling({'word_embedding_dimension': 768, 'pooling_mode_cls_token': False, 'pooling_mode_mean_tokens': True, 'pooling_mode_max_tokens': False, 'pooling_mode_mean_sqrt_len_tokens': False})

(2): Dense({'in_features': 768, 'out_features': 512, 'bias': True, 'activation_function': 'torch.nn.modules.activation.Tanh'})

)

```
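
The three modules listed above can be read as a pipeline: token embeddings from the transformer are mean-pooled into one vector, which a dense layer with a Tanh activation then projects from 768 to 512 dimensions. The sketch below illustrates the pooling and projection steps in plain Python with toy dimensions; the function names `mean_pool` and `dense_tanh`, the weights, and the inputs are all illustrative assumptions, not the library's implementation.

```python
import math
from typing import List, Sequence


def mean_pool(token_embeddings: Sequence[Sequence[float]],
              attention_mask: Sequence[int]) -> List[float]:
    """Average the token vectors whose attention-mask entry is 1 (padding is skipped)."""
    dim = len(token_embeddings[0])
    summed = [0.0] * dim
    count = 0
    for vector, keep in zip(token_embeddings, attention_mask):
        if keep:
            count += 1
            for i, value in enumerate(vector):
                summed[i] += value
    count = max(count, 1)  # avoid division by zero for an all-padding input
    return [s / count for s in summed]


def dense_tanh(vector: Sequence[float],
               weight: Sequence[Sequence[float]],
               bias: Sequence[float]) -> List[float]:
    """Linear projection followed by Tanh; `weight` has shape (out_features, in_features)."""
    return [
        math.tanh(sum(w * x for w, x in zip(row, vector)) + b)
        for row, b in zip(weight, bias)
    ]


# Toy example: 3 tokens (the last one is padding), 2 input dimensions, 1 output dimension
tokens = [[1.0, 3.0], [3.0, 5.0], [100.0, 100.0]]
mask = [1, 1, 0]
pooled = mean_pool(tokens, mask)
sentence_embedding = dense_tanh(pooled, weight=[[0.5, 0.0]], bias=[-1.0])
print(pooled, sentence_embedding)
```

Because of the final Tanh, every component of the resulting sentence embedding lies between -1 and 1.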

## Citing & Authors

This model was trained by [sentence-transformers](https://www.sbert.net/).

If you find this model helpful, feel free to cite our publication [Sentence-BERT: Sentence Embeddings using Siamese BERT-Networks](https://arxiv.org/abs/1908.10084):

```bibtex

@inproceedings{reimers-2019-sentence-bert,

title = "Sentence-BERT: Sentence Embeddings using Siamese BERT-Networks",

author = "Reimers, Nils and Gurevych, Iryna",

booktitle = "Proceedings of the 2019 Conference on Empirical Methods in Natural Language Processing",

month = "11",

year = "2019",

publisher = "Association for Computational Linguistics",

url = "http://arxiv.org/abs/1908.10084",

}

``` |

tiiuae/falcon-7b-instruct | tiiuae | "2023-09-29T14:32:23Z" | 1,428,959 | 879 | transformers | [

"transformers",

"pytorch",

"coreml",

"falcon",

"text-generation",

"custom_code",

"en",

"dataset:tiiuae/falcon-refinedweb",

"arxiv:2205.14135",

"arxiv:1911.02150",

"arxiv:2005.14165",

"arxiv:2104.09864",

"arxiv:2306.01116",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

] | text-generation | "2023-04-25T06:21:01Z" | ---

datasets:

- tiiuae/falcon-refinedweb

language:

- en

inference: true

widget:

- text: "Hey Falcon! Any recommendations for my holidays in Abu Dhabi?"

example_title: "Abu Dhabi Trip"

- text: "What's the Everett interpretation of quantum mechanics?"

example_title: "Q/A: Quantum & Answers"

- text: "Give me a list of the top 10 dive sites you would recommend around the world."

example_title: "Diving Top 10"

- text: "Can you tell me more about deep-water soloing?"

example_title: "Extreme sports"

- text: "Can you write a short tweet about the Apache 2.0 release of our latest AI model, Falcon LLM?"

example_title: "Twitter Helper"

- text: "What are the responsibilities of a Chief Llama Officer?"

example_title: "Trendy Jobs"

license: apache-2.0

---

# ✨ Falcon-7B-Instruct

**Falcon-7B-Instruct is a 7B-parameter causal decoder-only model built by [TII](https://www.tii.ae) based on [Falcon-7B](https://huggingface.co/tiiuae/falcon-7b) and finetuned on a mixture of chat/instruct datasets. It is made available under the Apache 2.0 license.**

*Paper coming soon 😊.*

🤗 To get started with Falcon (inference, finetuning, quantization, etc.), we recommend reading [this great blog post from HF](https://huggingface.co/blog/falcon)!

## Why use Falcon-7B-Instruct?

* **You are looking for a ready-to-use chat/instruct model based on [Falcon-7B](https://huggingface.co/tiiuae/falcon-7b).**

* **Falcon-7B is a strong base model, outperforming comparable open-source models** (e.g., [MPT-7B](https://huggingface.co/mosaicml/mpt-7b), [StableLM](https://github.com/Stability-AI/StableLM), [RedPajama](https://huggingface.co/togethercomputer/RedPajama-INCITE-Base-7B-v0.1) etc.), thanks to being trained on 1,500B tokens of [RefinedWeb](https://huggingface.co/datasets/tiiuae/falcon-refinedweb) enhanced with curated corpora. See the [OpenLLM Leaderboard](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard).

* **It features an architecture optimized for inference**, with FlashAttention ([Dao et al., 2022](https://arxiv.org/abs/2205.14135)) and multiquery attention ([Shazeer et al., 2019](https://arxiv.org/abs/1911.02150)).

💬 **This is an instruct model, which may not be ideal for further finetuning.** If you are interested in building your own instruct/chat model, we recommend starting from [Falcon-7B](https://huggingface.co/tiiuae/falcon-7b).

🔥 **Looking for an even more powerful model?** [Falcon-40B-Instruct](https://huggingface.co/tiiuae/falcon-40b-instruct) is Falcon-7B-Instruct's big brother!

```python

from transformers import AutoTokenizer, AutoModelForCausalLM

import transformers

import torch

model = "tiiuae/falcon-7b-instruct"

tokenizer = AutoTokenizer.from_pretrained(model)

pipeline = transformers.pipeline(

"text-generation",

model=model,