Thank you for supporting my work.

https://www.buymeacoffee.com/bdsqlsz

The supporter list is shown on the main page.

Support List

DiamondShark

Yashamon

t4ggno

Someone

kgmkm_mkgm

yacong

Pre-trained models and output samples of ControlNet-LLLite from bdsqlsz

Inference with ComfyUI: https://github.com/kohya-ss/ControlNet-LLLite-ComfyUI (use these nodes, not the standard ControlNet nodes!)

For AUTOMATIC1111's Web UI, the sd-webui-controlnet extension supports ControlNet-LLLite.

Training: https://github.com/kohya-ss/sd-scripts/blob/sdxl/docs/train_lllite_README.md

The recommended preprocessing for the animeface model is Anime-Face-Segmentation.

Models

Trained on an anime model

AnimeFaceSegment, Normal, T2i-Color/Shuffle, lineart_anime_denoise, recolor_luminance

Base model: Kohaku-XL

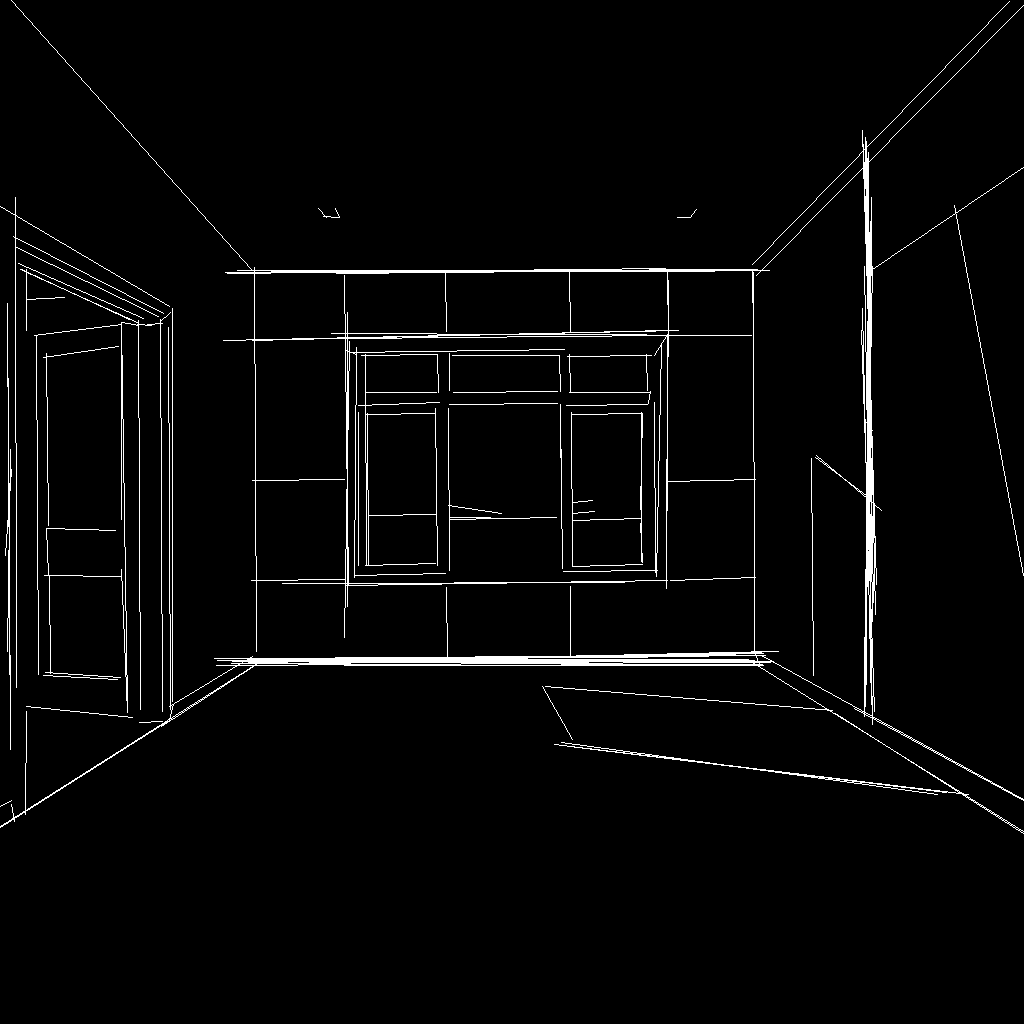

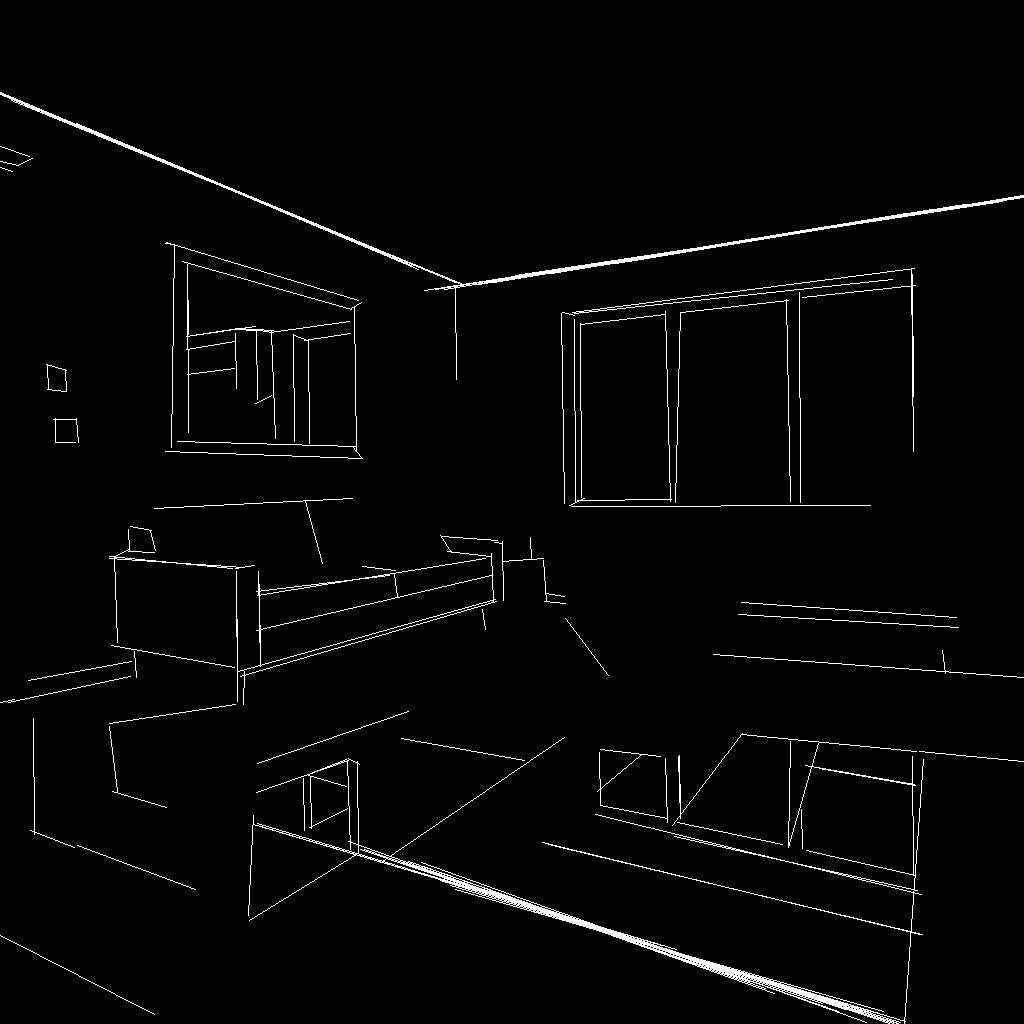

MLSD

Base model: ProtoVision XL - High Fidelity 3D

Japanese Introduction

https://note.com/kagami_kami/n/nf71099b6abe3

Thanks to kgmkm_mkgm for introducing and testing these ControlNet-LLLite models.

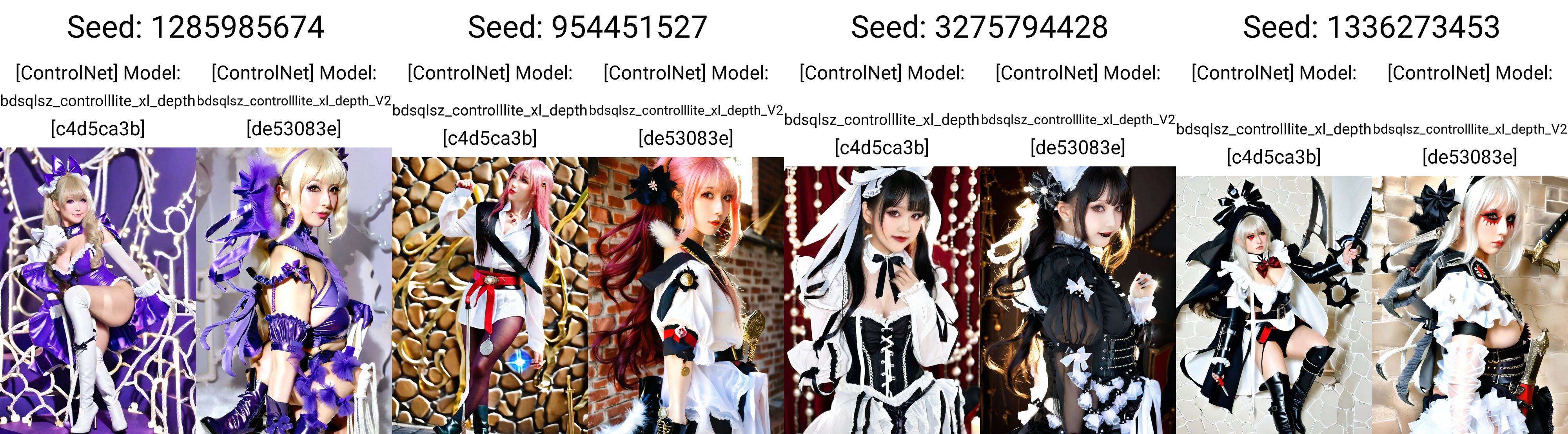

Samples

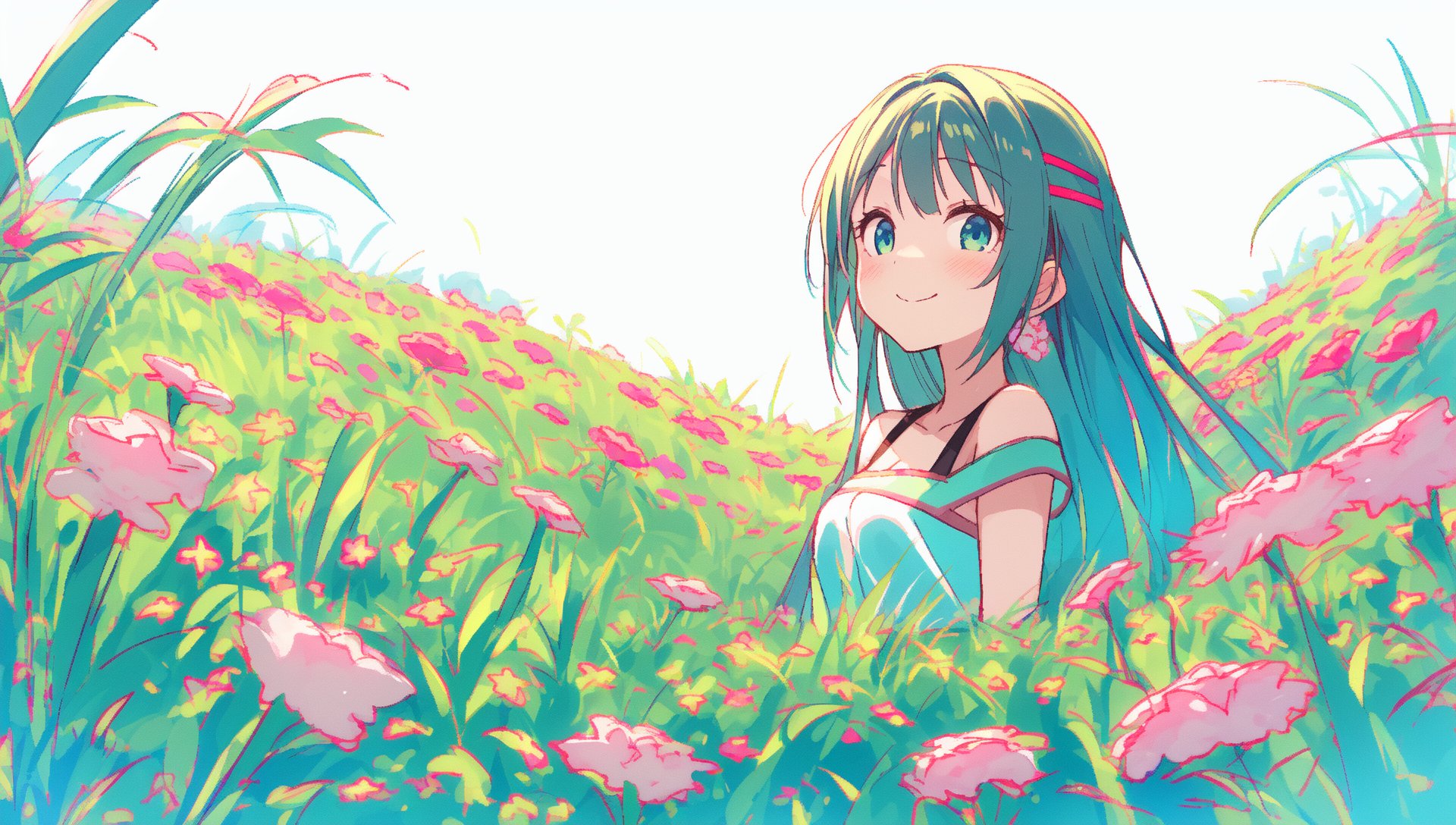

AnimeFaceSegmentV2

DepthV2_(Marigold)

MLSDV2

Normal_Dsine

T2i-Color/Shuffle

Lineart_Anime_Denoise

Recolor_Luminance

Canny

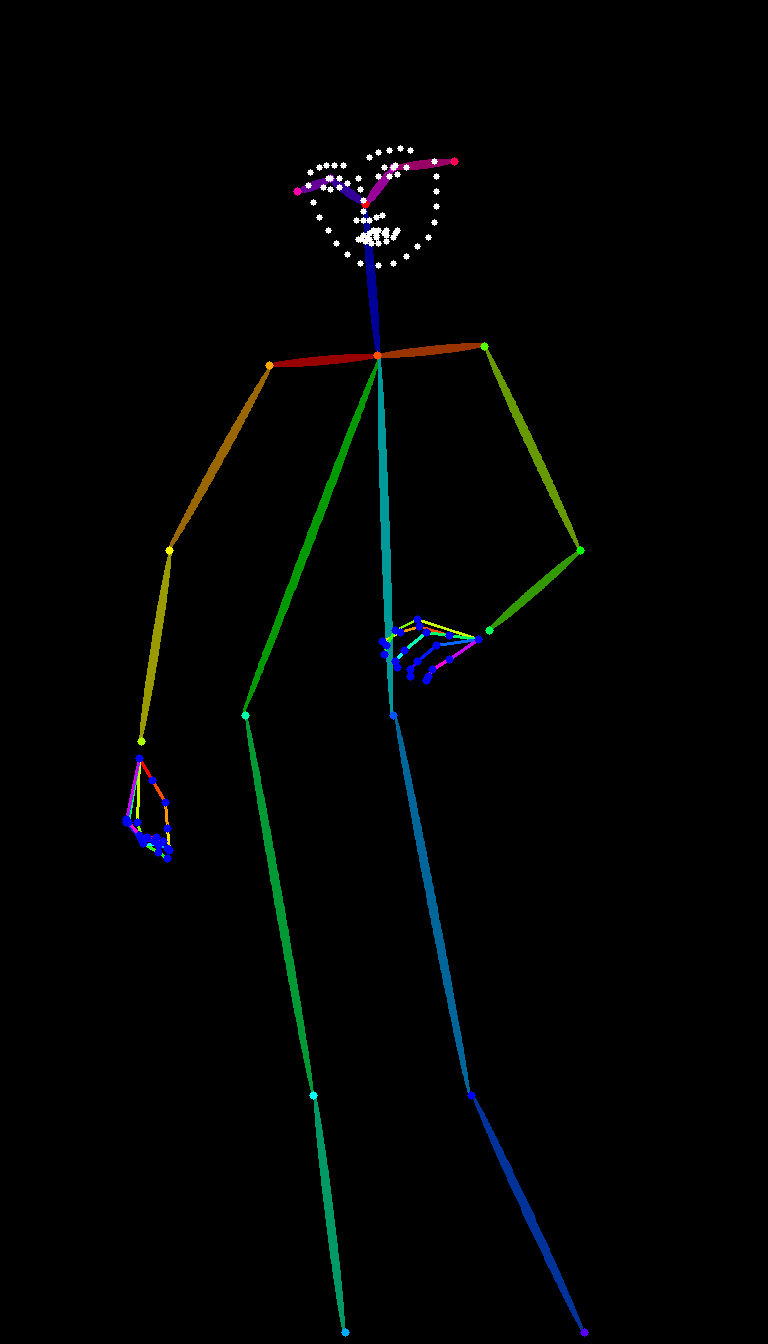

DW_OpenPose

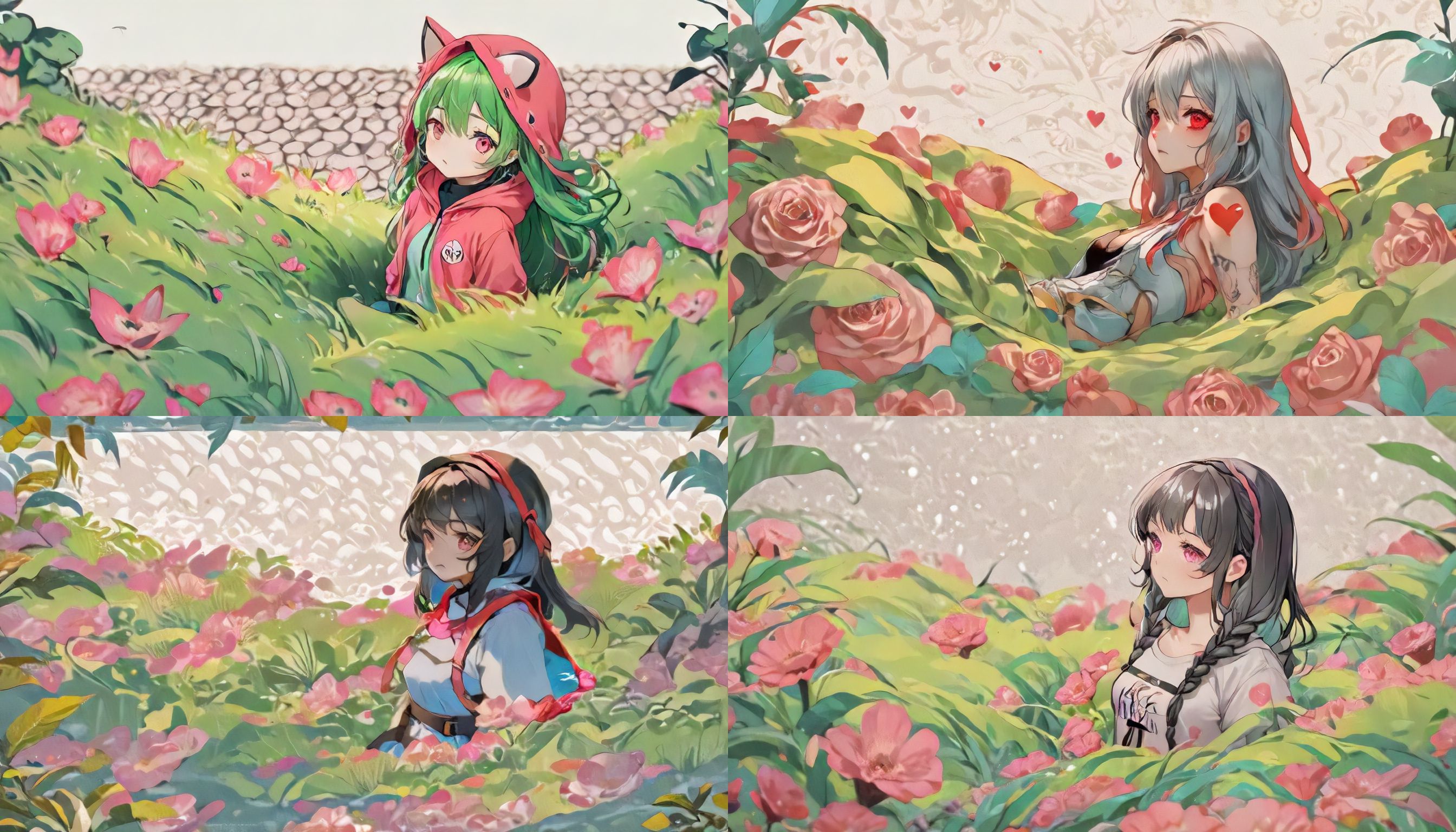

Tile_Anime

Unlike the other models, the tile model needs a brief explanation. In general, it has three uses:

- With no prompt at all, it restores the overall look of the reference image while slightly reworking local details. This can be used for V2V (Figure 2).

- With the weight set to 0.55~0.75, it keeps the original composition and pose while accepting changes from the prompt and LoRA (Figure 3).

- Combined with upscaling, it adds detail to each tile while keeping the result consistent (Figure 4).

Because the model was trained on an anime 2D/2.5D dataset, it currently does not repaint realistic photographic styles well; that will have to wait for the final version.
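The weight range given for the second use can be captured in a small helper. This is only a sketch: `TILE_WEIGHT_RANGE` and `clamp_tile_weight` are hypothetical names and are not part of ComfyUI or sd-webui-controlnet. The resulting value would still be entered by hand in whatever weight or strength control your UI exposes.

```python
# Hypothetical helper; the names are illustrative and not part of any UI or library.
# The 0.55-0.75 range is the one given above for prompt/LoRA-driven edits.
TILE_WEIGHT_RANGE = (0.55, 0.75)


def clamp_tile_weight(weight: float) -> float:
    """Clamp a requested ControlNet weight into the suggested range."""
    low, high = TILE_WEIGHT_RANGE
    return max(low, min(high, weight))


if __name__ == "__main__":
    for requested in (0.3, 0.6, 1.0):
        print(requested, "->", clamp_tile_weight(requested))
```

Values already inside the range pass through unchanged; anything outside is pulled to the nearest bound.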

Two versions, α and β, have been released so far. α covers the first and second uses above, and β covers the first and third.

The α version is for pose and composition transfer. It generalizes well and can be combined with other LoRAs.

The β version is for maintaining consistency and high-resolution upscaling. It is more sensitive to the conditioning image.

In short, α gives more weight to the prompt, while β gives more weight to the ControlNet.
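As a quick reference, the correspondence between versions and uses can be written as a lookup table. This is a minimal sketch; `VERSION_USES` and `versions_for_use` are hypothetical names, the keys `"alpha"` and `"beta"` stand in for α and β, and only the mapping itself comes from the description above.

```python
from typing import List

# Use numbers follow the order of the three tile uses described above:
# 1 = restore the reference without a prompt (V2V),
# 2 = keep composition/pose while accepting prompt and LoRA changes,
# 3 = add detail per tile when upscaling.
VERSION_USES = {
    "alpha": {1, 2},  # pose and composition transfer, prompt-leaning
    "beta": {1, 3},   # consistency and upscaling, ControlNet-leaning
}


def versions_for_use(use: int) -> List[str]:
    """Return the tile versions said to cover the given use (1, 2, or 3)."""
    if use not in (1, 2, 3):
        raise ValueError(f"unknown tile use: {use}")
    return sorted(name for name, uses in VERSION_USES.items() if use in uses)


if __name__ == "__main__":
    for use in (1, 2, 3):
        print(use, versions_for_use(use))
```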

Tile_Realistic

Thanks to all my supporters.

DiamondShark

Yashamon

t4ggno

Someone

kgmkm_mkgm

Even though I broke my foot last week, I still pushed through and finished training the realistic version.

You can compare it with the SD1.5 tile model below.

The base model is from the JuggernautXL series, so I recommend using one of those models or a merge with one.

Here is a comparison with other SDXL models.
