extra_gated_heading: Acknowledge to follow corresponding license to access the repository

extra_gated_button_content: Agree and access repository

extra_gated_fields:
  First Name: text
  Last Name: text
  Country: country
  Affiliation: text

license: cc-by-nc-4.0

[Homepage] | [Paper] | [Discord] | [Dataset] | [Github]

Welcome to the xLAM model family! Large Action Models (LAMs) are advanced large language models designed to enhance decision-making and translate user intentions into executable actions that interact with the world. LAMs autonomously plan and execute tasks to achieve specific goals, serving as the brains of AI agents. They have the potential to automate workflow processes across various domains, making them invaluable for a wide range of applications.

Model Summary

This repo provides the GGUF format of the xLAM-7b-fc-r model. The original model is available at xLAM-7b-fc-r. The model is designed for function composition and tool utilization tasks, providing fast, accurate, and structured responses based on the input queries and available tools. We use the llama.cpp framework to convert models to GGUF. GGUF model files offer advantages in interoperability, efficiency, scalability, flexibility, and ease of use, which makes them particularly valuable for deploying, sharing, and optimizing models across diverse platforms and hardware environments.

Model Series

We provide a series of xLAMs in different sizes to cater to various applications, including those optimized for function-calling and general agent applications:

| Model | # Total Params | Context Length | Download Model | Download GGUF files |
|---|---|---|---|---|
| xLAM-1b-fc-r | 1.35B | 16384 | 🤗 Link | 🤗 Link |
| xLAM-7b-fc-r | 6.91B | 4096 | 🤗 Link | 🤗 Link |

The fc series of models are optimized for function-calling capability, providing fast, accurate, and structured responses based on input queries and available APIs. These models are fine-tuned based on the deepseek-coder models and are designed to be small enough for deployment on personal devices like phones or computers.

See our paper and GitHub repo for more detailed analysis.

Repository Overview

This repository is focused on our small xLAM-7b-fc-r model, which is optimized for function-calling and can be easily deployed on personal devices.

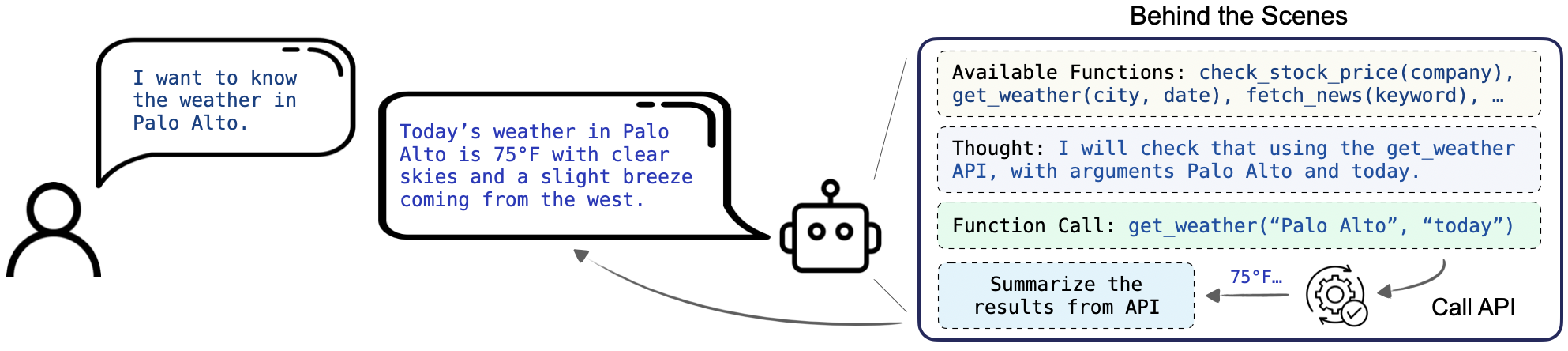

Function-calling, or tool use, is one of the key capabilities for AI agents. It requires the model not only to understand and generate human-like text but also to execute functional API calls based on natural language instructions. This extends the utility of LLMs beyond simple conversation tasks to dynamic interactions with a variety of digital services and applications, such as retrieving weather information, managing social media platforms, and handling financial services.
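To make the idea concrete, here is a minimal sketch of the application side of function calling: the model emits a structured call, and the host program looks up and runs the matching function. The `get_weather` stub, the `TOOL_REGISTRY` dict, and the `execute_tool_calls` helper are hypothetical names chosen for illustration and are not part of xLAM or any library. The call shape (`name` plus `arguments`) follows the format instruction used in the Usage examples of this README.

```python
from typing import Any, Callable, Dict, List


def get_weather(location: str, unit: str = "celsius") -> str:
    """Hypothetical stub; a real tool would call a weather API here."""
    return f"Weather for {location} requested in {unit}"


# Hypothetical registry mapping tool names to Python callables.
TOOL_REGISTRY: Dict[str, Callable[..., Any]] = {"get_weather": get_weather}


def execute_tool_calls(tool_calls: List[Dict[str, Any]]) -> List[Any]:
    """Run each call of the form {"name": ..., "arguments": {...}} in order."""
    results = []
    for call in tool_calls:
        func = TOOL_REGISTRY.get(call["name"])
        if func is None:
            raise KeyError(f"Unknown tool: {call['name']}")
        # Arguments are passed as keyword arguments to the registered function.
        results.append(func(**call.get("arguments", {})))
    return results


# Example call in the shape the model is asked to produce.
calls = [{"name": "get_weather", "arguments": {"location": "Tokyo"}}]
print(execute_tool_calls(calls))
```

Rejecting unknown tool names explicitly, rather than ignoring them, keeps a malformed model response from silently doing nothing.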

The instructions below will guide you through the setup, usage, and integration of xLAM-7b-fc-r-gguf with Hugging Face and llama.cpp.

How to download GGUF files

- Install Hugging Face CLI:

pip install "huggingface-hub>=0.17.1"

- Login to Hugging Face:

huggingface-cli login

- Download the GGUF model:

huggingface-cli download Salesforce/xLAM-7b-fc-r-gguf xLAM-7b-fc-r.Q2_K.gguf --local-dir . --local-dir-use-symlinks False
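The same download can also be scripted from Python. The sketch below assumes the `huggingface_hub` package is installed and uses its `hf_hub_download` function. The helper names `gguf_filename` and `download_gguf` are hypothetical, and the file-name pattern is inferred from the two file names that appear in this README (`Q2_K` and `Q8_0`); which other quantizations exist in the repository is not stated here.

```python
def gguf_filename(quant: str) -> str:
    """Build a file name following the pattern seen in this README."""
    return f"xLAM-7b-fc-r.{quant}.gguf"


def download_gguf(quant: str = "Q2_K", local_dir: str = ".") -> str:
    """Download one GGUF file and return its local path (needs network access)."""
    # Imported lazily so gguf_filename works without huggingface_hub installed.
    from huggingface_hub import hf_hub_download

    return hf_hub_download(
        repo_id="Salesforce/xLAM-7b-fc-r-gguf",
        filename=gguf_filename(quant),
        local_dir=local_dir,
    )


print(gguf_filename("Q2_K"))  # xLAM-7b-fc-r.Q2_K.gguf
```

Calling `download_gguf("Q8_0")` would fetch the file used in the command-line example, provided you have accepted the repository's gated-access terms and are logged in.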

Prompt template

You are an AI assistant for function calling.For politically sensitive questions, security and privacy issues, and other non-computer science questions, you will refuse to answer

### Instruction:

[BEGIN OF TASK INSTRUCTION]

{task_instruction}

[END OF TASK INSTRUCTION]

[BEGIN OF AVAILABLE TOOLS]

{xlam_format_tools}

[END OF AVAILABLE TOOLS]

[BEGIN OF FORMAT INSTRUCTION]

{format_instruction}

[END OF FORMAT INSTRUCTION]

[BEGIN OF QUERY]

{query}

[END OF QUERY]

### Response:

We highly recommend using our provided task_instruction, format_instruction, and tools format to achieve the best performance.

For more information, refer to prompt-documentation.
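As an illustration, the template above can be filled in programmatically. This is a minimal sketch, not the official prompt builder: `build_prompt` and `SYSTEM_PROMPT` are hypothetical names, the whitespace between sections mirrors the example prompts in the Usage section, and serializing the tools with `json.dumps` as a list is an assumption (the Usage examples show a single tool object).

```python
import json
from typing import Any, Dict, List

# Copied verbatim from the template, including its original spacing.
SYSTEM_PROMPT = (
    "You are an AI assistant for function calling.For politically sensitive questions, "
    "security and privacy issues, and other non-computer science questions, "
    "you will refuse to answer"
)


def build_prompt(
    task_instruction: str,
    format_instruction: str,
    tools: List[Dict[str, Any]],
    query: str,
) -> str:
    """Fill the prompt template with the four user-supplied parts."""
    tools_json = json.dumps(tools)  # Assumption: tools are serialized as a JSON list.
    return (
        f"{SYSTEM_PROMPT}\n### Instruction:\n"
        f"[BEGIN OF TASK INSTRUCTION]\n{task_instruction}\n[END OF TASK INSTRUCTION]\n\n"
        f"[BEGIN OF AVAILABLE TOOLS]\n{tools_json}\n[END OF AVAILABLE TOOLS]\n\n"
        f"[BEGIN OF FORMAT INSTRUCTION]\n{format_instruction}\n[END OF FORMAT INSTRUCTION]\n\n"
        f"[BEGIN OF QUERY]\n{query}\n[END OF QUERY]\n\n\n### Response:"
    )


# Placeholder inputs; use the recommended instructions for real prompts.
example_prompt = build_prompt(
    task_instruction="<task instruction text>",
    format_instruction="<format instruction text>",
    tools=[{"name": "get_weather", "description": "Get the current weather for a location"}],
    query="What's the weather forecast for Tokyo?",
)
print(example_prompt)
```

Keeping the four parts as separate arguments makes it easy to reuse the recommended task and format instructions while changing only the tools and the query.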

Usage

Command Line

- Install the llama.cpp framework from source here

- Run the inference task as shown below. To configure generation-related parameters, refer to llama.cpp

./llama-cli -m [PATH-TO-LOCAL-GGUF] -p "[PROMPT]"

- Example

./llama-cli -m xLAM-7b-fc-r.Q8_0.gguf -p "You are an AI assistant for function calling.For politically sensitive questions, security and privacy issues, and other non-computer science questions, you will refuse to answer\n### Instruction:\n[BEGIN OF TASK INSTRUCTION]\nYou are an expert in composing functions. You are given a question and a set of possible functions.\nBased on the question, you will need to make one or more function/tool calls to achieve the purpose.\nIf none of the functions can be used, point it out and refuse to answer.\nIf the given question lacks the parameters required by the function, also point it out.\n[END OF TASK INSTRUCTION]\n\n[BEGIN OF AVAILABLE TOOLS]\n{\"name\": \"get_weather\", \"description\": \"Get the current weather for a location\", \"parameters\": {\"location\": {\"type\": \"string\", \"description\": \"The city and state, e.g. San Francisco, CA\"}, \"unit\": {\"type\": \"string\", \"enum\": [\"celsius\", \"fahrenheit\"], \"description\": \"The unit of temperature to return\"}}}\n[END OF AVAILABLE TOOLS]\n\n[BEGIN OF FORMAT INSTRUCTION]\nThe output MUST strictly adhere to the following JSON format, and NO other text MUST be included.\nThe example format is as follows. Please make sure the parameter type is correct. If no function call is needed, please make tool_calls an empty list '[]'\n\`\`\`\n{\n \"tool_calls\": [\n {\"name\": \"func_name1\", \"arguments\": {\"argument1\": \"value1\", \"argument2\": \"value2\"}},\n ... (more tool calls as required)\n ]\n}\n\`\`\`\n[END OF FORMAT INSTRUCTION]\n\n[BEGIN OF QUERY]\nWhat's the weather forecast for Tokyo?\n[END OF QUERY]\n\n\n### Response:"

Python framework

- Install llama-cpp-python

pip install llama-cpp-python

- Refer to llama-cpp-API; here is an example:

from llama_cpp import Llama

llm = Llama(
    model_path="[PATH-TO-MODEL]"
)
output = llm(
    "You are an AI assistant for function calling.For politically sensitive questions, security and privacy issues, and other non-computer science questions, you will refuse to answer\n### Instruction:\n[BEGIN OF TASK INSTRUCTION]\nYou are an expert in composing functions. You are given a question and a set of possible functions.\nBased on the question, you will need to make one or more function/tool calls to achieve the purpose.\nIf none of the functions can be used, point it out and refuse to answer.\nIf the given question lacks the parameters required by the function, also point it out.\n[END OF TASK INSTRUCTION]\n\n[BEGIN OF AVAILABLE TOOLS]\n{\"name\": \"get_weather\", \"description\": \"Get the current weather for a location\", \"parameters\": {\"location\": {\"type\": \"string\", \"description\": \"The city and state, e.g. San Francisco, CA\"}, \"unit\": {\"type\": \"string\", \"enum\": [\"celsius\", \"fahrenheit\"], \"description\": \"The unit of temperature to return\"}}}\n[END OF AVAILABLE TOOLS]\n\n[BEGIN OF FORMAT INSTRUCTION]\nThe output MUST strictly adhere to the following JSON format, and NO other text MUST be included.\nThe example format is as follows. Please make sure the parameter type is correct. If no function call is needed, please make tool_calls an empty list '[]'\n```\n{\n \"tool_calls\": [\n {\"name\": \"func_name1\", \"arguments\": {\"argument1\": \"value1\", \"argument2\": \"value2\"}},\n ... (more tool calls as required)\n ]\n}\n```\n[END OF FORMAT INSTRUCTION]\n\n[BEGIN OF QUERY]\nWhat's the weather forecast for Tokyo?\n[END OF QUERY]\n\n\n### Response:",
    echo=True  # Echo the prompt back in the output
)  # Generate a completion, can also call create_completion
print(output)
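The printed `output` is a completion dictionary whose generated text sits under `output["choices"][0]["text"]`. The following is a minimal sketch of pulling the `tool_calls` list out of that text, assuming the model followed the format instruction. `parse_tool_calls` and `RESPONSE_MARKER` are hypothetical names, and the sample string is hand-written for illustration rather than real model output.

```python
import json
from typing import Any, Dict, List

RESPONSE_MARKER = "### Response:"


def parse_tool_calls(completion_text: str) -> List[Dict[str, Any]]:
    """Return the tool_calls list found in raw completion text."""
    # With echo=True the prompt precedes the answer, so keep only the text
    # after the last response marker (the whole text if the marker is absent).
    response = completion_text.rpartition(RESPONSE_MARKER)[2]
    # Take the outermost {...} span so surrounding whitespace or code fences are ignored.
    start, end = response.find("{"), response.rfind("}")
    if start == -1 or end < start:
        raise ValueError("No JSON object found in the model response")
    payload = json.loads(response[start : end + 1])
    return payload.get("tool_calls", [])


# Hand-written sample standing in for output["choices"][0]["text"].
sample_text = (
    "...prompt... ### Response:\n"
    '{"tool_calls": [{"name": "get_weather", "arguments": {"location": "Tokyo"}}]}'
)
print(parse_tool_calls(sample_text))
```

Splitting on the response marker matters because the echoed prompt itself contains a JSON example in the format instruction, which would otherwise be parsed instead of the model's answer.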

Benchmark Results

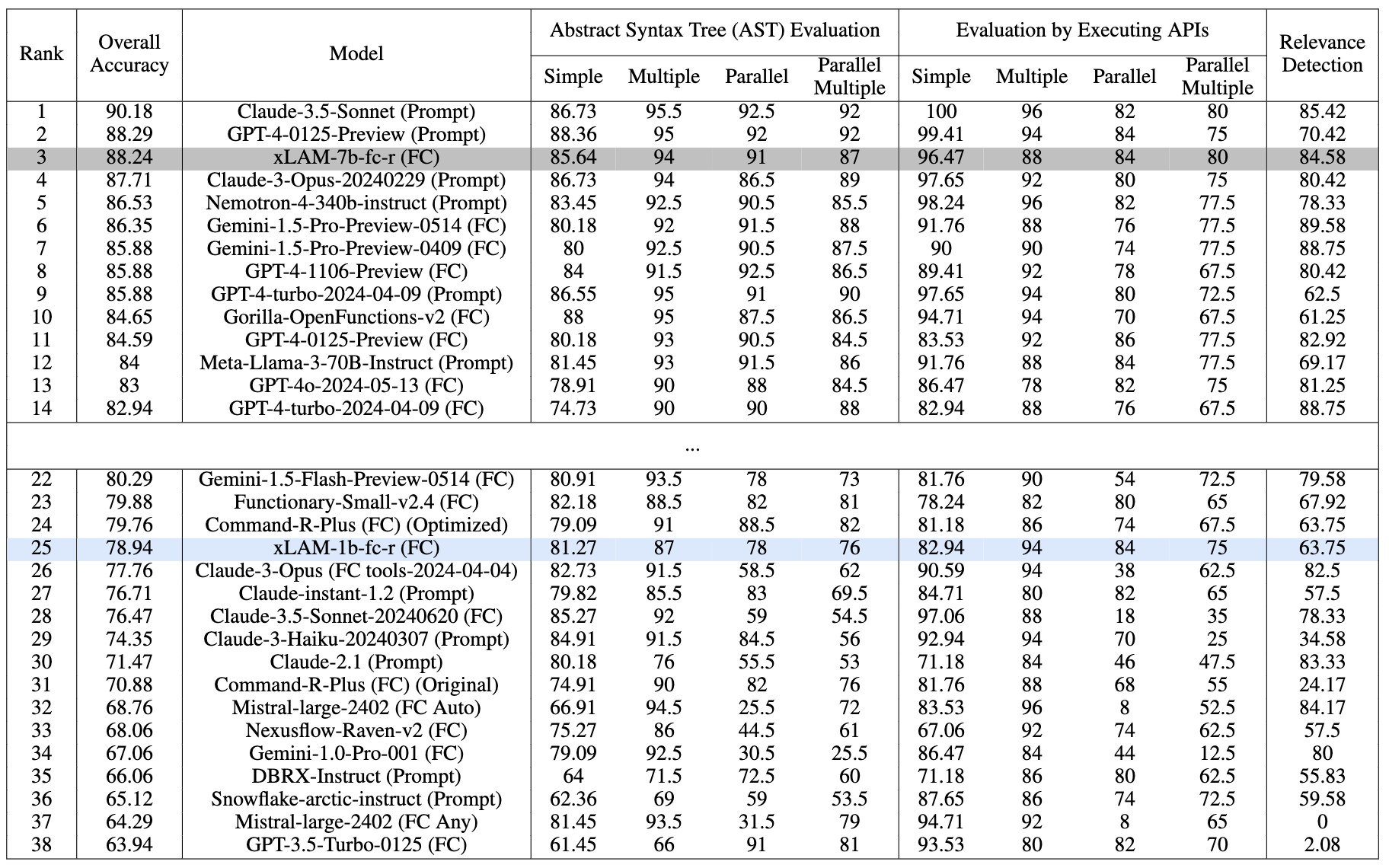

We mainly test our function-calling models on the Berkeley Function-Calling Leaderboard (BFCL), which offers a comprehensive evaluation framework for assessing LLMs' function-calling capabilities across programming languages such as Java, JavaScript, and Python and across various application domains.

Performance comparison on the BFCL benchmark as of 07/18/2024, evaluated with temperature=0.001 and top_p=1.

Our xLAM-7b-fc-r secures 3rd place with an overall accuracy of 88.24% on the leaderboard, outperforming many strong models. Notably, our xLAM-1b-fc-r model is the only tiny model with fewer than 2B parameters on the leaderboard, yet it still achieves a competitive overall accuracy of 78.94%, outperforming GPT-3.5-Turbo and many larger models.

Both models exhibit balanced performance across various categories, showing their strong function-calling capabilities despite their small sizes.

See our paper for more detailed analysis.

License

xLAM-7b-fc-r-gguf is distributed under the CC-BY-NC-4.0 license, with additional terms specified in the Deepseek license.

Citation

If you find this repo helpful, please cite our paper:

@article{liu2024apigen,
  title={APIGen: Automated Pipeline for Generating Verifiable and Diverse Function-Calling Datasets},
  author={Liu, Zuxin and Hoang, Thai and Zhang, Jianguo and Zhu, Ming and Lan, Tian and Kokane, Shirley and Tan, Juntao and Yao, Weiran and Liu, Zhiwei and Feng, Yihao and others},
  journal={arXiv preprint arXiv:2406.18518},
  year={2024}
}