Ziya-Visual-Lyrics-14B

- Main Page: Fengshenbang

- GitHub: Fengshenbang-LM

Brief Introduction

Lyrics is a Large Vision Language Model (LVLM) developed by IDEA CCNL. It is trained in two stages: pre-training (vision-language representation alignment) and instruction fine-tuning (vision-to-language generative learning). In both stages, Lyrics uses a visual refiner to extract local visual features and concrete spatial representations. The refiner consists of image tagging (RAM), object detection (Grounding DINO), and semantic segmentation (SAM) modules. This design keeps fine-grained visual objects from being lost, a loss that would otherwise lead the model to produce irreparable visual hallucinations and factual errors.

Lyrics accepts images, text, and visual objects as input, and produces text and spatial representations of visual objects as output. The model has strong fine-grained visual feature extraction and understanding capabilities and handles a range of vision-centric tasks, including multi-turn visual conversation, visual scene understanding and reasoning, commonsense-grounded image description, and referential dialogue.

Model Architecture

Lyrics introduces MQ-Former as a visual signal perceiver that bridges multi-scale visual and textual information. Through four pre-training tasks, MQ-Former aligns the global visual features provided by the ViT, the local visual features provided by the visual refiner, the spatial representations of visual objects, and the image captions. This alignment gives the model the ability to extract semantic-aware visual objects.

Requirements

- Python 3.8 or later

- PyTorch 1.12 or later

- CUDA 11.3 or later is recommended (GPU users only)
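The requirements above can be checked before loading the model. The following is a minimal sketch, not part of the Lyrics code base: the helper names `parse_version`, `meets_minimum`, and `check_environment` are hypothetical, and the minimum versions are copied from the list above.

```python
import re
import sys

# Minimum versions copied from the requirements list (assumed to be inclusive).
MIN_PYTHON = (3, 8)
MIN_TORCH = (1, 12)
MIN_CUDA = (11, 3)


def parse_version(text):
    """Turn a version string such as '1.12.1+cu113' into a tuple of ints."""
    parts = []
    for piece in text.split("+")[0].split("."):
        match = re.match(r"\d+", piece)
        if match is None:
            break
        parts.append(int(match.group()))
    return tuple(parts)


def meets_minimum(found, minimum):
    """Compare only as many components as the minimum version specifies."""
    return parse_version(found)[: len(minimum)] >= minimum


def check_environment():
    """Return a list of problems; an empty list means every check passed."""
    problems = []
    if sys.version_info[:2] < MIN_PYTHON:
        problems.append("Python is older than 3.8")
    try:
        import torch
    except ImportError:
        problems.append("PyTorch is not installed")
        return problems
    if not meets_minimum(str(torch.__version__), MIN_TORCH):
        problems.append(f"PyTorch {torch.__version__} is older than 1.12")
    cuda_version = torch.version.cuda  # None on CPU-only builds
    if cuda_version is not None and not meets_minimum(cuda_version, MIN_CUDA):
        problems.append(f"CUDA {cuda_version} is older than the recommended 11.3")
    return problems
```

Calling `check_environment()` in a fresh interpreter and printing the returned list is enough to spot an outdated installation before downloading the checkpoint.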

Usage

- Before running the code, either update the file paths in config.json so that they point to the directory containing the model checkpoint, or place your script in the same directory as config.json.
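To find which entries in config.json still need to be updated, a small check like the one below may help. It is a sketch under stated assumptions: this document does not list the key names used in config.json, so the hypothetical helper `find_missing_paths` simply walks every string value and treats anything containing a path separator as a path. Values such as hub model IDs or URLs also contain `/` and will be reported, so treat the result as a list of candidates to review.

```python
import json
import os


def find_missing_paths(config_file):
    """List (key, value) pairs whose value looks like a path that does not exist.

    Heuristic: any string value containing a path separator counts as a path.
    Relative paths are resolved against the directory holding the config file.
    """
    base_dir = os.path.dirname(os.path.abspath(config_file))
    with open(config_file, encoding="utf-8") as handle:
        config = json.load(handle)

    missing = []

    def visit(node, prefix):
        if isinstance(node, dict):
            for key, value in node.items():
                visit(value, f"{prefix}.{key}" if prefix else key)
        elif isinstance(node, list):
            for index, value in enumerate(node):
                visit(value, f"{prefix}[{index}]")
        elif isinstance(node, str) and ("/" in node or os.sep in node):
            candidate = node if os.path.isabs(node) else os.path.join(base_dir, node)
            if not os.path.exists(candidate):
                missing.append((prefix, node))

    visit(config, "")
    return missing
```

For example, `find_missing_paths(os.path.join("your_model_path", "config.json"))` returns an empty list once every path-like entry resolves to an existing file or directory.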

```python
import os

import torch
from PIL import Image
from peft import PeftModel
from torchvision.transforms import Compose, Normalize, Resize, ToTensor
from transformers import InstructBlipProcessor

import fengshen.models.Lyrics.groundingdino.transforms as T
from fengshen.models.Lyrics.modeling_lyrics import LyricsLMForConditionalGeneration

device = "cuda" if torch.cuda.is_available() else "cpu"

_MODEL_PATH = "your_model_path"

# Processor for the image, Q-Former, and LLM inputs (left padding for generation).
processor = InstructBlipProcessor.from_pretrained(
    os.path.join(_MODEL_PATH, "vicuna-13b_processor"), padding_side="left"
)

# Preprocessing for the Grounding DINO branch.
grounding_transforms = T.Compose(
    [
        T.RandomResize([800], max_size=1333),
        T.ToTensor(),
        T.Normalize([0.485, 0.456, 0.406], [0.229, 0.224, 0.225]),
    ]
)

# Preprocessing for the RAM branch.
ram_transforms = Compose(
    [
        Resize((384, 384)),
        ToTensor(),
        Normalize(mean=[0.485, 0.456, 0.406], std=[0.229, 0.224, 0.225]),
    ]
)

# Load the base model, then the PEFT adapter weights from the same directory.
model = LyricsLMForConditionalGeneration.from_pretrained(_MODEL_PATH).to(device).eval().float()
model = PeftModel.from_pretrained(model, _MODEL_PATH).to(device).eval().float()
model.requires_grad_(False)  # inference only: disable gradients for all parameters

prompts = [
    "Question A",
    "Question B",
]

image_paths = [
    "Img Path A",
    "Img Path B",
]

for image_path, text in zip(image_paths, prompts):
    image = Image.open(image_path).convert("RGB")

    # Each visual branch receives its own preprocessed copy of the image.
    ram_pixel_values = ram_transforms(image).unsqueeze(0).to(device)
    grounding_pixel_values = [grounding_transforms(image, None)[0]]
    inputs = processor(images=image, text=text, return_tensors="pt").to(device)

    # Deterministic beam search decoding.
    outputs = model.generate(
        pixel_values=inputs.pixel_values,
        ram_pixel_values=ram_pixel_values,
        grounding_pixel_values=grounding_pixel_values,
        input_ids=inputs.input_ids,
        attention_mask=inputs.attention_mask,
        qformer_input_ids=inputs.qformer_input_ids,
        qformer_attention_mask=inputs.qformer_attention_mask,
        do_sample=False,
        num_beams=5,
        max_length=256,
        min_length=4,
        length_penalty=1.0,
    )

    generated_text = processor.batch_decode(outputs, skip_special_tokens=True)[0].strip()
    print(generated_text, "\n")
```

Zero-shot Image Captioning & General VQA

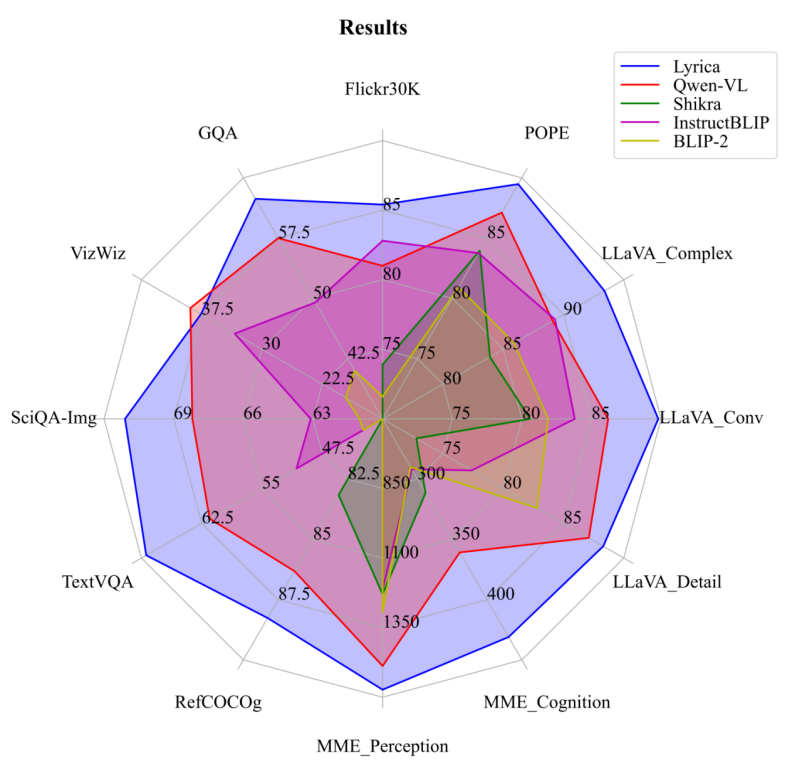

- In image captioning, Lyrics outperforms LVLMs of comparable size on the COCO, Nocaps (0-shot), and Flickr30K (0-shot) datasets, achieving SOTA results.

- In general VQA, Lyrics achieves SOTA results on four datasets and is on par with Qwen-VL on the VizWiz dataset.

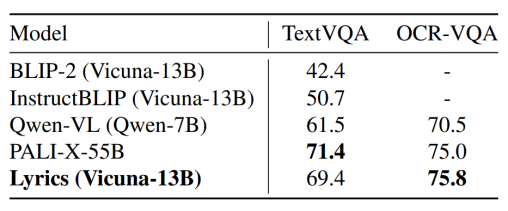

Text-oriented VQA

- On text-oriented recognition and question-answering benchmarks, Lyrics achieves SOTA accuracy among general-purpose LVLMs of the same scale and is comparable to PaLI-X-55B.

- The accuracy of text detection and understanding strongly affects results on these tasks. The object detection and semantic segmentation modules introduced by Lyrics help the model separate text from images more precisely, so Lyrics outperforms Qwen-VL even though Qwen-VL uses a higher-resolution ViT (448 × 448).

Referring Expression Comprehension

- On the referring expression comprehension (REC) task, after fitting on a small amount of data, Lyrics slightly surpasses Qwen-VL and Shikra-13B and achieves the best average performance among current generalist LVLMs on the RefCOCO, RefCOCO+, and RefCOCOg datasets.

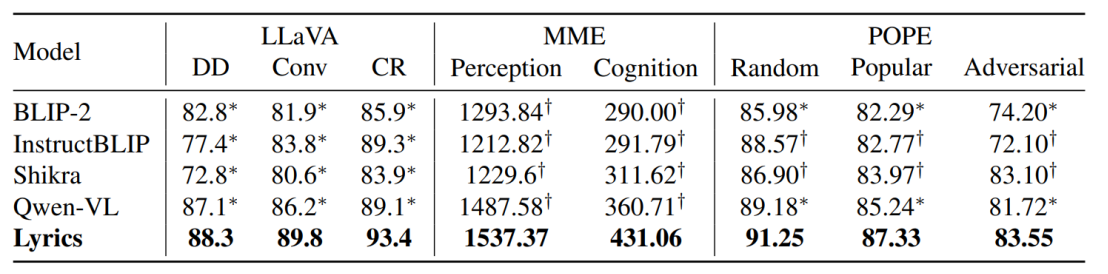
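For readers unfamiliar with how REC predictions are usually scored, the sketch below shows the common convention: a predicted box counts as correct when its intersection over union (IoU) with the ground-truth box is at least 0.5. This document does not state the exact protocol used for the numbers above, so the threshold, the `(x1, y1, x2, y2)` box format, and the function names are assumptions for illustration only.

```python
def box_iou(box_a, box_b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ax1, ay1, ax2, ay2 = box_a
    bx1, by1, bx2, by2 = box_b
    # Width and height of the overlap, clamped to zero when the boxes are disjoint.
    inter_w = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    inter_h = max(0.0, min(ay2, by2) - max(ay1, by1))
    inter = inter_w * inter_h
    union = (ax2 - ax1) * (ay2 - ay1) + (bx2 - bx1) * (by2 - by1) - inter
    return inter / union if union > 0 else 0.0


def rec_accuracy(predictions, references, threshold=0.5):
    """Fraction of predicted boxes whose IoU with the reference meets the threshold."""
    if len(predictions) != len(references):
        raise ValueError("predictions and references must have the same length")
    if not predictions:
        return 0.0
    hits = sum(box_iou(p, r) >= threshold for p, r in zip(predictions, references))
    return hits / len(predictions)
```

Under this convention, a box that overlaps the target only partially can still be scored as a miss, which is why small localization gains show up as accuracy differences between models.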

Real-world Dialogue Evaluation

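- The LLaVA leaderboard evaluates a model's conversation, detailed description, and complex reasoning abilities on 30 images, using GPT-4 to score the gap between the model's generations and those of GPT-4.

- The MME leaderboard covers 14 subtasks across perception and cognition. Perception includes coarse-grained and fine-grained object detection (object existence, count, position, and color) and the recognition of movie posters, celebrities, scenes, landmarks, and artworks. Cognition includes commonsense reasoning, numerical calculation, text translation, and code reasoning.

- The POPE leaderboard uses three sampling strategies (random, popular, and adversarial) to test whether an LVLM is prone to hallucinating specific objects.

- Lyrics outperforms mainstream LVLMs on all three leaderboards.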
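POPE frames hallucination testing as yes/no questions about whether an object is present in an image and reports accuracy, precision, recall, F1, and the proportion of "yes" answers. The sketch below computes these metrics from boolean predictions and labels. It is an illustration, not the official evaluation script: the function name `pope_metrics` is hypothetical, and turning free-form model output into a boolean is left to the caller.

```python
def pope_metrics(predictions, labels):
    """Compute POPE-style metrics from booleans (True means 'yes, the object is present')."""
    if len(predictions) != len(labels):
        raise ValueError("predictions and labels must have the same length")
    total = len(labels)
    tp = sum(1 for p, y in zip(predictions, labels) if p and y)
    fp = sum(1 for p, y in zip(predictions, labels) if p and not y)
    fn = sum(1 for p, y in zip(predictions, labels) if not p and y)
    tn = total - tp - fp - fn

    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {
        "accuracy": (tp + tn) / total if total else 0.0,
        "precision": precision,
        "recall": recall,
        "f1": f1,
        # A yes ratio far above the true rate suggests a bias toward answering "yes".
        "yes_ratio": (tp + fp) / total if total else 0.0,
    }
```

A model that hallucinates objects tends to answer "yes" too often, which shows up as low precision together with a high yes ratio.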

Case Study

Citation

If you use our model in your work, please cite our paper:

```bibtex
@misc{lu2023lyrics,
  title={Lyrics: Boosting Fine-grained Language-Vision Alignment and Comprehension via Semantic-aware Visual Objects},
  author={Junyu Lu and Ruyi Gan and Dixiang Zhang and Xiaojun Wu and Ziwei Wu and Renliang Sun and Jiaxing Zhang and Pingjian Zhang and Yan Song},
  year={2023},
  eprint={2312.05278},
  archivePrefix={arXiv},
  primaryClass={cs.CL}
}
```

You can also cite our website:

```bibtex
@misc{Fengshenbang-LM,
  title={Fengshenbang-LM},
  author={IDEA-CCNL},
  year={2021},
  howpublished={\url{https://github.com/IDEA-CCNL/Fengshenbang-LM}},
}
```
