update


- app.py +370 -125

- checkpoints/epoch=000041-step=000010999.ckpt +3 -0

- stable_diffusion/LICENSE +82 -0

- stable_diffusion/README.md +215 -0

- stable_diffusion/Stable_Diffusion_v1_Model_Card.md +144 -0

- stable_diffusion/assets/a-painting-of-a-fire.png +0 -0

- stable_diffusion/assets/a-photograph-of-a-fire.png +0 -0

- stable_diffusion/assets/a-shirt-with-a-fire-printed-on-it.png +0 -0

- stable_diffusion/assets/a-shirt-with-the-inscription-'fire'.png +0 -0

- stable_diffusion/assets/a-watercolor-painting-of-a-fire.png +0 -0

- stable_diffusion/assets/birdhouse.png +0 -0

- stable_diffusion/assets/fire.png +0 -0

- stable_diffusion/assets/inpainting.png +0 -0

- stable_diffusion/assets/modelfigure.png +0 -0

- stable_diffusion/assets/rdm-preview.jpg +0 -0

- stable_diffusion/assets/reconstruction1.png +0 -0

- stable_diffusion/assets/reconstruction2.png +0 -0

- stable_diffusion/assets/results.gif.REMOVED.git-id +1 -0

- stable_diffusion/assets/rick.jpeg +0 -0

- stable_diffusion/assets/stable-samples/img2img/mountains-1.png +0 -0

- stable_diffusion/assets/stable-samples/img2img/mountains-2.png +0 -0

- stable_diffusion/assets/stable-samples/img2img/mountains-3.png +0 -0

- stable_diffusion/assets/stable-samples/img2img/sketch-mountains-input.jpg +0 -0

- stable_diffusion/assets/stable-samples/img2img/upscaling-in.png.REMOVED.git-id +1 -0

- stable_diffusion/assets/stable-samples/img2img/upscaling-out.png.REMOVED.git-id +1 -0

- stable_diffusion/assets/stable-samples/txt2img/000002025.png +0 -0

- stable_diffusion/assets/stable-samples/txt2img/000002035.png +0 -0

- stable_diffusion/assets/stable-samples/txt2img/merged-0005.png.REMOVED.git-id +1 -0

- stable_diffusion/assets/stable-samples/txt2img/merged-0006.png.REMOVED.git-id +1 -0

- stable_diffusion/assets/stable-samples/txt2img/merged-0007.png.REMOVED.git-id +1 -0

- stable_diffusion/assets/the-earth-is-on-fire,-oil-on-canvas.png +0 -0

- stable_diffusion/assets/txt2img-convsample.png +0 -0

- stable_diffusion/assets/txt2img-preview.png.REMOVED.git-id +1 -0

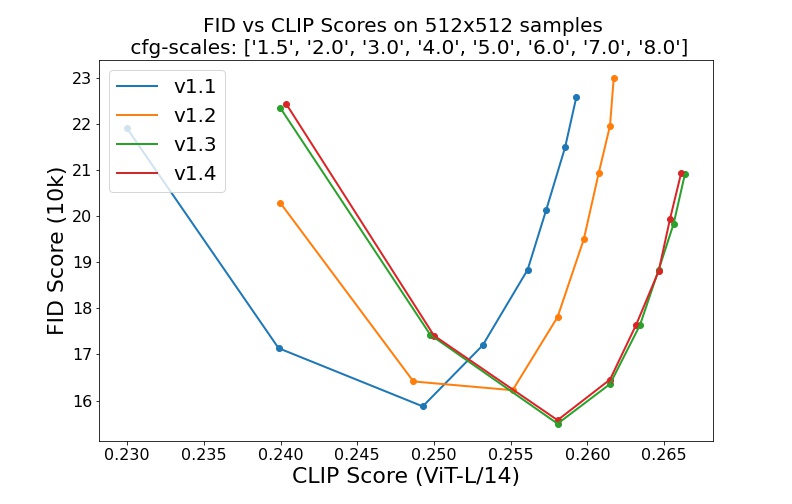

- stable_diffusion/assets/v1-variants-scores.jpg +0 -0

- stable_diffusion/configs/autoencoder/autoencoder_kl_16x16x16.yaml +54 -0

- stable_diffusion/configs/autoencoder/autoencoder_kl_32x32x4.yaml +53 -0

- stable_diffusion/configs/autoencoder/autoencoder_kl_64x64x3.yaml +54 -0

- stable_diffusion/configs/autoencoder/autoencoder_kl_8x8x64.yaml +53 -0

- stable_diffusion/configs/latent-diffusion/celebahq-ldm-vq-4.yaml +86 -0

- stable_diffusion/configs/latent-diffusion/cin-ldm-vq-f8.yaml +98 -0

- stable_diffusion/configs/latent-diffusion/cin256-v2.yaml +68 -0

- stable_diffusion/configs/latent-diffusion/ffhq-ldm-vq-4.yaml +85 -0

- stable_diffusion/configs/latent-diffusion/lsun_bedrooms-ldm-vq-4.yaml +85 -0

- stable_diffusion/configs/latent-diffusion/lsun_churches-ldm-kl-8.yaml +91 -0

- stable_diffusion/configs/latent-diffusion/txt2img-1p4B-eval.yaml +71 -0

- stable_diffusion/configs/retrieval-augmented-diffusion/768x768.yaml +68 -0

- stable_diffusion/configs/stable-diffusion/v1-inference.yaml +70 -0

- stable_diffusion/data/DejaVuSans.ttf +0 -0

- stable_diffusion/data/example_conditioning/superresolution/sample_0.jpg +0 -0

- stable_diffusion/data/example_conditioning/text_conditional/sample_0.txt +1 -0

app.py

CHANGED

@@ -1,146 +1,391 @@

```diff
 import gradio as gr
 import numpy as np
-import random
-from diffusers import DiffusionPipeline
 import torch
 
-
 
-
-    torch.cuda.max_memory_allocated(device=device)
-    pipe = DiffusionPipeline.from_pretrained("stabilityai/sdxl-turbo", torch_dtype=torch.float16, variant="fp16", use_safetensors=True)
-    pipe.enable_xformers_memory_efficient_attention()
-    pipe = pipe.to(device)
-else:
-    pipe = DiffusionPipeline.from_pretrained("stabilityai/sdxl-turbo", use_safetensors=True)
-    pipe = pipe.to(device)
 
-
-
 
-def
 
-
-
-
-
-
-
-
-
-
-
-
-
-
-)
 
-
-
-examples = [
-    "Astronaut in a jungle, cold color palette, muted colors, detailed, 8k",
-    "An astronaut riding a green horse",
-    "A delicious ceviche cheesecake slice",
-]
-
-css="""
-#col-container {
-    margin: 0 auto;
-    max-width: 520px;
-}
-"""
-
-if torch.cuda.is_available():
-    power_device = "GPU"
-else:
-    power_device = "CPU"
-
-with gr.Blocks(css=css) as demo:
 
-
-
-#
-
-""")
 
-
-
-
-
-
-
-
-
-
-
-
 
-
-
-
-
-
-
-
-
-
-
-
-
-
-
-
-
-
-
-
-
-
 with gr.Row():
-
-
-
-    minimum=256,
-    maximum=MAX_IMAGE_SIZE,
-    step=32,
-    value=512,
-)
-
-height = gr.Slider(
-    label="Height",
-    minimum=256,
-    maximum=MAX_IMAGE_SIZE,
-    step=32,
-    value=512,
-)
-
 with gr.Row():
-
-
-
-
-
-
-
 )
-
-
-
-
-
-
-
 )
-
 gr.Examples(
-examples
-
 )
-
-
-fn
-inputs
-
 )
 
 demo.queue().launch()
```

```python
from __future__ import annotations

import math
import random
import sys
from argparse import ArgumentParser

from tqdm.auto import trange
import einops
import gradio as gr
import k_diffusion as K
import numpy as np
import torch
import torch.nn as nn
from einops import rearrange
from omegaconf import OmegaConf
from PIL import Image, ImageOps, ImageFilter
from torch import autocast
import cv2
import imageio

sys.path.append("./stable_diffusion")

from stable_diffusion.ldm.util import instantiate_from_config

class CFGDenoiser(nn.Module):
    def __init__(self, model):
        super().__init__()
        self.inner_model = model

    def forward(self, z_0, z_1, sigma, cond, uncond, text_cfg_scale, image_cfg_scale):
        # One batch of three: [text + image cond, image cond only, fully unconditional].
        cfg_z_0 = einops.repeat(z_0, "1 ... -> n ...", n=3)
        cfg_z_1 = einops.repeat(z_1, "1 ... -> n ...", n=3)
        cfg_sigma = einops.repeat(sigma, "1 ... -> n ...", n=3)
        cfg_cond = {
            "c_crossattn": [torch.cat([cond["c_crossattn"][0], uncond["c_crossattn"][0], uncond["c_crossattn"][0]])],
            "c_concat": [torch.cat([cond["c_concat"][0], cond["c_concat"][0], uncond["c_concat"][0]])],
        }
        output_0, output_1 = self.inner_model(cfg_z_0, cfg_z_1, cfg_sigma, cond=cfg_cond)
        out_cond_0, out_img_cond_0, out_uncond_0 = output_0.chunk(3)
        out_cond_1, _, _ = output_1.chunk(3)
        # Image branch: two-scale guidance. Mask branch: fully conditioned pass only.
        return out_uncond_0 + text_cfg_scale * (out_cond_0 - out_img_cond_0) + image_cfg_scale * (out_img_cond_0 - out_uncond_0), \
            out_cond_1
```
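`CFGDenoiser` above runs the denoiser on a batch of three conditionings: text plus image, image with the null text, and null text with a zeroed image latent. It then mixes the image predictions with two scales, which is the same two-scale guidance InstructPix2Pix uses. The mask prediction comes from the fully conditioned pass only. Below is a minimal sketch of just the mixing step; the function name `combine_dual_cfg` is hypothetical and not part of the app.

```python
def combine_dual_cfg(out_cond, out_img_cond, out_uncond, text_cfg_scale, image_cfg_scale):
    """Two-scale guidance, same expression as CFGDenoiser.forward (image branch).

    out_cond:     prediction with text and image conditioning (batch index 0)
    out_img_cond: prediction with image conditioning and the null text (index 1)
    out_uncond:   prediction with the null text and a zeroed image latent (index 2)
    Only +, - and * are used, so floats, NumPy arrays or torch tensors all work.
    """
    return (
        out_uncond
        + text_cfg_scale * (out_cond - out_img_cond)      # push toward the text instruction
        + image_cfg_scale * (out_img_cond - out_uncond)   # push toward the input image
    )


# With both scales at 1.0 the terms telescope back to out_cond.
print(combine_dual_cfg(3.0, 2.0, 1.0, 1.0, 1.0))  # 3.0
# UI defaults (text 7.5, image 1.5): 1 + 7.5 * 1 + 1.5 * 1
print(combine_dual_cfg(3.0, 2.0, 1.0, 7.5, 1.5))  # 10.0
```

Raising `text_cfg_scale` weights the instruction more heavily. `image_cfg_scale` roughly trades off how closely the result follows the input image.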

```python
def load_model_from_config(config, ckpt, vae_ckpt=None, verbose=False):
    print(f"Loading model from {ckpt}")
    pl_sd = torch.load(ckpt, map_location="cpu")
    if "global_step" in pl_sd:
        print(f"Global Step: {pl_sd['global_step']}")
    sd = pl_sd["state_dict"]
    if vae_ckpt is not None:
        print(f"Loading VAE from {vae_ckpt}")
        vae_sd = torch.load(vae_ckpt, map_location="cpu")["state_dict"]
        sd = {
            k: vae_sd[k[len("first_stage_model.") :]] if k.startswith("first_stage_model.") else v
            for k, v in sd.items()
        }
    model = instantiate_from_config(config.model)
    m, u = model.load_state_dict(sd, strict=True)
    if len(m) > 0 and verbose:
        print("missing keys:")
        print(m)
    if len(u) > 0 and verbose:
        print("unexpected keys:")
        print(u)
    return model

def append_dims(x, target_dims):
    """Appends dimensions to the end of a tensor until it has target_dims dimensions."""
    dims_to_append = target_dims - x.ndim
    if dims_to_append < 0:
        raise ValueError(f'input has {x.ndim} dims but target_dims is {target_dims}, which is less')
    return x[(...,) + (None,) * dims_to_append]

class CompVisDenoiser(K.external.CompVisDenoiser):
    def __init__(self, model, quantize=False, device='cpu'):
        super().__init__(model, quantize, device)

    def get_eps(self, *args, **kwargs):
        return self.inner_model.apply_model(*args, **kwargs)

    def forward(self, input_0, input_1, sigma, **kwargs):
        c_out, c_in = [append_dims(x, input_0.ndim) for x in self.get_scalings(sigma)]
        # eps_0, eps_1 = self.get_eps(input_0 * c_in, input_1 * c_in, self.sigma_to_t(sigma), **kwargs)
        eps_0, eps_1 = self.get_eps(input_0 * c_in, self.sigma_to_t(sigma), **kwargs)

        return input_0 + eps_0 * c_out, eps_1

def to_d(x, sigma, denoised):
    """Converts a denoiser output to a Karras ODE derivative."""
    return (x - denoised) / append_dims(sigma, x.ndim)

def default_noise_sampler(x):
    return lambda sigma, sigma_next: torch.randn_like(x)

def get_ancestral_step(sigma_from, sigma_to, eta=1.):
    """Calculates the noise level (sigma_down) to step down to and the amount
    of noise to add (sigma_up) when doing an ancestral sampling step."""
    if not eta:
        return sigma_to, 0.
    sigma_up = min(sigma_to, eta * (sigma_to ** 2 * (sigma_from ** 2 - sigma_to ** 2) / sigma_from ** 2) ** 0.5)
    sigma_down = (sigma_to ** 2 - sigma_up ** 2) ** 0.5
    return sigma_down, sigma_up

def decode_mask(mask, height=256, width=256):
    mask = nn.functional.interpolate(mask, size=(height, width), mode="bilinear", align_corners=False)
    mask = torch.where(mask > 0, 1, -1)  # Thresholding step
    mask = torch.clamp((mask + 1.0) / 2.0, min=0.0, max=1.0)
    mask = 255.0 * rearrange(mask, "1 c h w -> h w c")
    mask = torch.cat([mask, mask, mask], dim=-1)
    mask = mask.type(torch.uint8).cpu().numpy()
    return mask

@torch.no_grad()
def sample_euler_ancestral(model, x_0, x_1, sigmas, height, width, extra_args=None, disable=None, eta=1., s_noise=1., noise_sampler=None):
    """Ancestral sampling with Euler method steps."""
    extra_args = {} if extra_args is None else extra_args
    noise_sampler = default_noise_sampler(x_0) if noise_sampler is None else noise_sampler
    s_in = x_0.new_ones([x_0.shape[0]])

    mask_list = []
    image_list = []
    for i in trange(len(sigmas) - 1, disable=disable):
        denoised_0, denoised_1 = model(x_0, x_1, sigmas[i] * s_in, **extra_args)
        image_list.append(denoised_0)

        sigma_down, sigma_up = get_ancestral_step(sigmas[i], sigmas[i + 1], eta=eta)
        d_0 = to_d(x_0, sigmas[i], denoised_0)

        # Euler method
        dt = sigma_down - sigmas[i]
        x_0 = x_0 + d_0 * dt

        if sigmas[i + 1] > 0:
            x_0 = x_0 + noise_sampler(sigmas[i], sigmas[i + 1]) * s_noise * sigma_up

        x_1 = denoised_1
        mask_list.append(decode_mask(x_1, height, width))

    image_list = torch.cat(image_list, dim=0)

    return x_0, x_1, image_list, mask_list
```
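In `sample_euler_ancestral` above, only the image latent `x_0` takes a real Euler ancestral step. The mask latent `x_1` is simply replaced by the latest denoised mask on each iteration. Below is a float-only sketch of one image-latent step that mirrors `get_ancestral_step` and `to_d`. The names `ancestral_split` and `euler_ancestral_update` are hypothetical. The noise source is injectable so the step can be checked deterministically.

```python
import random


def ancestral_split(sigma_from, sigma_to, eta=1.0):
    """Same arithmetic as get_ancestral_step in app.py, on plain floats."""
    if not eta:
        return sigma_to, 0.0
    sigma_up = min(sigma_to, eta * (sigma_to ** 2 * (sigma_from ** 2 - sigma_to ** 2) / sigma_from ** 2) ** 0.5)
    sigma_down = (sigma_to ** 2 - sigma_up ** 2) ** 0.5
    return sigma_down, sigma_up


def euler_ancestral_update(x, denoised, sigma, sigma_next, eta=1.0,
                           noise=lambda: random.gauss(0.0, 1.0)):
    """One iteration of the x_0 update in sample_euler_ancestral, for a single value."""
    sigma_down, sigma_up = ancestral_split(sigma, sigma_next, eta)
    d = (x - denoised) / sigma          # to_d: Karras ODE derivative
    x = x + d * (sigma_down - sigma)    # deterministic Euler move down to sigma_down
    if sigma_next > 0:
        x = x + noise() * sigma_up      # re-inject fresh noise up to sigma_next
    return x


# The split preserves the target noise level: sigma_down**2 + sigma_up**2 == sigma_to**2.
down, up = ancestral_split(10.0, 5.0)
print(round(down, 6), round(down ** 2 + up ** 2, 6))  # 2.5 25.0
```

On the last step (`sigma_next == 0`) no noise is added and the update lands exactly on `denoised`. Setting `eta=0` turns the sampler into plain deterministic Euler.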

```python
parser = ArgumentParser()
parser.add_argument("--resolution", default=512, type=int)
parser.add_argument("--config", default="configs/generate_diffree.yaml", type=str)
parser.add_argument("--ckpt", default="checkpoints/epoch=000041-step=000010999.ckpt", type=str)
parser.add_argument("--vae-ckpt", default=None, type=str)
args = parser.parse_args()

config = OmegaConf.load(args.config)
model = load_model_from_config(config, args.ckpt, args.vae_ckpt)
model.eval().cuda()
model_wrap = CompVisDenoiser(model)
model_wrap_cfg = CFGDenoiser(model_wrap)
null_token = model.get_learned_conditioning([""])

def generate(
    input_image: Image.Image,
    instruction: str,
    steps: int,
    randomize_seed: bool,
    seed: int,
    randomize_cfg: bool,
    text_cfg_scale: float,
    image_cfg_scale: float,
):
    seed = random.randint(0, 100000) if randomize_seed else seed
    text_cfg_scale = round(random.uniform(6.0, 9.0), ndigits=2) if randomize_cfg else text_cfg_scale
    image_cfg_scale = round(random.uniform(1.2, 1.8), ndigits=2) if randomize_cfg else image_cfg_scale

    width, height = input_image.size
    factor = args.resolution / max(width, height)
    factor = math.ceil(min(width, height) * factor / 64) * 64 / min(width, height)
    width = int((width * factor) // 64) * 64
    height = int((height * factor) // 64) * 64
    input_image = ImageOps.fit(input_image, (width, height), method=Image.Resampling.LANCZOS)
    input_image_copy = input_image.convert("RGB")

    if instruction == "":
        # Nothing to add: return the resized input. The list must match the 10 outputs
        # wired to this handler (seed, both CFG scales, then the images and videos).
        return [int(seed), text_cfg_scale, image_cfg_scale, input_image, input_image, None, None, None, input_image_copy, None]

    with torch.no_grad(), autocast("cuda"), model.ema_scope():
        cond = {}
        cond["c_crossattn"] = [model.get_learned_conditioning([instruction])]
        input_image = 2 * torch.tensor(np.array(input_image)).float() / 255 - 1
        input_image = rearrange(input_image, "h w c -> 1 c h w").to(model.device)
        cond["c_concat"] = [model.encode_first_stage(input_image).mode()]

        uncond = {}
        uncond["c_crossattn"] = [null_token]
        uncond["c_concat"] = [torch.zeros_like(cond["c_concat"][0])]

        sigmas = model_wrap.get_sigmas(steps)

        extra_args = {
            "cond": cond,
            "uncond": uncond,
            "text_cfg_scale": text_cfg_scale,
            "image_cfg_scale": image_cfg_scale,
        }
        torch.manual_seed(seed)
        z_0 = torch.randn_like(cond["c_concat"][0]) * sigmas[0]
        z_1 = torch.randn_like(cond["c_concat"][0]) * sigmas[0]

        z_0, z_1, image_list, mask_list = sample_euler_ancestral(model_wrap_cfg, z_0, z_1, sigmas, height, width, extra_args=extra_args)

        x_0 = model.decode_first_stage(z_0)

        if model.first_stage_downsample:
            x_1 = nn.functional.interpolate(z_1, size=(height, width), mode="bilinear", align_corners=False)
            x_1 = torch.where(x_1 > 0, 1, -1)  # Thresholding step
        else:
            x_1 = model.decode_first_stage(z_1)

        x_0 = torch.clamp((x_0 + 1.0) / 2.0, min=0.0, max=1.0)
        x_1 = torch.clamp((x_1 + 1.0) / 2.0, min=0.0, max=1.0)
        x_0 = 255.0 * rearrange(x_0, "1 c h w -> h w c")
        x_1 = 255.0 * rearrange(x_1, "1 c h w -> h w c")
        x_1 = torch.cat([x_1, x_1, x_1], dim=-1)
        edited_image = Image.fromarray(x_0.type(torch.uint8).cpu().numpy())
        edited_mask = Image.fromarray(x_1.type(torch.uint8).cpu().numpy())

        # Decode every intermediate prediction (in batches of 50) into video frames.
        image_video = []

        batch_size = 50
        for i in range(0, len(image_list), batch_size):
            if i + batch_size < len(image_list):
                tmp_image_list = image_list[i:i + batch_size]
            else:
                tmp_image_list = image_list[i:]
            tmp_image_list = model.decode_first_stage(tmp_image_list)
            tmp_image_list = torch.clamp((tmp_image_list + 1.0) / 2.0, min=0.0, max=1.0)
            tmp_image_list = 255.0 * rearrange(tmp_image_list, "b c h w -> b h w c")
            tmp_image_list = tmp_image_list.type(torch.uint8).cpu().numpy()
            # image list to image
            for image in tmp_image_list:
                image_video.append(image)

    # for i,image in enumerate(mask_list):
    #     Image.fromarray(image).save(f"test/mask_{i}.png")

    image_video_path = "image.mp4"
    fps = 30
    with imageio.get_writer(image_video_path, fps=fps) as video:
        for image in image_video:
            video.append_data(image)

    # Dilate edited_mask
    edited_mask_copy = edited_mask.copy()
    kernel = np.ones((3, 3), np.uint8)
    edited_mask = cv2.dilate(np.array(edited_mask), kernel, iterations=3)
    edited_mask = Image.fromarray(edited_mask)

    # Blend: edited pixels inside the blurred mask, original pixels outside it.
    m_img = edited_mask.filter(ImageFilter.GaussianBlur(radius=3))
    m_img = np.asarray(m_img).astype('float') / 255.0
    img_np = np.asarray(input_image_copy).astype('float') / 255.0
    ours_np = np.asarray(edited_image).astype('float') / 255.0

    mix_image_np = m_img * ours_np + (1 - m_img) * img_np
    mix_image = Image.fromarray((mix_image_np * 255).astype(np.uint8)).convert('RGB')

    # Overlay a semi-transparent red tint over the masked region.
    red = np.array(mix_image).astype('float') * 1
    red[:, :, 0] = 180.0
    red[:, :, 2] = 0
    red[:, :, 1] = 0
    mix_result_with_red_mask = np.array(mix_image)
    mix_result_with_red_mask = Image.fromarray(
        (mix_result_with_red_mask.astype('float') * (1 - m_img.astype('float') / 2.0) +
         m_img.astype('float') / 2.0 * red).astype('uint8'))

    mask_video_path = "mask.mp4"
    fps = 30
    with imageio.get_writer(mask_video_path, fps=fps) as video:
        for image in mask_list:
            video.append_data(image)

    return [int(seed), text_cfg_scale, image_cfg_scale, edited_image, mix_image, edited_mask_copy, mask_video_path, image_video_path, input_image_copy, mix_result_with_red_mask]

def reset():
    return [100, "Randomize Seed", 1372, "Fix CFG", 7.5, 1.5, None, None, None, None, None, None, None]

def get_example():
    return [
        ["test/dufu.png", "sunglasses", 100, "Fix Seed", 1372, "Fix CFG", 7.5, 1.5],
        ["test/dufu.png", "black and white suit", 100, "Fix Seed", 1372, "Fix CFG", 7.5, 1.5],
        ["test/dufu.png", "blue medical mask", 100, "Fix Seed", 1372, "Fix CFG", 7.5, 1.5],
        ["test/girl.jpeg", "diamond necklace", 100, "Fix Seed", 1372, "Fix CFG", 7.5, 1.5],
        ["test/girl.jpeg", "shiny golden crown", 100, "Fix Seed", 1372, "Fix CFG", 7.5, 1.5],
        ["test/girl.jpeg", "swimming duckling", 100, "Fix Seed", 1372, "Fix CFG", 7.5, 1.5],
        ["test/girl.jpeg", "reflective sunglasses", 100, "Fix Seed", 1372, "Fix CFG", 7.5, 1.5],
        ["test/girl.jpeg", "the queen's crown", 100, "Fix Seed", 1372, "Fix CFG", 7.5, 1.5],
        ["test/girl.jpeg", "gorgeous yellow gown", 100, "Fix Seed", 1372, "Fix CFG", 7.5, 1.5],
        ["test/iron_man.jpg", "sunglasses", 100, "Fix Seed", 1372, "Fix CFG", 7.5, 1.5]
    ]

with gr.Blocks(css="footer {visibility: hidden}") as demo:
    with gr.Row():
        gr.Markdown(
            "<div align='center'><font size='14'>Diffree: Text-Guided Shape Free Object Inpainting with Diffusion Model</font></div>"  # noqa
        )

    with gr.Row():
        with gr.Column(scale=1, min_width=100):
            with gr.Row():
                input_image = gr.Image(label="Input Image", type="pil", interactive=True)
            with gr.Row():
                instruction = gr.Textbox(lines=1, label="Object description", interactive=True)
            with gr.Row():
                steps = gr.Number(value=100, precision=0, label="Steps", interactive=True)
                randomize_seed = gr.Radio(
                    ["Fix Seed", "Randomize Seed"],
                    value="Randomize Seed",
                    type="index",
                    label="Seed Selection",
                    show_label=False,
                    interactive=True,
                )
                seed = gr.Number(value=1372, precision=0, label="Seed", interactive=True)
                randomize_cfg = gr.Radio(
                    ["Fix CFG", "Randomize CFG"],
                    value="Fix CFG",
                    type="index",
                    label="CFG Selection",
                    show_label=False,
                    interactive=True,
                )
                text_cfg_scale = gr.Number(value=7.5, label="Text CFG", interactive=True)
                image_cfg_scale = gr.Number(value=1.5, label="Image CFG", interactive=True)
            with gr.Row():
                generate_button = gr.Button("Generate")
                reset_button = gr.Button("Reset")
        with gr.Column(scale=1, min_width=100):
            with gr.Column():
                mix_image = gr.Image(label="Mix Image", type="pil", interactive=False)
            with gr.Column():
                edited_mask = gr.Image(label="Output Mask", type="pil", interactive=False)

    with gr.Accordion('More outputs', open=False):
        with gr.Row():
            image_video = gr.Video(label="Real-time Image Output")
            mask_video = gr.Video(label="Real-time Mask Output")
        with gr.Row():
            original_image = gr.Image(label="Original Image", type="pil", interactive=False)
            edited_image = gr.Image(label="Output Image", type="pil", interactive=False)
            mix_result_with_red_mask = gr.Image(label="Mix Image With Red Mask", type="pil", interactive=False)

    with gr.Row():
        gr.Examples(
            examples=get_example(),
            fn=generate,
            inputs=[input_image, instruction, steps, randomize_seed, seed, randomize_cfg, text_cfg_scale, image_cfg_scale],
            outputs=[seed, text_cfg_scale, image_cfg_scale, edited_image, mix_image, edited_mask, mask_video, image_video, original_image, mix_result_with_red_mask],
            cache_examples=True,
        )

    generate_button.click(
        fn=generate,
        inputs=[
            input_image,
            instruction,
            steps,
            randomize_seed,
            seed,
            randomize_cfg,
            text_cfg_scale,
            image_cfg_scale,
        ],
        outputs=[seed, text_cfg_scale, image_cfg_scale, edited_image, mix_image, edited_mask, mask_video, image_video, original_image, mix_result_with_red_mask],
    )
    reset_button.click(
        fn=reset,
        inputs=[],
        outputs=[steps, randomize_seed, seed, randomize_cfg, text_cfg_scale, image_cfg_scale, edited_image, mix_image, edited_mask, mask_video, image_video, original_image, mix_result_with_red_mask],
    )

# demo.queue(concurrency_count=1)
# demo.launch(share=True)

demo.queue().launch()
```
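Before encoding, `generate()` snaps the input image to a size the latent model accepts. It rescales the image relative to `--resolution`, rounds the short side up to a multiple of 64, and then floors both sides to multiples of 64 before calling `ImageOps.fit`. Below is a stdlib sketch of just that arithmetic. The helper name `diffree_target_size` is hypothetical; the app does this inline.

```python
import math


def diffree_target_size(width, height, resolution=512):
    """Reproduce the (width, height) computation at the top of generate()."""
    factor = resolution / max(width, height)
    # Round the scaled short side up to the next multiple of 64 ...
    factor = math.ceil(min(width, height) * factor / 64) * 64 / min(width, height)
    # ... then floor both scaled sides to multiples of 64.
    new_width = int((width * factor) // 64) * 64
    new_height = int((height * factor) // 64) * 64
    return new_width, new_height


print(diffree_target_size(1024, 768))  # (512, 384)
print(diffree_target_size(768, 1024))  # (384, 512)
print(diffree_target_size(512, 512))   # (512, 512)
```

The short side is rounded up first, so for some aspect ratios the long side ends up above `--resolution`. For example, a 1000-pixel-wide, 626-pixel-tall input gets a width of 576.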

checkpoints/epoch=000041-step=000010999.ckpt

ADDED

@@ -0,0 +1,3 @@

```text
version https://git-lfs.github.com/spec/v1
oid sha256:d1147206d6dc17d94d2e1651231a4a9654a6e5b30fa920ec6cfa15964fa0d1b9
size 7720451618
```
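The checkpoint is committed as a Git LFS pointer, not as the roughly 7.7 GB file itself. Under the Git LFS v1 pointer format, each line is `key value`: `version` comes first, followed by `oid` (hash algorithm and hex digest) and `size` in bytes. Below is a minimal stdlib sketch that parses such a pointer and checks a downloaded file against it. The function names are hypothetical.

```python
import hashlib

POINTER = """\
version https://git-lfs.github.com/spec/v1
oid sha256:d1147206d6dc17d94d2e1651231a4a9654a6e5b30fa920ec6cfa15964fa0d1b9
size 7720451618
"""


def parse_lfs_pointer(text):
    """Parse a Git LFS v1 pointer into its hash algorithm, digest and size."""
    fields = {}
    for line in text.strip().splitlines():
        key, _, value = line.partition(" ")
        fields[key] = value
    if fields.get("version") != "https://git-lfs.github.com/spec/v1":
        raise ValueError("not a Git LFS v1 pointer")
    algorithm, _, digest = fields["oid"].partition(":")
    return {"algorithm": algorithm, "oid": digest, "size": int(fields["size"])}


def file_matches_pointer(path, pointer):
    """Stream the file in 1 MiB chunks and compare its size and SHA-256 to the pointer."""
    if pointer["algorithm"] != "sha256":
        raise ValueError("only sha256 pointers are handled in this sketch")
    digest = hashlib.sha256()
    size = 0
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(1 << 20), b""):
            digest.update(chunk)
            size += len(chunk)
    return size == pointer["size"] and digest.hexdigest() == pointer["oid"]


print(parse_lfs_pointer(POINTER)["size"])  # 7720451618
```

After `git lfs pull`, calling `file_matches_pointer("checkpoints/epoch=000041-step=000010999.ckpt", parse_lfs_pointer(POINTER))` should return `True`. If the pull did not happen, the file on disk is still the small pointer text and the check returns `False`.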

stable_diffusion/LICENSE

ADDED

|

@@ -0,0 +1,82 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

Copyright (c) 2022 Robin Rombach and Patrick Esser and contributors

|

| 2 |

+

|

| 3 |

+

CreativeML Open RAIL-M

|

| 4 |

+

dated August 22, 2022

|

| 5 |

+

|

| 6 |

+

Section I: PREAMBLE

|

| 7 |

+

|

| 8 |

+

Multimodal generative models are being widely adopted and used, and have the potential to transform the way artists, among other individuals, conceive and benefit from AI or ML technologies as a tool for content creation.

Notwithstanding the current and potential benefits that these artifacts can bring to society at large, there are also concerns about potential misuses of them, either due to their technical limitations or ethical considerations.

In short, this license strives for both the open and responsible downstream use of the accompanying model. When it comes to the open character, we took inspiration from open source permissive licenses regarding the grant of IP rights. Referring to the downstream responsible use, we added use-based restrictions not permitting the use of the Model in very specific scenarios, in order for the licensor to be able to enforce the license in case potential misuses of the Model may occur. At the same time, we strive to promote open and responsible research on generative models for art and content generation.

Even though downstream derivative versions of the model could be released under different licensing terms, the latter will always have to include - at minimum - the same use-based restrictions as the ones in the original license (this license). We believe in the intersection between open and responsible AI development; thus, this License aims to strike a balance between both in order to enable responsible open-science in the field of AI.

This License governs the use of the model (and its derivatives) and is informed by the model card associated with the model.

NOW THEREFORE, You and Licensor agree as follows:

1. Definitions

- "License" means the terms and conditions for use, reproduction, and Distribution as defined in this document.
- "Data" means a collection of information and/or content extracted from the dataset used with the Model, including to train, pretrain, or otherwise evaluate the Model. The Data is not licensed under this License.
- "Output" means the results of operating a Model as embodied in informational content resulting therefrom.
- "Model" means any accompanying machine-learning based assemblies (including checkpoints), consisting of learnt weights, parameters (including optimizer states), corresponding to the model architecture as embodied in the Complementary Material, that have been trained or tuned, in whole or in part on the Data, using the Complementary Material.
- "Derivatives of the Model" means all modifications to the Model, works based on the Model, or any other model which is created or initialized by transfer of patterns of the weights, parameters, activations or output of the Model, to the other model, in order to cause the other model to perform similarly to the Model, including - but not limited to - distillation methods entailing the use of intermediate data representations or methods based on the generation of synthetic data by the Model for training the other model.
- "Complementary Material" means the accompanying source code and scripts used to define, run, load, benchmark or evaluate the Model, and used to prepare data for training or evaluation, if any. This includes any accompanying documentation, tutorials, examples, etc, if any.
- "Distribution" means any transmission, reproduction, publication or other sharing of the Model or Derivatives of the Model to a third party, including providing the Model as a hosted service made available by electronic or other remote means - e.g. API-based or web access.
- "Licensor" means the copyright owner or entity authorized by the copyright owner that is granting the License, including the persons or entities that may have rights in the Model and/or distributing the Model.
- "You" (or "Your") means an individual or Legal Entity exercising permissions granted by this License and/or making use of the Model for whichever purpose and in any field of use, including usage of the Model in an end-use application - e.g. chatbot, translator, image generator.
- "Third Parties" means individuals or legal entities that are not under common control with Licensor or You.
- "Contribution" means any work of authorship, including the original version of the Model and any modifications or additions to that Model or Derivatives of the Model thereof, that is intentionally submitted to Licensor for inclusion in the Model by the copyright owner or by an individual or Legal Entity authorized to submit on behalf of the copyright owner. For the purposes of this definition, "submitted" means any form of electronic, verbal, or written communication sent to the Licensor or its representatives, including but not limited to communication on electronic mailing lists, source code control systems, and issue tracking systems that are managed by, or on behalf of, the Licensor for the purpose of discussing and improving the Model, but excluding communication that is conspicuously marked or otherwise designated in writing by the copyright owner as "Not a Contribution."
- "Contributor" means Licensor and any individual or Legal Entity on behalf of whom a Contribution has been received by Licensor and subsequently incorporated within the Model.

Section II: INTELLECTUAL PROPERTY RIGHTS

Both copyright and patent grants apply to the Model, Derivatives of the Model and Complementary Material. The Model and Derivatives of the Model are subject to additional terms as described in Section III.

2. Grant of Copyright License. Subject to the terms and conditions of this License, each Contributor hereby grants to You a perpetual, worldwide, non-exclusive, no-charge, royalty-free, irrevocable copyright license to reproduce, prepare, publicly display, publicly perform, sublicense, and distribute the Complementary Material, the Model, and Derivatives of the Model.
3. Grant of Patent License. Subject to the terms and conditions of this License and where and as applicable, each Contributor hereby grants to You a perpetual, worldwide, non-exclusive, no-charge, royalty-free, irrevocable (except as stated in this paragraph) patent license to make, have made, use, offer to sell, sell, import, and otherwise transfer the Model and the Complementary Material, where such license applies only to those patent claims licensable by such Contributor that are necessarily infringed by their Contribution(s) alone or by combination of their Contribution(s) with the Model to which such Contribution(s) was submitted. If You institute patent litigation against any entity (including a cross-claim or counterclaim in a lawsuit) alleging that the Model and/or Complementary Material or a Contribution incorporated within the Model and/or Complementary Material constitutes direct or contributory patent infringement, then any patent licenses granted to You under this License for the Model and/or Work shall terminate as of the date such litigation is asserted or filed.

Section III: CONDITIONS OF USAGE, DISTRIBUTION AND REDISTRIBUTION

4. Distribution and Redistribution. You may host for Third Party remote access purposes (e.g. software-as-a-service), reproduce and distribute copies of the Model or Derivatives of the Model thereof in any medium, with or without modifications, provided that You meet the following conditions:
a. Use-based restrictions as referenced in paragraph 5 MUST be included as an enforceable provision by You in any type of legal agreement (e.g. a license) governing the use and/or distribution of the Model or Derivatives of the Model, and You shall give notice to subsequent users You Distribute to, that the Model or Derivatives of the Model are subject to paragraph 5. This provision does not apply to the use of Complementary Material.
b. You must give any Third Party recipients of the Model or Derivatives of the Model a copy of this License;
c. You must cause any modified files to carry prominent notices stating that You changed the files;
d. You must retain all copyright, patent, trademark, and attribution notices excluding those notices that do not pertain to any part of the Model, Derivatives of the Model.
You may add Your own copyright statement to Your modifications and may provide additional or different license terms and conditions - respecting paragraph 4.a. - for use, reproduction, or Distribution of Your modifications, or for any such Derivatives of the Model as a whole, provided Your use, reproduction, and Distribution of the Model otherwise complies with the conditions stated in this License.
5. Use-based restrictions. The restrictions set forth in Attachment A are considered Use-based restrictions. Therefore You cannot use the Model and the Derivatives of the Model for the specified restricted uses. You may use the Model subject to this License, including only for lawful purposes and in accordance with the License. Use may include creating any content with, finetuning, updating, running, training, evaluating and/or reparametrizing the Model. You shall require all of Your users who use the Model or a Derivative of the Model to comply with the terms of this paragraph (paragraph 5).
6. The Output You Generate. Except as set forth herein, Licensor claims no rights in the Output You generate using the Model. You are accountable for the Output you generate and its subsequent uses. No use of the output can contravene any provision as stated in the License.

Section IV: OTHER PROVISIONS

7. Updates and Runtime Restrictions. To the maximum extent permitted by law, Licensor reserves the right to restrict (remotely or otherwise) usage of the Model in violation of this License, update the Model through electronic means, or modify the Output of the Model based on updates. You shall undertake reasonable efforts to use the latest version of the Model.
8. Trademarks and related. Nothing in this License permits You to make use of Licensors’ trademarks, trade names, logos or to otherwise suggest endorsement or misrepresent the relationship between the parties; and any rights not expressly granted herein are reserved by the Licensors.
9. Disclaimer of Warranty. Unless required by applicable law or agreed to in writing, Licensor provides the Model and the Complementary Material (and each Contributor provides its Contributions) on an "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied, including, without limitation, any warranties or conditions of TITLE, NON-INFRINGEMENT, MERCHANTABILITY, or FITNESS FOR A PARTICULAR PURPOSE. You are solely responsible for determining the appropriateness of using or redistributing the Model, Derivatives of the Model, and the Complementary Material and assume any risks associated with Your exercise of permissions under this License.
10. Limitation of Liability. In no event and under no legal theory, whether in tort (including negligence), contract, or otherwise, unless required by applicable law (such as deliberate and grossly negligent acts) or agreed to in writing, shall any Contributor be liable to You for damages, including any direct, indirect, special, incidental, or consequential damages of any character arising as a result of this License or out of the use or inability to use the Model and the Complementary Material (including but not limited to damages for loss of goodwill, work stoppage, computer failure or malfunction, or any and all other commercial damages or losses), even if such Contributor has been advised of the possibility of such damages.
11. Accepting Warranty or Additional Liability. While redistributing the Model, Derivatives of the Model and the Complementary Material thereof, You may choose to offer, and charge a fee for, acceptance of support, warranty, indemnity, or other liability obligations and/or rights consistent with this License. However, in accepting such obligations, You may act only on Your own behalf and on Your sole responsibility, not on behalf of any other Contributor, and only if You agree to indemnify, defend, and hold each Contributor harmless for any liability incurred by, or claims asserted against, such Contributor by reason of your accepting any such warranty or additional liability.
12. If any provision of this License is held to be invalid, illegal or unenforceable, the remaining provisions shall be unaffected thereby and remain valid as if such provision had not been set forth herein.

END OF TERMS AND CONDITIONS

Attachment A

Use Restrictions

You agree not to use the Model or Derivatives of the Model:
- In any way that violates any applicable national, federal, state, local or international law or regulation;
- For the purpose of exploiting, harming or attempting to exploit or harm minors in any way;
- To generate or disseminate verifiably false information and/or content with the purpose of harming others;
- To generate or disseminate personal identifiable information that can be used to harm an individual;
- To defame, disparage or otherwise harass others;
- For fully automated decision making that adversely impacts an individual’s legal rights or otherwise creates or modifies a binding, enforceable obligation;
- For any use intended to or which has the effect of discriminating against or harming individuals or groups based on online or offline social behavior or known or predicted personal or personality characteristics;
- To exploit any of the vulnerabilities of a specific group of persons based on their age, social, physical or mental characteristics, in order to materially distort the behavior of a person pertaining to that group in a manner that causes or is likely to cause that person or another person physical or psychological harm;
- For any use intended to or which has the effect of discriminating against individuals or groups based on legally protected characteristics or categories;
- To provide medical advice and medical results interpretation;
- To generate or disseminate information for the purpose to be used for administration of justice, law enforcement, immigration or asylum processes, such as predicting an individual will commit fraud/crime commitment (e.g. by text profiling, drawing causal relationships between assertions made in documents, indiscriminate and arbitrarily-targeted use).

stable_diffusion/README.md

ADDED

|

@@ -0,0 +1,215 @@

# Stable Diffusion

*Stable Diffusion was made possible thanks to a collaboration with [Stability AI](https://stability.ai/) and [Runway](https://runwayml.com/) and builds upon our previous work:*

[**High-Resolution Image Synthesis with Latent Diffusion Models**](https://ommer-lab.com/research/latent-diffusion-models/)<br/>
[Robin Rombach](https://github.com/rromb)\*,
[Andreas Blattmann](https://github.com/ablattmann)\*,
[Dominik Lorenz](https://github.com/qp-qp),
[Patrick Esser](https://github.com/pesser),
[Björn Ommer](https://hci.iwr.uni-heidelberg.de/Staff/bommer)<br/>
_[CVPR '22 Oral](https://openaccess.thecvf.com/content/CVPR2022/html/Rombach_High-Resolution_Image_Synthesis_With_Latent_Diffusion_Models_CVPR_2022_paper.html) |
[GitHub](https://github.com/CompVis/latent-diffusion) | [arXiv](https://arxiv.org/abs/2112.10752) | [Project page](https://ommer-lab.com/research/latent-diffusion-models/)_

[Stable Diffusion](#stable-diffusion-v1) is a latent text-to-image diffusion
model.
Thanks to a generous compute donation from [Stability AI](https://stability.ai/) and support from [LAION](https://laion.ai/), we were able to train a Latent Diffusion Model on 512x512 images from a subset of the [LAION-5B](https://laion.ai/blog/laion-5b/) database.
Similar to Google's [Imagen](https://arxiv.org/abs/2205.11487),
this model uses a frozen CLIP ViT-L/14 text encoder to condition the model on text prompts.
With its 860M UNet and 123M text encoder, the model is relatively lightweight and runs on a GPU with at least 10GB VRAM.
See [this section](#stable-diffusion-v1) below and the [model card](https://huggingface.co/CompVis/stable-diffusion).
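
As a rough check on the "relatively lightweight" claim, the parameter counts above can be turned into weight memory. This is a back-of-the-envelope sketch that assumes 4 bytes per parameter in fp32 and 2 bytes in fp16, and counts only the UNet and the text encoder; the autoencoder, activations and framework overhead, which presumably account for much of the quoted 10GB, are deliberately left out.

```python
from __future__ import annotations

# Parameter counts as stated in this README (860M UNet, 123M text encoder).
# Assumption: the autoencoder and activation memory are NOT included here.
PARAMS = {"unet": 860_000_000, "text_encoder": 123_000_000}

# Assumed storage cost per parameter for each dtype.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2}


def weight_memory_gib(params: dict[str, int], dtype: str) -> float:
    """Return the memory needed just to hold the given parameters, in GiB."""
    total_bytes = sum(params.values()) * BYTES_PER_PARAM[dtype]
    return total_bytes / 2**30


if __name__ == "__main__":
    for dtype in ("fp32", "fp16"):
        print(f"{dtype}: {weight_memory_gib(PARAMS, dtype):.2f} GiB")
```

Under these assumptions the two components need roughly 3.66 GiB in fp32 and 1.83 GiB in fp16, well below the 10GB figure.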

## Requirements

A suitable [conda](https://conda.io/) environment named `ldm` can be created
and activated with:

```
conda env create -f environment.yaml
conda activate ldm
```

You can also update an existing [latent diffusion](https://github.com/CompVis/latent-diffusion) environment by running

```
conda install pytorch torchvision -c pytorch
pip install transformers==4.19.2 diffusers invisible-watermark
pip install -e .
```
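
Because the command above pins `transformers==4.19.2`, it can be worth confirming which version an existing environment actually resolves to. A minimal sketch using only the standard library's `importlib.metadata`; the `PINS` mapping and the `check_pins` helper are hypothetical names for illustration and are not part of this repository.

```python
from importlib.metadata import PackageNotFoundError, version

# Hypothetical mapping of package name -> exact version expected by the
# pip command above; extend it with any other pins you care about.
PINS = {"transformers": "4.19.2"}


def check_pins(pins: dict) -> dict:
    """Return {package: problem} for every pin that is missing or mismatched."""
    problems = {}
    for name, expected in pins.items():
        try:
            installed = version(name)
        except PackageNotFoundError:
            problems[name] = "not installed"
            continue
        if installed != expected:
            problems[name] = f"installed {installed}, expected {expected}"
    return problems


if __name__ == "__main__":
    issues = check_pins(PINS)
    for name, problem in issues.items():
        print(f"{name}: {problem}")
    if not issues:
        print("all pins satisfied")
```

An empty result means every listed package is installed at exactly the pinned version.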

## Stable Diffusion v1

Stable Diffusion v1 refers to a specific configuration of the model
architecture that uses a downsampling-factor 8 autoencoder with an 860M UNet
and CLIP ViT-L/14 text encoder for the diffusion model. The model was pretrained on 256x256 images and
then finetuned on 512x512 images.
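
To make the downsampling factor concrete: with a factor-8 autoencoder, each spatial side of the image shrinks by 8 in latent space, so the UNet works on a much smaller tensor than the raw pixels. A minimal sketch of that arithmetic; `latent_shape` and `compression_ratio` are hypothetical helpers, and the default of 4 latent channels is an assumption (it matches the commonly used v1 configuration but is not stated in this README).

```python
def latent_shape(height: int, width: int, factor: int = 8, channels: int = 4) -> tuple:
    """Return the (channels, height, width) latent shape for an RGB image.

    `factor` is the autoencoder's spatial downsampling factor (8 for SD v1);
    `channels` = 4 is an assumed latent channel count.
    """
    if height % factor or width % factor:
        raise ValueError(f"height and width must be divisible by {factor}")
    return (channels, height // factor, width // factor)


def compression_ratio(height: int, width: int, **kwargs) -> float:
    """Ratio of RGB pixel values to latent values for the given image size."""
    c, h, w = latent_shape(height, width, **kwargs)
    return (3 * height * width) / (c * h * w)


if __name__ == "__main__":
    for size in (256, 512):  # pretraining and finetuning resolutions
        print(size, latent_shape(size, size), compression_ratio(size, size))
```

Under these assumptions a 512x512 image becomes a 4x64x64 latent, 48 times fewer values than the 3x512x512 input, which illustrates why running diffusion in latent space is cheaper than in pixel space.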

*Note: Stable Diffusion v1 is a general text-to-image diffusion model and therefore mirrors biases and (mis-)conceptions that are present
in its training data.
Details on the training procedure and data, as well as the intended use of the model, can be found in the corresponding [model card](Stable_Diffusion_v1_Model_Card.md).*

The weights are available via [the CompVis organization at Hugging Face](https://huggingface.co/CompVis) under [a license which contains specific use-based restrictions to prevent misuse and harm as informed by the model card, but otherwise remains permissive](LICENSE). While commercial use is permitted under the terms of the license, **we do not recommend using the provided weights for services or products without additional safety mechanisms and considerations**, since there are [known limitations and biases](Stable_Diffusion_v1_Model_Card.md#limitations-and-bias) of the weights, and research on safe and ethical deployment of general text-to-image models is an ongoing effort. **The weights are research artifacts and should be treated as such.**

[The CreativeML OpenRAIL M license](LICENSE) is an [Open RAIL M license](https://www.licenses.ai/blog/2022/8/18/naming-convention-of-responsible-ai-licenses), adapted from the work that [BigScience](https://bigscience.huggingface.co/) and [the RAIL Initiative](https://www.licenses.ai/) are jointly carrying out in the area of responsible AI licensing. See also [the article about the BLOOM Open RAIL license](https://bigscience.huggingface.co/blog/the-bigscience-rail-license) on which our license is based.

### Weights

We currently provide the following checkpoints:

- `sd-v1-1.ckpt`: 237k steps at resolution `256x256` on [laion2B-en](https://huggingface.co/datasets/laion/laion2B-en).
  194k steps at resolution `512x512` on [laion-high-resolution](https://huggingface.co/datasets/laion/laion-high-resolution) (170M examples from LAION-5B with resolution `>= 1024x1024`).
- `sd-v1-2.ckpt`: Resumed from `sd-v1-1.ckpt`.
  515k steps at resolution `512x512` on [laion-aesthetics v2 5+](https://laion.ai/blog/laion-aesthetics/) (a subset of laion2B-en with estimated aesthetics score `> 5.0`, and additionally
  filtered to images with an original size `>= 512x512`, and an estimated watermark probability `< 0.5`. The watermark estimate is from the [LAION-5B](https://laion.ai/blog/laion-5b/) metadata, the aesthetics score is estimated using the [LAION-Aesthetics Predictor V2](https://github.com/christophschuhmann/improved-aesthetic-predictor)).
- `sd-v1-3.ckpt`: Resumed from `sd-v1-2.ckpt`. 195k steps at resolution `512x512` on "laion-aesthetics v2 5+" and 10\% dropping of the text-conditioning to improve [classifier-free guidance sampling](https://arxiv.org/abs/2207.12598).