Releasing the largest multilingual open pretraining dataset

Many have claimed that training large language models requires copyrighted data, making truly open AI development impossible. Today, Pleias is proving otherwise with the release of Common Corpus (part of the AI Alliance Open Trusted Data Initiative), the largest fully open multilingual dataset for training LLMs. It contains just over 2 trillion tokens (2,003,039,184,047) of permissibly licensed content with provenance information.

As developers respond to pressure from new regulations like the EU AI Act, Common Corpus goes beyond compliance by making our entire permissibly licensed dataset freely available on HuggingFace, with detailed documentation of every data source. We have taken extensive steps to ensure that the dataset is high-quality and curated to train powerful models. Through this release, we are demonstrating that there doesn’t have to be such a heavy trade-off between openness and performance.

Common Corpus is:

- Truly Open: contains only data that is permissively licensed and whose provenance is documented

- Multilingual: mostly English and French data, but with at least 1B tokens in each of over 30 languages

- Diverse: consisting of scientific articles, government and legal documents, code, and cultural heritage data, including books and newspapers

- Extensively Curated: spelling and formatting have been corrected in digitized texts, harmful and toxic content has been removed, and content with low educational value has also been removed

We Need More and Better Open Training Data

Common Corpus builds on a growing ecosystem of large, open datasets, such as Dolma, FineWeb, and RefinedWeb. The Common Pile, currently in preparation under the coordination of Eleuther, is built around the same principle of using permissible content in the English language and, unsurprisingly, there were many opportunities for collaboration and shared effort. But even together, these datasets do not provide enough training data for models much larger than a few billion parameters. So in order to expand the options for open model training, we still need more open data.

And data being open isn’t enough. Some open datasets raise issues of their own, particularly those derived from web-scraped text, which have powered many language models. Content origins are often impossible to trace, the data itself can be disproportionately toxic or low-quality, and websites are increasingly restricting access to their data.

There is also a mismatch between what open datasets contain and what people ask of LLMs. Based on an analysis of 1 million user interactions with ChatGPT, the plurality of user requests are for creative compositions, with academic composition and code generation making up smaller but significant portions of requests. By comparison, news and general information make up a relatively small share of requests. The open datasets that are available and being used to train LLMs do not reflect this distribution: they contain a lot of encyclopedic and textbook-like informational content. The kind of content we actually need, like creative writing, is usually tied up in copyright restrictions.

Introducing Common Corpus

Common Corpus tackles these challenges through five carefully curated collections:

- OpenCulture: our largest collection at 926,541,096,243 tokens, featuring public domain books, newspapers, and Wikisource content. We've developed innovative tools like OCRonos-Vintage to correct historical digitization errors, while implementing advanced toxicity filtering to ensure content meets modern ethical standards.

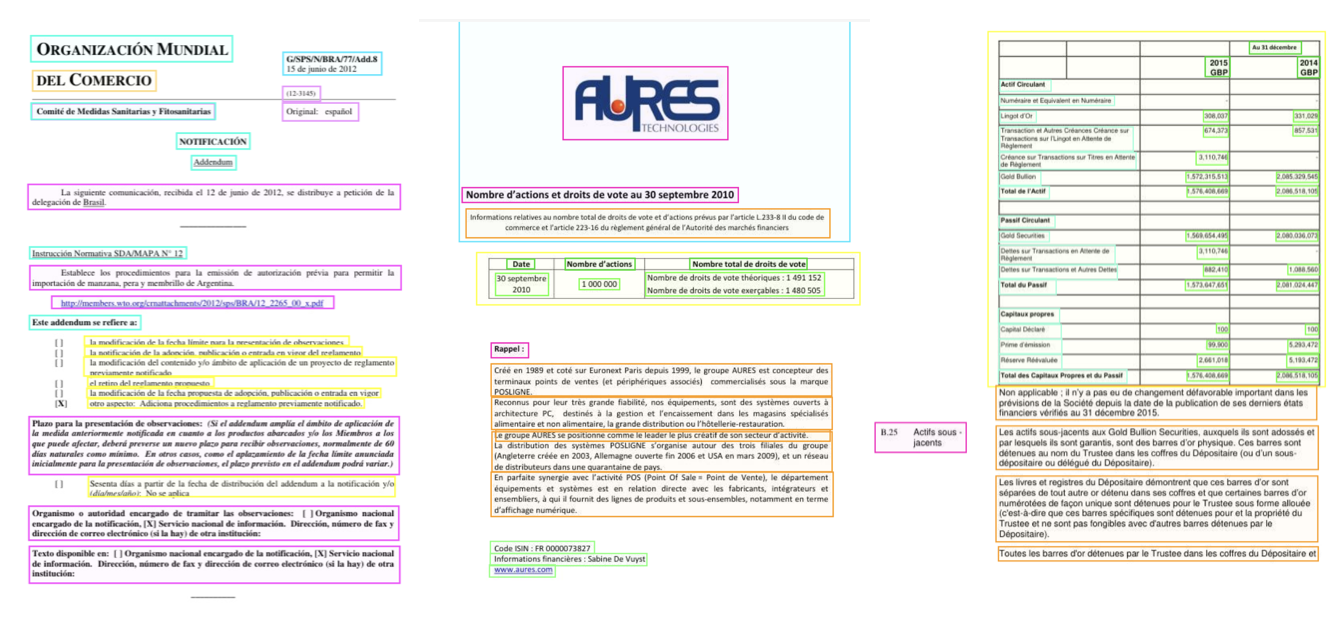

- OpenGovernment: 387,965,738,992 tokens of financial and legal documents, including Finance Commons (from sources like SEC and WTO) and Legal Commons (including Europarl and Caselaw Access Project), providing enterprise-grade training data from regulatory bodies and administrative sources.

- OpenSource: 334,658,896,533 tokens of high-quality open source code from GitHub, filtered using ArmoRM so that only the top 80% of submissions by quality rating are included.

- OpenScience: 221,798,136,564 tokens of academic content from OpenAlex and other open science repositories, processed using vision-language models to preserve crucial document structure and formatting.

- OpenWeb: 132,075,315,715 tokens from Wikipedia, YouTube Commons, and other websites available under permissible licenses, such as Stack Exchange.
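As a quick sanity check, the five collection sizes listed above add up exactly to the 2,003,039,184,047-token total quoted in the introduction. The short sketch below recomputes that sum and each collection's share of the corpus; the variable names are ours and purely illustrative.

```python
# Token counts per collection, copied from the list above.
COLLECTION_TOKENS = {
    "OpenCulture": 926_541_096_243,
    "OpenGovernment": 387_965_738_992,
    "OpenSource": 334_658_896_533,
    "OpenScience": 221_798_136_564,
    "OpenWeb": 132_075_315_715,
}

# Total token count stated in the introduction of this post.
STATED_TOTAL = 2_003_039_184_047

# Sum the collections and compute each one's fraction of the whole.
total = sum(COLLECTION_TOKENS.values())
shares = {name: count / total for name, count in COLLECTION_TOKENS.items()}

print(f"sum of collections: {total:,}")
print(f"matches stated total: {total == STATED_TOTAL}")
for name, share in shares.items():
    print(f"{name:<15} {share:6.1%}")
```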

Beyond English

While English remains our largest language at 867,033,096,123 tokens, Common Corpus takes significant steps toward linguistic diversity in AI training data. We provide substantial coverage of French (266 billion tokens) and German (112 billion tokens). Additionally, we maintain broad linguistic coverage with over 1B tokens in more than 30 languages, including significant collections in Spanish, Italian, and Dutch. This linguistic diversity, combined with our substantial code corpus (18.8% of total data), helps democratize AI development beyond English-speaking regions. By providing high-quality, permissibly licensed data across many languages, we're working to ensure the economic benefits of language AI can be shared more equitably across linguistic communities.

| Language | Token Count |
|---|---|
| English | 808 B |
| French | 266 B |
| German | 112 B |
| Spanish | 46 B |
| Latin | 34 B |
| Dutch | 29 B |
| Italian | 24 B |
| Polish | 11 B |
| Greek | 11 B |
| Portuguese | 9 B |

Innovation for Data Processing and Data Quality

High-quality training data directly impacts model performance, but achieving this quality requires more than the standard deduplication and filtering used for web-scraped datasets. We've developed a suite of specialized tools and approaches, each tailored to the unique challenges of different data types.

For OpenCulture's historical texts, we tackled two major challenges. First, we created OCRonos-Vintage, a lightweight but powerful OCR correction model that fixes digitization errors at scale. Running efficiently on both CPU and GPU, this 124M-parameter model corrects spacing issues, replaces incorrect words, and repairs broken text structures. Second, we developed a specialized toxicity detection system for multilingual historical content, which identifies and removes harmful language about minoritized groups without excessively removing data. Our toxicity classifier and associated tools are publicly available on HuggingFace.

Academic content in PDFs required a different approach. Instead of simple text extraction, we employed vision-language models to preserve crucial document structure, maintaining the semantic relationship between headings, sections, and content.

For code quality, we integrated ArmoRM to evaluate complexity, style, and documentation, retaining only the code above a certain quality threshold.
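The thresholding step can be pictured as a simple rank-and-cut over per-file scores. The sketch below assumes every file already carries a numeric quality score (the values here are hard-coded and hypothetical, not real ArmoRM outputs) and keeps the top 80%, the proportion mentioned for OpenSource above. `keep_top_fraction` is an illustrative helper name, not a function from any released tool.

```python
import math


def keep_top_fraction(scored_files, fraction=0.8):
    """Return the highest-scoring `fraction` of (path, score) pairs, best first."""
    if not 0.0 < fraction <= 1.0:
        raise ValueError("fraction must be in (0, 1]")
    # Rank files from highest to lowest score.
    ranked = sorted(scored_files, key=lambda item: item[1], reverse=True)
    # Round up so that small inputs never lose every file.
    n_keep = math.ceil(len(ranked) * fraction)
    return ranked[:n_keep]


# Hypothetical scores standing in for a quality model's ratings.
raw_scores = [0.91, 0.15, 0.78, 0.64, 0.33, 0.88, 0.52, 0.07, 0.70, 0.49]
scored = [(f"file_{i}.py", score) for i, score in enumerate(raw_scores)]

kept = keep_top_fraction(scored, fraction=0.8)
print(f"kept {len(kept)} of {len(scored)} files")
```

Cutting by rank rather than by a fixed score means the retained share stays constant even if the score distribution shifts between repositories or languages; a fixed absolute threshold would be the alternative design.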

Privacy and GDPR compliance were also key considerations. We developed region-specific personally identifiable information (PII) detection systems that account for varying formats of sensitive information like phone numbers and addresses across different countries, ensuring uniform compliance throughout our multilingual dataset.
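To illustrate why PII detection has to be region-aware, here is a minimal sketch using regular expressions for phone numbers. The two patterns are deliberately simplified assumptions covering only common French and US formats; they are not the detection systems described above, and `redact_phones` is a hypothetical helper.

```python
import re

# Simplified, illustrative phone-number patterns keyed by region code.
# Real-world formats have many more variants than these cover.
PHONE_PATTERNS = {
    # e.g. "01 23 45 67 89" or "+33 1 23 45 67 89"
    "FR": re.compile(r"(?<!\d)(?:\+33[ .]?|0)[1-9](?:[ .]?\d{2}){4}(?!\d)"),
    # e.g. "(555) 123-4567" or "+1 555-123-4567"
    "US": re.compile(r"(?<!\d)(?:\+1[ .-]?)?\(?\d{3}\)?[ .-]?\d{3}[ .-]?\d{4}(?!\d)"),
}


def redact_phones(text, region):
    """Replace phone numbers matching the region's pattern with a placeholder."""
    pattern = PHONE_PATTERNS[region]
    return pattern.sub("[PHONE]", text)


print(redact_phones("Appelez le 01 23 45 67 89 demain.", "FR"))
print(redact_phones("Call (555) 123-4567 today.", "US"))
```

Applying the US pattern to the French sentence leaves the number untouched, which is the failure mode that a single global pattern would introduce.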

All our curation tools and processes are open-source, setting new standards for transparency in dataset development.

Use Common Corpus

Common Corpus is available to use: [https://huggingface.co/datasets/PleIAs/common_corpus](https://huggingface.co/datasets/PleIAs/common_corpus). A comprehensive technical report detailing our methodologies and data sources will accompany the release, ensuring full transparency and reproducibility. We will release the individual sub-corpora in the coming weeks for more fine-grained auditability and to expand uses.
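Given the size of the corpus, streaming is likely more practical than a full download. The sketch below uses the Hugging Face `datasets` library to stream records from the repository linked above and filter them on metadata. The split name `train` and the field names `language` and `token_count` are assumptions on our part; check the dataset card for the actual schema before relying on them.

```python
from itertools import islice

DATASET_ID = "PleIAs/common_corpus"  # repository from the link above


def keep_record(record, language="French", min_tokens=100):
    """Metadata filter; the `language` and `token_count` field names are assumed."""
    return (
        record.get("language") == language
        and (record.get("token_count") or 0) >= min_tokens
    )


def stream_sample(n=5, language="French", min_tokens=100):
    """Stream the corpus and return the first `n` records passing the filter."""
    # Imported lazily so `keep_record` can be used without the `datasets` package.
    from datasets import load_dataset

    # streaming=True iterates over remote shards instead of downloading everything.
    # The "train" split name is an assumption.
    stream = load_dataset(DATASET_ID, split="train", streaming=True)
    matches = (r for r in stream if keep_record(r, language, min_tokens))
    return list(islice(matches, n))
```

Calling `stream_sample(n=3)` requires `pip install datasets` and network access; it returns up to three matching records as dictionaries.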

The corpus was stored and processed with the generous support of the AI Alliance, Jean Zay (Eviden, Idris), Nvidia Inception program, Nebius AI and Tracto AI. It was built with the support and concerted efforts of the state start-up LANGU:IA (start-up d’Etat), supported by the French Ministry of Culture and DINUM, as part of the prefiguration of the service offering of the Alliance for Language technologies EDIC (ALT-EDIC). This dataset was also made in partnership with Wikimedia Enterprise for the Wikipedia part. The collection of the corpus was greatly facilitated by the insights, cooperation and support of the open science LLM community (Eleuther AI, Allen AI, HuggingFace…).