Gediminas Vasiliauskas

committed on

Commit

•

9a7007f

1

Parent(s):

934ea97

Add Microsoft Emoji LoRA, trained with AI-toolkit

This view is limited to 50 files because it contains too many changes.

- .gitattributes +2 -0

- README.md +74 -3

- config.yaml +73 -0

- flux_microsoft_emoji_lora_v1.safetensors +3 -0

- optimizer.pt +3 -0

- samples/1726077711901__000000000_0.jpg +0 -0

- samples/1726077716083__000000000_1.jpg +0 -0

- samples/1726077720264__000000000_2.jpg +0 -0

- samples/1726077724445__000000000_3.jpg +0 -0

- samples/1726077728629__000000000_4.jpg +0 -0

- samples/1726077732814__000000000_5.jpg +0 -0

- samples/1726077736999__000000000_6.jpg +0 -0

- samples/1726077741187__000000000_7.jpg +0 -0

- samples/1726077745378__000000000_8.jpg +0 -0

- samples/1726077749587__000000000_9.jpg +0 -0

- samples/1726078007980__000000250_0.jpg +0 -0

- samples/1726078012186__000000250_1.jpg +0 -0

- samples/1726078016389__000000250_2.jpg +0 -0

- samples/1726078020599__000000250_3.jpg +0 -0

- samples/1726078024806__000000250_4.jpg +0 -0

- samples/1726078029011__000000250_5.jpg +0 -0

- samples/1726078033217__000000250_6.jpg +0 -0

- samples/1726078037419__000000250_7.jpg +0 -0

- samples/1726078041628__000000250_8.jpg +0 -0

- samples/1726078045839__000000250_9.jpg +0 -0

- samples/1726078304706__000000500_0.jpg +0 -0

- samples/1726078308907__000000500_1.jpg +0 -0

- samples/1726078313111__000000500_2.jpg +0 -0

- samples/1726078317317__000000500_3.jpg +0 -0

- samples/1726078321523__000000500_4.jpg +0 -0

- samples/1726078325731__000000500_5.jpg +0 -0

- samples/1726078329937__000000500_6.jpg +0 -0

- samples/1726078334142__000000500_7.jpg +0 -0

- samples/1726078338352__000000500_8.jpg +0 -0

- samples/1726078342563__000000500_9.jpg +0 -0

- samples/1726078599453__000000750_0.jpg +0 -0

- samples/1726078603657__000000750_1.jpg +0 -0

- samples/1726078607861__000000750_2.jpg +0 -0

- samples/1726078612065__000000750_3.jpg +0 -0

- samples/1726078616269__000000750_4.jpg +0 -0

- samples/1726078620473__000000750_5.jpg +0 -0

- samples/1726078624678__000000750_6.jpg +0 -0

- samples/1726078628883__000000750_7.jpg +0 -0

- samples/1726078633093__000000750_8.jpg +0 -0

- samples/1726078637304__000000750_9.jpg +0 -0

- samples/1726078893536__000001000_0.jpg +0 -0

- samples/1726078897738__000001000_1.jpg +0 -0

- samples/1726078901940__000001000_2.jpg +0 -0

- samples/1726078906140__000001000_3.jpg +0 -0

- samples/1726078910339__000001000_4.jpg +0 -0
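
The sample paths listed above appear to follow a `<timestamp>__<step>_<index>.jpg` pattern: the middle field advances in increments of 250, matching `sample_every: 250` in `config.yaml`, and the last field runs from 0 to 9, matching the ten sample prompts. Reading the first field as a Unix timestamp in milliseconds is an assumption, as is the helper name `parse_sample_name`. A minimal sketch of splitting a path under that reading:

```python
import re
from datetime import datetime, timezone

# Assumed layout: <unix time in ms>__<zero-padded 9-digit step>_<prompt index>.jpg
SAMPLE_NAME = re.compile(r"^(?P<ts_ms>\d+)__(?P<step>\d{9})_(?P<idx>\d+)\.jpg$")


def parse_sample_name(path):
    """Split a sample path into (created_at, step, prompt_index)."""
    name = path.rsplit("/", 1)[-1]
    match = SAMPLE_NAME.match(name)
    if match is None:
        raise ValueError(f"unexpected sample name: {name}")
    # Interpreting the first field as milliseconds since the epoch is an assumption.
    created_at = datetime.fromtimestamp(int(match["ts_ms"]) / 1000, tz=timezone.utc)
    return created_at, int(match["step"]), int(match["idx"])


created_at, step, idx = parse_sample_name("samples/1726078007980__000000250_0.jpg")
print(created_at.isoformat(), step, idx)
```

Grouping the parsed tuples by `step` would make it easy to compare the same prompt across training progress.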

.gitattributes

CHANGED

|

@@ -33,3 +33,5 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text

|

|

| 33 |

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 34 |

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 35 |

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

| 33 |

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 34 |

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 35 |

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

| 36 |

+

*.safetensors filter=lfs diff=lfs merge=lfs -text

|

| 37 |

+

optimizer.pt filter=lfs diff=lfs merge=lfs -text

|
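
The two added `.gitattributes` lines route `*.safetensors` files and `optimizer.pt` through Git LFS. As a rough illustration of which files from this commit those two patterns cover, the sketch below matches basenames with Python's `fnmatch`. That is only an approximation of Git's own pattern rules, and the helper name `is_lfs_tracked` is hypothetical.

```python
from fnmatch import fnmatch

# The two patterns this commit adds to .gitattributes.
NEW_LFS_PATTERNS = ["*.safetensors", "optimizer.pt"]


def is_lfs_tracked(path, patterns=NEW_LFS_PATTERNS):
    """Approximate check: match the file's basename against each pattern."""
    basename = path.rsplit("/", 1)[-1]
    return any(fnmatch(basename, pattern) for pattern in patterns)


files = [
    "flux_microsoft_emoji_lora_v1.safetensors",
    "optimizer.pt",
    "config.yaml",
    "README.md",
]
tracked = [f for f in files if is_lfs_tracked(f)]
print(tracked)
```

Only the new patterns are modelled here; files matched by earlier lines of `.gitattributes` are outside the scope of this sketch.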

README.md

CHANGED

|

@@ -1,3 +1,74 @@

|

|

| 1 |

-

---

|

| 2 |

-

|

| 3 |

-

|

| 1 |

+

---

|

| 2 |

+

tags:

|

| 3 |

+

- text-to-image

|

| 4 |

+

- flux

|

| 5 |

+

- lora

|

| 6 |

+

- diffusers

|

| 7 |

+

- template:sd-lora

|

| 8 |

+

- ai-toolkit

|

| 9 |

+

widget:

|

| 10 |

+

- text: '[trigger] woman with red hair'

|

| 11 |

+

output:

|

| 12 |

+

url: samples/1726080981710__000002000_0.jpg

|

| 13 |

+

- text: '[trigger] a woman holding a coffee cup, in a beanie'

|

| 14 |

+

output:

|

| 15 |

+

url: samples/1726080985911__000002000_1.jpg

|

| 16 |

+

- text: '[trigger] a horse is a DJ at a night club'

|

| 17 |

+

output:

|

| 18 |

+

url: samples/1726080990112__000002000_2.jpg

|

| 19 |

+

- text: '[trigger] a man showing off his cool new t shirt at the beach'

|

| 20 |

+

output:

|

| 21 |

+

url: samples/1726080994314__000002000_3.jpg

|

| 22 |

+

- text: '[trigger] a bear building a log cabin'

|

| 23 |

+

output:

|

| 24 |

+

url: samples/1726080998517__000002000_4.jpg

|

| 25 |

+

- text: '[trigger] woman playing the guitar, on stage, singing a song'

|

| 26 |

+

output:

|

| 27 |

+

url: samples/1726081002722__000002000_5.jpg

|

| 28 |

+

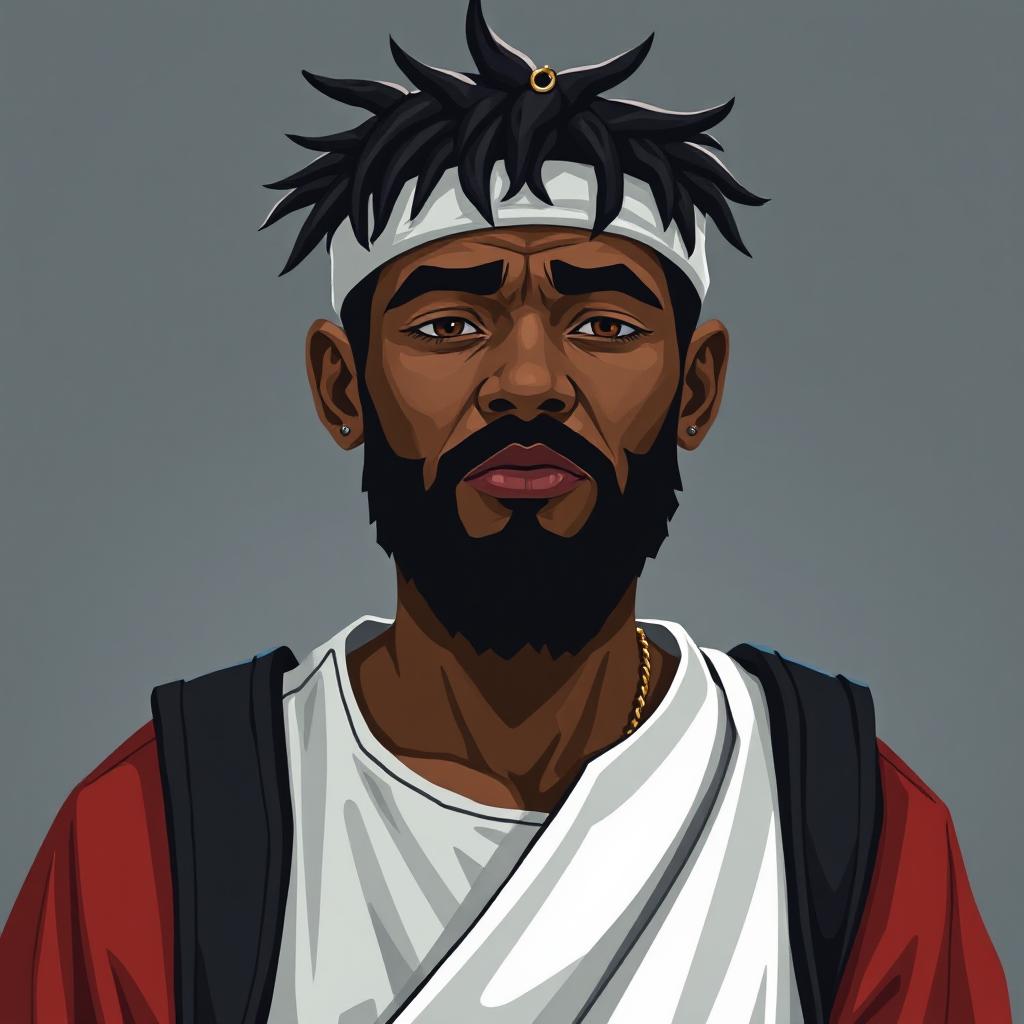

- text: '[trigger] hipster man with a beard'

|

| 29 |

+

output:

|

| 30 |

+

url: samples/1726081006926__000002000_6.jpg

|

| 31 |

+

- text: '[trigger] a man'

|

| 32 |

+

output:

|

| 33 |

+

url: samples/1726081011130__000002000_7.jpg

|

| 34 |

+

- text: '[trigger] a man holding a sign that says, ''this is a sign'''

|

| 35 |

+

output:

|

| 36 |

+

url: samples/1726081015337__000002000_8.jpg

|

| 37 |

+

- text: '[trigger] a bulldog with a shotgun, in a leather jacket with a motorcycle'

|

| 38 |

+

output:

|

| 39 |

+

url: samples/1726081019544__000002000_9.jpg

|

| 40 |

+

base_model: black-forest-labs/FLUX.1-schnell

|

| 41 |

+

instance_prompt: emojy

|

| 42 |

+

license: apache-2.0

|

| 43 |

+

|

| 44 |

+

|

| 45 |

+

---

|

| 46 |

+

|

| 47 |

+

# flux_microsoft_emoji_lora_v1

|

| 48 |

+

Model trained with [AI Toolkit by Ostris](https://github.com/ostris/ai-toolkit)

|

| 49 |

+

<Gallery />

|

| 50 |

+

|

| 51 |

+

## Trigger words

|

| 52 |

+

|

| 53 |

+

You should use `emojy` to trigger the image generation.

|

| 54 |

+

|

| 55 |

+

## Download model and use it with ComfyUI, AUTOMATIC1111, SD.Next, Invoke AI, etc.

|

| 56 |

+

|

| 57 |

+

Weights for this model are available in Safetensors format.

|

| 58 |

+

|

| 59 |

+

[Download](/Zedge/Flux-Schnell-Emoji-Weights/tree/main) them in the Files & versions tab.

|

| 60 |

+

|

| 61 |

+

## Use it with the [🧨 diffusers library](https://github.com/huggingface/diffusers)

|

| 62 |

+

|

| 63 |

+

```py

|

| 64 |

+

from diffusers import AutoPipelineForText2Image

|

| 65 |

+

import torch

|

| 66 |

+

|

| 67 |

+

pipeline = AutoPipelineForText2Image.from_pretrained('black-forest-labs/FLUX.1-schnell', torch_dtype=torch.bfloat16).to('cuda')

|

| 68 |

+

pipeline.load_lora_weights('Zedge/Flux-Schnell-Emoji-Weights', weight_name='flux_microsoft_emoji_lora_v1.safetensors')

|

| 69 |

+

image = pipeline('emojy woman with red hair').images[0]

|

| 70 |

+

image.save("my_image.png")

|

| 71 |

+

```

|

| 72 |

+

|

| 73 |

+

For more details, including weighting, merging and fusing LoRAs, check the [documentation on loading LoRAs in diffusers](https://huggingface.co/docs/diffusers/main/en/using-diffusers/loading_adapters)

|

| 74 |

+

|
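
The widget prompts in the model card carry a literal `[trigger]` placeholder, while the card names `emojy` as the trigger word. Assuming the placeholder is meant to be swapped for that word before a prompt reaches the pipeline, a small helper could do the substitution. The function name `fill_trigger` is hypothetical and not part of diffusers or AI Toolkit.

```python
TRIGGER_WORD = "emojy"  # taken from `instance_prompt` in the model card metadata


def fill_trigger(prompt, trigger_word=TRIGGER_WORD):
    """Replace the literal `[trigger]` placeholder with the trigger word."""
    return prompt.replace("[trigger]", trigger_word)


widget_prompts = [
    "[trigger] woman with red hair",
    "[trigger] a bear building a log cabin",
]
prompts = [fill_trigger(p) for p in widget_prompts]
print(prompts)
```

The resulting strings can then be passed to the pipeline in place of the raw widget text.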

config.yaml

ADDED

|

@@ -0,0 +1,73 @@

|

| 1 |

+

job: extension

|

| 2 |

+

config:

|

| 3 |

+

name: flux_microsoft_emoji_lora_v1

|

| 4 |

+

process:

|

| 5 |

+

- type: sd_trainer

|

| 6 |

+

training_folder: output

|

| 7 |

+

performance_log_every: 1000

|

| 8 |

+

device: cuda:0

|

| 9 |

+

trigger_word: emojy

|

| 10 |

+

network:

|

| 11 |

+

type: lora

|

| 12 |

+

linear: 16

|

| 13 |

+

linear_alpha: 16

|

| 14 |

+

save:

|

| 15 |

+

dtype: float16

|

| 16 |

+

save_every: 250

|

| 17 |

+

max_step_saves_to_keep: 4

|

| 18 |

+

push_to_hub: true

|

| 19 |

+

hf_repo_id: Zedge/Flux-Schnell-Emoji-Weights

|

| 20 |

+

hf_private: true

|

| 21 |

+

datasets:

|

| 22 |

+

- folder_path: ../../datasets/Microsoft-3D-Fluent

|

| 23 |

+

caption_ext: txt

|

| 24 |

+

caption_dropout_rate: 0.05

|

| 25 |

+

shuffle_tokens: false

|

| 26 |

+

cache_latents_to_disk: true

|

| 27 |

+

resolution:

|

| 28 |

+

- 512

|

| 29 |

+

- 768

|

| 30 |

+

- 1024

|

| 31 |

+

train:

|

| 32 |

+

batch_size: 1

|

| 33 |

+

steps: 2000

|

| 34 |

+

gradient_accumulation_steps: 1

|

| 35 |

+

train_unet: true

|

| 36 |

+

train_text_encoder: false

|

| 37 |

+

gradient_checkpointing: false

|

| 38 |

+

noise_scheduler: flowmatch

|

| 39 |

+

optimizer: adamw8bit

|

| 40 |

+

lr: 0.0001

|

| 41 |

+

ema_config:

|

| 42 |

+

use_ema: true

|

| 43 |

+

ema_decay: 0.99

|

| 44 |

+

dtype: bf16

|

| 45 |

+

model:

|

| 46 |

+

name_or_path: black-forest-labs/FLUX.1-schnell

|

| 47 |

+

assistant_lora_path: ostris/FLUX.1-schnell-training-adapter

|

| 48 |

+

is_flux: true

|

| 49 |

+

quantize: true

|

| 50 |

+

sample:

|

| 51 |

+

sampler: flowmatch

|

| 52 |

+

sample_every: 250

|

| 53 |

+

width: 1024

|

| 54 |

+

height: 1024

|

| 55 |

+

prompts:

|

| 56 |

+

- '[trigger] woman with red hair'

|

| 57 |

+

- '[trigger] a woman holding a coffee cup, in a beanie'

|

| 58 |

+

- '[trigger] a horse is a DJ at a night club'

|

| 59 |

+

- '[trigger] a man showing off his cool new t shirt at the beach'

|

| 60 |

+

- '[trigger] a bear building a log cabin'

|

| 61 |

+

- '[trigger] woman playing the guitar, on stage, singing a song'

|

| 62 |

+

- '[trigger] hipster man with a beard'

|

| 63 |

+

- '[trigger] a man'

|

| 64 |

+

- '[trigger] a man holding a sign that says, ''this is a sign'''

|

| 65 |

+

- '[trigger] a bulldog with a shotgun, in a leather jacket with a motorcycle'

|

| 66 |

+

neg: ''

|

| 67 |

+

seed: 42

|

| 68 |

+

walk_seed: true

|

| 69 |

+

guidance_scale: 1

|

| 70 |

+

sample_steps: 4

|

| 71 |

+

meta:

|

| 72 |

+

name: flux_microsoft_emoji_lora_v1

|

| 73 |

+

version: '1.0'

|
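
A few numbers in this config can be checked with simple arithmetic. The sketch below assumes sampling happens at step 0 and then every `sample_every` steps through the final step, which is consistent with the `000000000` file names in this commit and the `000002000` names referenced in the README. It also assumes the common LoRA convention that the update is scaled by `linear_alpha / linear`; neither assumption is confirmed by the diff itself.

```python
# Values copied from config.yaml in this commit.
TOTAL_STEPS = 2000
SAMPLE_EVERY = 250
NUM_PROMPTS = 10
LINEAR_RANK = 16
LINEAR_ALPHA = 16

# Assumption: one sampling pass at step 0, then one every SAMPLE_EVERY steps.
sample_steps = list(range(0, TOTAL_STEPS + 1, SAMPLE_EVERY))
total_sample_images = len(sample_steps) * NUM_PROMPTS

# Assumption: the usual LoRA convention of scaling the update by alpha / rank.
lora_scale = LINEAR_ALPHA / LINEAR_RANK

print(len(sample_steps), total_sample_images, lora_scale)
```

Under these assumptions the run produces more sample images than the 50-file limit of this view, which would explain why the file list is truncated.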

flux_microsoft_emoji_lora_v1.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:9acbc3b5b4be5af92af84bd5185f55bf9b2e15f3c7b5f37128adcc9e3bb9403b

|

| 3 |

+

size 171969432

|
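
The three added lines are a Git LFS pointer, not the weights themselves: they record the spec version, a SHA-256 object ID, and the size of the real file in bytes. A minimal sketch of reading those fields, with the pointer text copied from the diff above and a hypothetical helper name `parse_lfs_pointer`:

```python
POINTER_TEXT = """\
version https://git-lfs.github.com/spec/v1
oid sha256:9acbc3b5b4be5af92af84bd5185f55bf9b2e15f3c7b5f37128adcc9e3bb9403b
size 171969432
"""


def parse_lfs_pointer(text):
    """Parse the `key value` lines of a Git LFS pointer into a dict."""
    fields = dict(line.split(" ", 1) for line in text.splitlines() if line)
    hash_algo, digest = fields["oid"].split(":", 1)
    return {
        "version": fields["version"],
        "hash_algo": hash_algo,
        "digest": digest,
        "size_bytes": int(fields["size"]),
    }


pointer = parse_lfs_pointer(POINTER_TEXT)
size_mib = pointer["size_bytes"] / 2**20
print(pointer["hash_algo"], f"{size_mib:.1f} MiB")
```

The same helper applies to the `optimizer.pt` pointer, since both files use the same three-line layout.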

optimizer.pt

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:d31939ed302c71097bb374a94ecb6750fdf688c3e49755b7b649f05f988bf012

|

| 3 |

+

size 173272836

|

samples/1726077711901__000000000_0.jpg

ADDED

|

samples/1726077716083__000000000_1.jpg

ADDED

|

samples/1726077720264__000000000_2.jpg

ADDED

|

samples/1726077724445__000000000_3.jpg

ADDED

|

samples/1726077728629__000000000_4.jpg

ADDED

|

samples/1726077732814__000000000_5.jpg

ADDED

|

samples/1726077736999__000000000_6.jpg

ADDED

|

samples/1726077741187__000000000_7.jpg

ADDED

|

samples/1726077745378__000000000_8.jpg

ADDED

|

samples/1726077749587__000000000_9.jpg

ADDED

|

samples/1726078007980__000000250_0.jpg

ADDED

|

samples/1726078012186__000000250_1.jpg

ADDED

|

samples/1726078016389__000000250_2.jpg

ADDED

|

samples/1726078020599__000000250_3.jpg

ADDED

|

samples/1726078024806__000000250_4.jpg

ADDED

|

samples/1726078029011__000000250_5.jpg

ADDED

|

samples/1726078033217__000000250_6.jpg

ADDED

|

samples/1726078037419__000000250_7.jpg

ADDED

|

samples/1726078041628__000000250_8.jpg

ADDED

|

samples/1726078045839__000000250_9.jpg

ADDED

|

samples/1726078304706__000000500_0.jpg

ADDED

|

samples/1726078308907__000000500_1.jpg

ADDED

|

samples/1726078313111__000000500_2.jpg

ADDED

|

samples/1726078317317__000000500_3.jpg

ADDED

|

samples/1726078321523__000000500_4.jpg

ADDED

|

samples/1726078325731__000000500_5.jpg

ADDED

|

samples/1726078329937__000000500_6.jpg

ADDED

|

samples/1726078334142__000000500_7.jpg

ADDED

|

samples/1726078338352__000000500_8.jpg

ADDED

|

samples/1726078342563__000000500_9.jpg

ADDED

|

samples/1726078599453__000000750_0.jpg

ADDED

|

samples/1726078603657__000000750_1.jpg

ADDED

|

samples/1726078607861__000000750_2.jpg

ADDED

|

samples/1726078612065__000000750_3.jpg

ADDED

|

samples/1726078616269__000000750_4.jpg

ADDED

|

samples/1726078620473__000000750_5.jpg

ADDED

|

samples/1726078624678__000000750_6.jpg

ADDED

|

samples/1726078628883__000000750_7.jpg

ADDED

|

samples/1726078633093__000000750_8.jpg

ADDED

|

samples/1726078637304__000000750_9.jpg

ADDED

|

samples/1726078893536__000001000_0.jpg

ADDED

|

samples/1726078897738__000001000_1.jpg

ADDED

|

samples/1726078901940__000001000_2.jpg

ADDED

|

samples/1726078906140__000001000_3.jpg

ADDED

|

samples/1726078910339__000001000_4.jpg

ADDED

|