Upload new k-quant GGML quantised models.

README.md

CHANGED

@@ -1,11 +1,8 @@

---
language:
- en
tags:
- causal-lm
- llama
inference: false
---

<!-- header start -->

<div style="width: 100%;">

<img src="https://i.imgur.com/EBdldam.jpg" alt="TheBlokeAI" style="width: 100%; min-width: 400px; display: block; margin: auto;">

@@ -19,48 +16,87 @@ inference: false

</div>

</div>

<!-- header end -->

# Wizard-Vicuna-13B-GGML

## Repositories available

* [

* [

* [

## Provided files

| Name | Quant method | Bits | Size | RAM required | Use case |
| ---- | ---- | ---- | ---- | ---- | ----- |

## How to run in `llama.cpp`

I use the following command line; adjust for your tastes and needs:

```
./main -t
```

Change `-

Further instructions here: [text-generation-webui/docs/llama.cpp-models.md](https://github.com/oobabooga/text-generation-webui/blob/main/docs/llama.cpp-models.md).

@@ -84,76 +120,14 @@ Donaters will get priority support on any and all AI/LLM/model questions and req

* Patreon: https://patreon.com/TheBlokeAI

* Ko-Fi: https://ko-fi.com/TheBlokeAI

**

Thank you to all my generous patrons and donators!

<!-- footer end -->

# Original WizardVicuna-13B model card

GitHub page: https://github.com/melodysdreamj/WizardVicunaLM

# WizardVicunaLM

### Wizard's dataset + ChatGPT's conversation extension + Vicuna's tuning method

I am a big fan of the ideas behind WizardLM and VicunaLM. I particularly like the idea of WizardLM handling the dataset itself more deeply and broadly, as well as VicunaLM overcoming the limitations of single-turn conversations by introducing multi-round conversations. As a result, I combined these two ideas to create WizardVicunaLM. This project is highly experimental and designed for proof of concept, not for actual usage.

## Benchmark

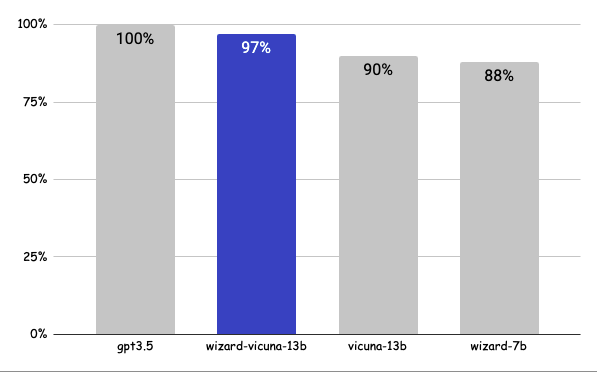

### Approximately 7% performance improvement over VicunaLM

These results do not come from rigorous tests: I asked a few questions and had GPT-4 score the answers. The models compared were ChatGPT 3.5, WizardVicunaLM, VicunaLM, and WizardLM, in the order of the table columns.

| | gpt3.5 | wizard-vicuna-13b | vicuna-13b | wizard-7b | link |
|-----|--------|-------------------|------------|-----------|----------|
| Q1 | 95 | 90 | 85 | 88 | [link](https://sharegpt.com/c/YdhIlby) |
| Q2 | 95 | 97 | 90 | 89 | [link](https://sharegpt.com/c/YOqOV4g) |
| Q3 | 85 | 90 | 80 | 65 | [link](https://sharegpt.com/c/uDmrcL9) |
| Q4 | 90 | 85 | 80 | 75 | [link](https://sharegpt.com/c/XBbK5MZ) |
| Q5 | 90 | 85 | 80 | 75 | [link](https://sharegpt.com/c/AQ5tgQX) |
| Q6 | 92 | 85 | 87 | 88 | [link](https://sharegpt.com/c/eVYwfIr) |
| Q7 | 95 | 90 | 85 | 92 | [link](https://sharegpt.com/c/Kqyeub4) |
| Q8 | 90 | 85 | 75 | 70 | [link](https://sharegpt.com/c/M0gIjMF) |
| Q9 | 92 | 85 | 70 | 60 | [link](https://sharegpt.com/c/fOvMtQt) |
| Q10 | 90 | 80 | 75 | 85 | [link](https://sharegpt.com/c/YYiCaUz) |
| Q11 | 90 | 85 | 75 | 65 | [link](https://sharegpt.com/c/HMkKKGU) |
| Q12 | 85 | 90 | 80 | 88 | [link](https://sharegpt.com/c/XbW6jgB) |
| Q13 | 90 | 95 | 88 | 85 | [link](https://sharegpt.com/c/JXZb7y6) |
| Q14 | 94 | 89 | 90 | 91 | [link](https://sharegpt.com/c/cTXH4IS) |
| Q15 | 90 | 85 | 88 | 87 | [link](https://sharegpt.com/c/GZiM0Yt) |
| Avg. | 91 | 88 | 82 | 80 | |
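
The bottom row of the table appears to be each model's mean score over the 15 questions, rounded to the nearest integer. The "approximately 7%" headline is consistent with comparing the WizardVicunaLM and VicunaLM means. The minimal Python sketch below recomputes both figures from the scores transcribed from the table. It is a re-derivation for illustration, not the author's evaluation script.

```python
# Per-question scores (Q1..Q15), transcribed from the benchmark table above.
SCORES = {
    "gpt3.5":            [95, 95, 85, 90, 90, 92, 95, 90, 92, 90, 90, 85, 90, 94, 90],
    "wizard-vicuna-13b": [90, 97, 90, 85, 85, 85, 90, 85, 85, 80, 85, 90, 95, 89, 85],
    "vicuna-13b":        [85, 90, 80, 80, 80, 87, 85, 75, 70, 75, 75, 80, 88, 90, 88],
    "wizard-7b":         [88, 89, 65, 75, 75, 88, 92, 70, 60, 85, 65, 88, 85, 91, 87],
}


def mean(values):
    """Arithmetic mean of a non-empty list of numbers."""
    return sum(values) / len(values)


def relative_improvement(new, baseline):
    """Relative change of `new` over `baseline`, as a percentage."""
    return (new - baseline) / baseline * 100.0


if __name__ == "__main__":
    means = {model: mean(scores) for model, scores in SCORES.items()}
    for model, m in means.items():
        print(f"{model:>18}: {m:.2f} (rounded: {round(m)})")
    gain = relative_improvement(means["wizard-vicuna-13b"], means["vicuna-13b"])
    print(f"wizard-vicuna-13b vs vicuna-13b: +{gain:.1f}%")
```

Comparing the unrounded means gives an improvement of about 7.2%. Comparing the rounded means (88 vs 82) gives about 7.3%.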

## Principle

We adopted the approach of WizardLM, which is to extend a single problem in more depth. However, instead of using individual instructions, we expanded each problem using Vicuna's conversation format and applied Vicuna's fine-tuning techniques.

You can see an example of how we turned a single command into a rich conversation [here](https://sharegpt.com/c/6cmxqq0).

After creating the training data, I trained the model following the Vicuna v1.1 [training method](https://github.com/lm-sys/FastChat/blob/main/scripts/train_vicuna_13b.sh).

## Detailed Method

First, starting from the 7K conversations created by WizardLM, we explore and expand various areas within the same topic. Unlike WizardLM, however, we use a continuous conversation format instead of the instruction format: each conversation starts with a WizardLM instruction and is then expanded into various areas within that one conversation using ChatGPT 3.5.

After that, we fine-tuned the model on this data using Vicuna's fine-tuning format.
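
The sketch below illustrates what one multi-turn training record might look like. It builds a conversation in the ShareGPT-style JSON layout that FastChat's Vicuna training scripts consume: an `id` plus a `conversations` list of turns, each with a `from` role (`human` or `gpt`) and a `value` text. The field names follow FastChat's data format as I understand it. The helper `to_conversation_record` and the example turns are hypothetical, and this is not the author's data-generation code.

```python
import json


def to_conversation_record(record_id, turns):
    """Build a ShareGPT-style record from (question, answer) pairs.

    The first question would be the original WizardLM instruction; later
    questions are the follow-ups that expand it into a multi-round conversation.
    """
    conversations = []
    for question, answer in turns:
        conversations.append({"from": "human", "value": question})
        conversations.append({"from": "gpt", "value": answer})
    return {"id": record_id, "conversations": conversations}


if __name__ == "__main__":
    # Hypothetical example: one instruction expanded into a two-round conversation.
    record = to_conversation_record(
        "example-0",
        [
            ("Explain how a hash table works.", "A hash table maps keys to buckets..."),
            ("How are collisions handled?", "Common strategies are chaining and open addressing..."),
        ],
    )
    print(json.dumps(record, indent=2))
```

Keeping every follow-up in the same record, rather than emitting one record per instruction, is what distinguishes this conversation format from a single-turn instruction dataset.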

## Training Process

Trained with 8 A100 GPUs for 35 hours.

## Weights

You can find the [dataset](https://huggingface.co/datasets/junelee/wizard_vicuna_70k) we used for training and the [13b model](https://huggingface.co/junelee/wizard-vicuna-13b) on Hugging Face.

## Conclusion

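If we extend the conversations using GPT-4 32K, we can expect a dramatic improvement. Its 32K-token context is 8x the roughly 4K tokens available to ChatGPT 3.5, so it can generate conversations that are 8x longer as well as more accurate and richer.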

The model is subject to the LLaMA model license, and the dataset is subject to OpenAI's terms because it was generated with ChatGPT. Everything else is free.

---
inference: false
license: other
---

<!-- header start -->

<div style="width: 100%;">

<img src="https://i.imgur.com/EBdldam.jpg" alt="TheBlokeAI" style="width: 100%; min-width: 400px; display: block; margin: auto;">

</div>

</div>

<!-- header end -->

# June Lee's Wizard Vicuna 13B GGML

These files are GGML format model files for [June Lee's Wizard Vicuna 13B](https://huggingface.co/junelee/wizard-vicuna-13b).

GGML files are for CPU + GPU inference using [llama.cpp](https://github.com/ggerganov/llama.cpp) and libraries and UIs which support this format, such as:

* [text-generation-webui](https://github.com/oobabooga/text-generation-webui)
* [KoboldCpp](https://github.com/LostRuins/koboldcpp)
* [ParisNeo/GPT4All-UI](https://github.com/ParisNeo/gpt4all-ui)
* [llama-cpp-python](https://github.com/abetlen/llama-cpp-python)
* [ctransformers](https://github.com/marella/ctransformers)

## Repositories available

* [4-bit GPTQ models for GPU inference](https://huggingface.co/TheBloke/wizard-vicuna-13B-GPTQ)
* [2, 3, 4, 5, 6 and 8-bit GGML models for CPU+GPU inference](https://huggingface.co/TheBloke/wizard-vicuna-13B-GGML)
* [Unquantised fp16 model in PyTorch format, for GPU inference and for further conversions](https://huggingface.co/TheBloke/wizard-vicuna-13B-HF)

<!-- compatibility_ggml start -->

## Compatibility

### Original llama.cpp quant methods: `q4_0, q4_1, q5_0, q5_1, q8_0`

I have quantised these files with the 'original' quantisation methods using an older version of llama.cpp, so that they remain compatible with llama.cpp as of May 19th, commit `2d5db48`.

They should be compatible with all current UIs and libraries that use llama.cpp, such as those listed at the top of this README.

### New k-quant methods: `q2_K, q3_K_S, q3_K_M, q3_K_L, q4_K_S, q4_K_M, q5_K_S, q5_K_M, q6_K`

These new quantisation methods are only compatible with llama.cpp as of June 6th, commit `2d43387`.

They are NOT yet compatible with KoboldCpp, text-generation-webui, and other UIs and libraries. Support is expected to come over the next few days.
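
If you manage several of these files with scripts, the two compatibility rules above can be encoded as a lookup. The sketch below is a hypothetical helper. The function names and the filename pattern are assumptions based on the file names in the Provided files table, and it only reports the commit this README cites; it does not inspect the file itself.

```python
import re

# Quant methods as they appear in this README's file names.
ORIGINAL_METHODS = {"q4_0", "q4_1", "q5_0", "q5_1", "q8_0"}
K_QUANT_METHODS = {"q2_K", "q3_K_S", "q3_K_M", "q3_K_L",
                   "q4_K_S", "q4_K_M", "q5_K_S", "q5_K_M", "q6_K"}

# llama.cpp commits cited in the Compatibility section.
REQUIRED_COMMIT = {
    "original": "2d5db48 (May 19th)",
    "k-quant": "2d43387 (June 6th)",
}


def quant_method(filename):
    """Extract e.g. 'q4_K_M' from 'wizard-vicuna-13B.ggmlv3.q4_K_M.bin'."""
    match = re.search(r"\.ggmlv3\.(q\d_\w+)\.bin$", filename)
    if match is None:
        raise ValueError(f"unrecognised file name: {filename}")
    return match.group(1)


def required_llama_cpp(filename):
    """Return the llama.cpp commit this README cites for the file's quant method."""
    method = quant_method(filename)
    if method in K_QUANT_METHODS:
        return REQUIRED_COMMIT["k-quant"]
    if method in ORIGINAL_METHODS:
        return REQUIRED_COMMIT["original"]
    raise ValueError(f"unknown quant method: {method}")


if __name__ == "__main__":
    for name in ["wizard-vicuna-13B.ggmlv3.q5_0.bin", "wizard-vicuna-13B.ggmlv3.q4_K_M.bin"]:
        print(name, "->", required_llama_cpp(name))
```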

## Explanation of the new k-quant methods

The new methods available are:

* GGML_TYPE_Q2_K - "type-1" 2-bit quantization in super-blocks containing 16 blocks, each block having 16 weights. Block scales and mins are quantized with 4 bits. This ends up effectively using 2.5625 bits per weight (bpw).
* GGML_TYPE_Q3_K - "type-0" 3-bit quantization in super-blocks containing 16 blocks, each block having 16 weights. Scales are quantized with 6 bits. This ends up using 3.4375 bpw.
* GGML_TYPE_Q4_K - "type-1" 4-bit quantization in super-blocks containing 8 blocks, each block having 32 weights. Scales and mins are quantized with 6 bits. This ends up using 4.5 bpw.
* GGML_TYPE_Q5_K - "type-1" 5-bit quantization. Same super-block structure as GGML_TYPE_Q4_K, resulting in 5.5 bpw.
* GGML_TYPE_Q6_K - "type-0" 6-bit quantization. Super-blocks with 16 blocks, each block having 16 weights. Scales are quantized with 8 bits. This ends up using 6.5625 bpw.
* GGML_TYPE_Q8_K - "type-0" 8-bit quantization. Only used for quantizing intermediate results. The difference from the existing Q8_0 is that the block size is 256. All 2-6 bit dot products are implemented for this quantization type.
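
The bpw figures above can be checked with a little arithmetic over one 256-weight super-block. "Type-0" formats reconstruct a weight as `w = d * q`, and "type-1" formats as `w = d * q + m`. The minimal sketch below adds up three components:

* the packed quantized weights;
* the per-block scales, plus per-block mins for type-1;
* the fp16 super-block values: one `d` for type-0, and a `d` plus a `dmin` for type-1.

This is a back-of-envelope accounting under those assumptions, not a description of llama.cpp's code. With it, Q3_K through Q6_K match the stated values exactly. Q2_K comes out at 2.625 bpw; the 2.5625 quoted above corresponds to counting only one fp16 super-block value.

```python
from fractions import Fraction

SUPER_BLOCK = 256  # weights per k-quant super-block
FP16_BITS = 16


def k_quant_bpw(weight_bits, n_blocks, scale_bits, type1):
    """Bits per weight for one k-quant super-block.

    weight_bits: bits per quantized weight
    n_blocks:    blocks per super-block, each with its own scale (and min if type-1)
    scale_bits:  bits used for each block scale (and each block min)
    type1:       True for "type-1" (w = d*q + m), False for "type-0" (w = d*q)
    """
    per_block = scale_bits * (2 if type1 else 1)    # block scale, plus block min for type-1
    super_values = FP16_BITS * (2 if type1 else 1)  # fp16 d, plus fp16 dmin for type-1
    total = weight_bits * SUPER_BLOCK + n_blocks * per_block + super_values
    return Fraction(total, SUPER_BLOCK)


FORMATS = {
    # name:  (weight_bits, n_blocks, scale_bits, type1)
    "Q2_K": (2, 16, 4, True),
    "Q3_K": (3, 16, 6, False),
    "Q4_K": (4, 8, 6, True),
    "Q5_K": (5, 8, 6, True),
    "Q6_K": (6, 16, 8, False),
}

if __name__ == "__main__":
    for name, params in FORMATS.items():
        print(f"{name}: {float(k_quant_bpw(*params))} bpw")
```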

Refer to the Provided Files table below to see what files use which methods, and how.

<!-- compatibility_ggml end -->

## Provided files

| Name | Quant method | Bits | Size | Max RAM required | Use case |
| ---- | ---- | ---- | ---- | ---- | ----- |
| wizard-vicuna-13B.ggmlv3.q2_K.bin | q2_K | 2 | 5.43 GB | 7.93 GB | New k-quant method. Uses GGML_TYPE_Q4_K for the attention.wv and feed_forward.w2 tensors, GGML_TYPE_Q2_K for the other tensors. |
| wizard-vicuna-13B.ggmlv3.q3_K_L.bin | q3_K_L | 3 | 6.87 GB | 9.37 GB | New k-quant method. Uses GGML_TYPE_Q5_K for the attention.wv, attention.wo, and feed_forward.w2 tensors, else GGML_TYPE_Q3_K |
| wizard-vicuna-13B.ggmlv3.q3_K_M.bin | q3_K_M | 3 | 6.25 GB | 8.75 GB | New k-quant method. Uses GGML_TYPE_Q4_K for the attention.wv, attention.wo, and feed_forward.w2 tensors, else GGML_TYPE_Q3_K |
| wizard-vicuna-13B.ggmlv3.q3_K_S.bin | q3_K_S | 3 | 5.59 GB | 8.09 GB | New k-quant method. Uses GGML_TYPE_Q3_K for all tensors |
| wizard-vicuna-13B.ggmlv3.q4_0.bin | q4_0 | 4 | 7.32 GB | 9.82 GB | Original llama.cpp quant method, 4-bit. |
| wizard-vicuna-13B.ggmlv3.q4_1.bin | q4_1 | 4 | 8.14 GB | 10.64 GB | Original llama.cpp quant method, 4-bit. Higher accuracy than q4_0 but not as high as q5_0. However, it has quicker inference than the q5 models. |
| wizard-vicuna-13B.ggmlv3.q4_K_M.bin | q4_K_M | 4 | 7.82 GB | 10.32 GB | New k-quant method. Uses GGML_TYPE_Q6_K for half of the attention.wv and feed_forward.w2 tensors, else GGML_TYPE_Q4_K |
| wizard-vicuna-13B.ggmlv3.q4_K_S.bin | q4_K_S | 4 | 7.32 GB | 9.82 GB | New k-quant method. Uses GGML_TYPE_Q4_K for all tensors |
| wizard-vicuna-13B.ggmlv3.q5_0.bin | q5_0 | 5 | 8.95 GB | 11.45 GB | Original llama.cpp quant method, 5-bit. Higher accuracy, higher resource usage and slower inference. |
| wizard-vicuna-13B.ggmlv3.q5_1.bin | q5_1 | 5 | 9.76 GB | 12.26 GB | Original llama.cpp quant method, 5-bit. Even higher accuracy, but higher resource usage and slower inference. |
| wizard-vicuna-13B.ggmlv3.q5_K_M.bin | q5_K_M | 5 | 9.21 GB | 11.71 GB | New k-quant method. Uses GGML_TYPE_Q6_K for half of the attention.wv and feed_forward.w2 tensors, else GGML_TYPE_Q5_K |
| wizard-vicuna-13B.ggmlv3.q5_K_S.bin | q5_K_S | 5 | 8.95 GB | 11.45 GB | New k-quant method. Uses GGML_TYPE_Q5_K for all tensors |
| wizard-vicuna-13B.ggmlv3.q6_K.bin | q6_K | 6 | 10.68 GB | 13.18 GB | New k-quant method. Uses GGML_TYPE_Q6_K (6-bit quantization) for all tensors |
| wizard-vicuna-13B.ggmlv3.q8_0.bin | q8_0 | 8 | 13.83 GB | 16.33 GB | Original llama.cpp quant method, 8-bit. Almost indistinguishable from float16. High resource use and slow. Not recommended for most users. |

**Note**: the above RAM figures assume no GPU offloading. If layers are offloaded to the GPU, this will reduce RAM usage and use VRAM instead.
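
As a rough sanity check on the Size column, a file whose tensors all use one quant type should be close to parameter count × bpw / 8 bytes. In the table, every Max RAM figure is also the file size plus 2.50 GB. The sketch below relies on the following assumptions:

* LLaMA-13B's published dimensions: hidden size 5120, 40 layers, FFN size 13824, a 32,000-token vocabulary, and untied input and output embeddings.
* Nominal bpw values: 4.5, 5.0, 5.5, 6.0 and 8.5 for q4_0, q4_1, q5_0, q5_1 and q8_0, which use 32-weight blocks with fp16 scale (and min) values, and the k-quant figures listed above.

The estimate ignores the file header, the vocabulary and higher-precision norm weights. The helper `pick_largest_fitting` is hypothetical and simply applies the observed 2.50 GB offset.

```python
# LLaMA-13B dimensions (assumed from the published architecture).
N_EMBD, N_LAYER, N_FF, N_VOCAB = 5120, 40, 13824, 32000


def llama_param_count(n_embd=N_EMBD, n_layer=N_LAYER, n_ff=N_FF, n_vocab=N_VOCAB):
    """Parameter count of a LLaMA-style model with untied input/output embeddings."""
    embeddings = 2 * n_vocab * n_embd  # token embeddings + output projection
    attention = 4 * n_embd * n_embd    # wq, wk, wv, wo
    feed_forward = 3 * n_embd * n_ff   # w1, w2, w3
    norms = 2 * n_embd                 # attention_norm + ffn_norm
    return embeddings + n_layer * (attention + feed_forward + norms) + n_embd  # + final norm


# Nominal bits per weight for the files in the table that use a single quant type.
BPW = {"q3_K_S": 3.4375, "q4_0": 4.5, "q4_K_S": 4.5, "q4_1": 5.0,
       "q5_0": 5.5, "q5_K_S": 5.5, "q5_1": 6.0, "q6_K": 6.5625, "q8_0": 8.5}

# Sizes in GB, copied from the Provided files table.
TABLE_SIZE_GB = {"q3_K_S": 5.59, "q4_0": 7.32, "q4_K_S": 7.32, "q4_1": 8.14,
                 "q5_0": 8.95, "q5_K_S": 8.95, "q5_1": 9.76, "q6_K": 10.68, "q8_0": 13.83}

RAM_OVERHEAD_GB = 2.50  # every Max RAM figure in the table is Size + 2.50 GB


def estimated_size_gb(method, n_params=None):
    """Rough file size in GB (1e9 bytes); ignores header, vocab and f32 norm weights."""
    n = llama_param_count() if n_params is None else n_params
    return n * BPW[method] / 8 / 1e9


def pick_largest_fitting(ram_gb):
    """Hypothetical helper: largest listed single-type file whose Max RAM fits in ram_gb."""
    fitting = [m for m, size in TABLE_SIZE_GB.items() if size + RAM_OVERHEAD_GB <= ram_gb]
    return max(fitting, key=TABLE_SIZE_GB.get) if fitting else None


if __name__ == "__main__":
    print(f"LLaMA-13B parameters: {llama_param_count():,}")
    for method, size in TABLE_SIZE_GB.items():
        print(f"{method:>7}: estimated {estimated_size_gb(method):.2f} GB, table {size:.2f} GB")
    print("Largest single-type file for 12 GB of RAM:", pick_largest_fitting(12.0))
```

The mixed files (q2_K, q3_K_M/L, q4_K_M, q5_K_M) are left out because their effective bpw depends on which tensors get the higher-precision type.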

## How to run in `llama.cpp`

I use the following command line; adjust for your tastes and needs:

```
./main -t 10 -ngl 32 -m wizard-vicuna-13B.ggmlv3.q5_0.bin --color -c 2048 --temp 0.7 --repeat_penalty 1.1 -n -1 -p "### Instruction: Write a story about llamas\n### Response:"
```

Change `-t 10` to the number of physical CPU cores you have. For example, if your system has 8 cores/16 threads, use `-t 8`.

Change `-ngl 32` to the number of layers to offload to GPU. Remove it if you don't have GPU acceleration.

If you want to have a chat-style conversation, replace the `-p <PROMPT>` argument with `-i -ins`.
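
If you launch `./main` from a script, you can apply the three adjustments above (thread count, GPU layers, chat mode) programmatically. The sketch below is a hypothetical helper, `build_main_args`, that only assembles the argument list used in the command above. It uses only the flags that appear in this README and does not check what your llama.cpp build supports.

```python
import shlex


def build_main_args(model, threads, gpu_layers=None, prompt=None, chat=False,
                    ctx=2048, temp=0.7, repeat_penalty=1.1):
    """Assemble a llama.cpp ./main command line like the one shown above.

    threads:    number of physical CPU cores (-t)
    gpu_layers: layers to offload to the GPU (-ngl); None omits the flag
    prompt:     prompt text (-p); ignored when chat=True
    chat:       use interactive instruct mode (-i -ins) instead of -p
    """
    args = ["./main", "-t", str(threads)]
    if gpu_layers is not None:
        args += ["-ngl", str(gpu_layers)]
    args += ["-m", model, "--color", "-c", str(ctx), "--temp", str(temp),
             "--repeat_penalty", str(repeat_penalty), "-n", "-1"]
    if chat:
        args += ["-i", "-ins"]
    elif prompt is not None:
        args += ["-p", prompt]
    return args


if __name__ == "__main__":
    # Example: 8 physical cores, no GPU acceleration, chat-style session.
    args = build_main_args("wizard-vicuna-13B.ggmlv3.q5_0.bin", 8, chat=True)
    print(shlex.join(args))
```

Returning a list rather than a single string lets you pass it straight to `subprocess.run` without shell quoting issues.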

## How to run in `text-generation-webui`

Further instructions here: [text-generation-webui/docs/llama.cpp-models.md](https://github.com/oobabooga/text-generation-webui/blob/main/docs/llama.cpp-models.md).

* Patreon: https://patreon.com/TheBlokeAI

* Ko-Fi: https://ko-fi.com/TheBlokeAI

**Special thanks to**: Luke from CarbonQuill, Aemon Algiz, Dmitriy Samsonov.

**Patreon special mentions**: Oscar Rangel, Eugene Pentland, Talal Aujan, Cory Kujawski, Luke, Asp the Wyvern, Ai Maven, Pyrater, Alps Aficionado, senxiiz, Willem Michiel, Junyu Yang, trip7s trip, Sebastain Graf, Joseph William Delisle, Lone Striker, Jonathan Leane, Johann-Peter Hartmann, David Flickinger, Spiking Neurons AB, Kevin Schuppel, Mano Prime, Dmitriy Samsonov, Sean Connelly, Nathan LeClaire, Alain Rossmann, Fen Risland, Derek Yates, Luke Pendergrass, Nikolai Manek, Khalefa Al-Ahmad, Artur Olbinski, John Detwiler, Ajan Kanaga, Imad Khwaja, Trenton Dambrowitz, Kalila, vamX, webtim, Illia Dulskyi.

Thank you to all my generous patrons and donators!

<!-- footer end -->

# Original model card: June Lee's Wizard Vicuna 13B

https://github.com/melodysdreamj/WizardVicunaLM
