Create README.md

README.md

ADDED

@@ -0,0 +1,507 @@

---
license: apache-2.0
language:
- en
pipeline_tag: text-generation
tags:
- multimodal
base_model: Qwen/Qwen2-VL-2B-Instruct-GPTQ-Int8
---

|

| 10 |

+

|

| 11 |

+

# Qwen2-VL-2B-Instruct-GPTQ-Int8

|

| 12 |

+

|

| 13 |

+

## Introduction

|

| 14 |

+

|

| 15 |

+

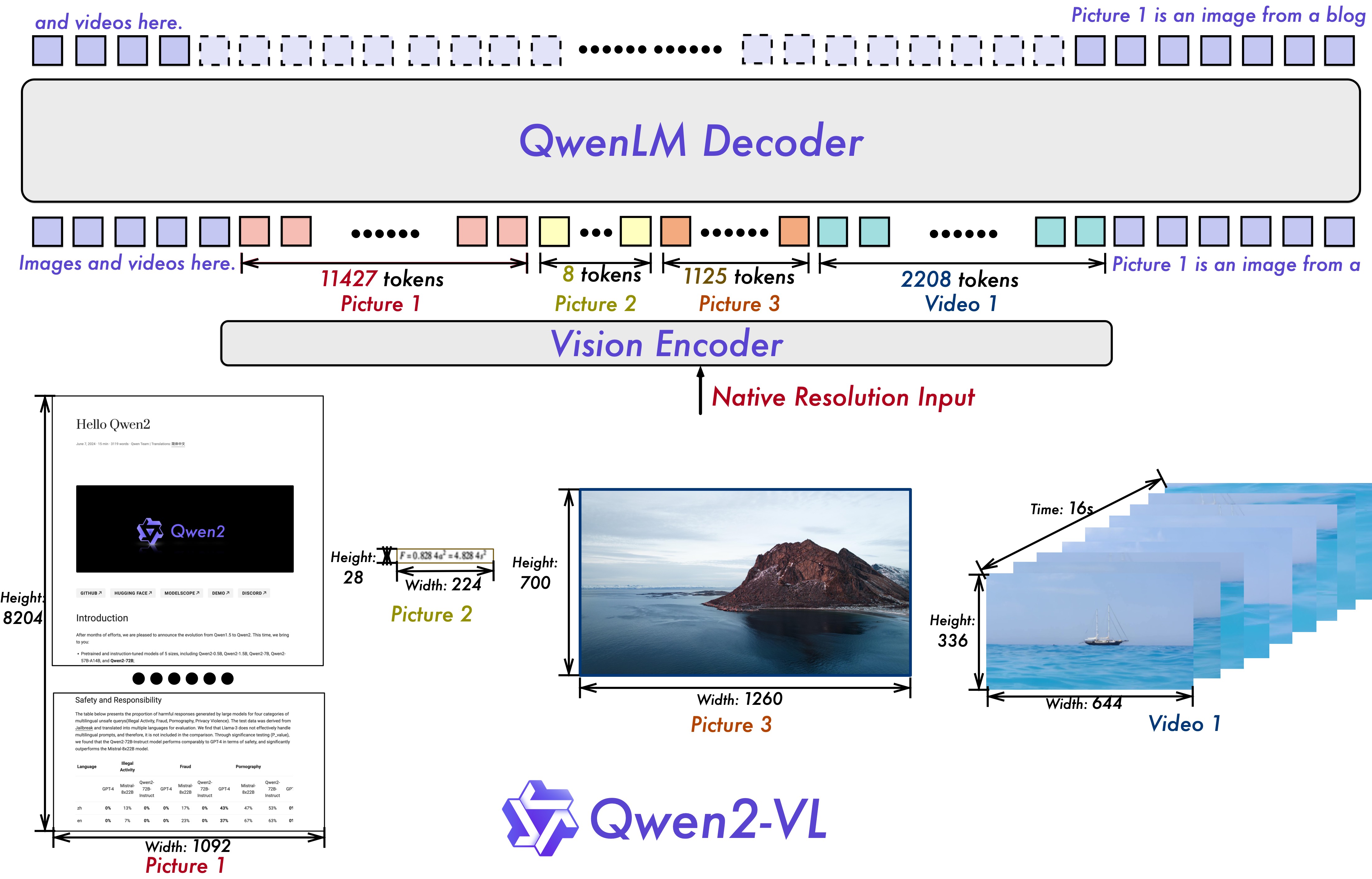

We're excited to unveil **Qwen2-VL**, the latest iteration of our Qwen-VL model, representing nearly a year of innovation.

### What’s New in Qwen2-VL?

#### Key Enhancements:

* **Enhanced Image Comprehension**: We've significantly improved the model's ability to understand and interpret visual information, setting new benchmarks across key performance metrics.

* **Advanced Video Understanding**: Qwen2-VL now features superior online streaming capabilities, enabling real-time analysis of dynamic video content with remarkable accuracy.

* **Integrated Visual Agent Functionality**: Our model now seamlessly incorporates sophisticated system integration, transforming Qwen2-VL into a powerful visual agent capable of complex reasoning and decision-making.

* **Expanded Multilingual Support**: We've broadened our language capabilities to better serve a diverse global user base, making Qwen2-VL more accessible and effective across different linguistic contexts.

#### Model Architecture Updates:

* **Naive Dynamic Resolution**: Unlike before, Qwen2-VL can handle arbitrary image resolutions, mapping them into a dynamic number of visual tokens, offering a more human-like visual processing experience.

* **Multimodal Rotary Position Embedding (M-ROPE)**: Decomposes positional embedding into parts to capture 1D textual, 2D visual, and 3D video positional information, enhancing its multimodal processing capabilities.

We have three models with 2, 7 and 72 billion parameters. This repo contains the quantized version of the instruction-tuned 2B Qwen2-VL model. For more information, visit our [Blog](https://qwenlm.github.io/blog/qwen2-vl/) and [GitHub](https://github.com/QwenLM/Qwen2-VL).

### Benchmark

#### Performance of Quantized Models

This section reports the generation performance of quantized models (including GPTQ and AWQ) of the Qwen2-VL series. Specifically, we report:

- MMMU_VAL (Accuracy)
- DocVQA_VAL (Accuracy)
- MMBench_DEV_EN (Accuracy)
- MathVista_MINI (Accuracy)

We use [VLMEvalKit](https://github.com/kq-chen/VLMEvalKit/tree/add_qwen2vl) to evaluate all models.

| Model | Quantization | MMMU | DocVQA | MMBench | MathVista |
| --- | --- | --- | --- | --- | --- |
| Qwen2-VL-2B-Instruct | BF16<br><sup>([🤗](https://huggingface.co/Qwen/Qwen2-VL-2B-Instruct)[🤖](https://modelscope.cn/models/qwen/Qwen2-VL-2B-Instruct))</sup> | 41.88 | 88.34 | 72.07 | 44.40 |
| | GPTQ-Int8<br><sup>([🤗](https://huggingface.co/Qwen/Qwen2-VL-2B-Instruct-GPTQ-Int8)[🤖](https://modelscope.cn/models/qwen/Qwen2-VL-2B-Instruct-GPTQ-Int8))</sup> | 41.55 | 88.28 | 71.99 | 44.60 |
| | GPTQ-Int4<br><sup>([🤗](https://huggingface.co/Qwen/Qwen2-VL-2B-Instruct-GPTQ-Int4)[🤖](https://modelscope.cn/models/qwen/Qwen2-VL-2B-Instruct-GPTQ-Int4))</sup> | 39.22 | 87.21 | 70.87 | 41.69 |
| | AWQ<br><sup>([🤗](https://huggingface.co/Qwen/Qwen2-VL-2B-Instruct-AWQ)[🤖](https://modelscope.cn/models/qwen/Qwen2-VL-2B-Instruct-AWQ))</sup> | 41.33 | 86.96 | 71.64 | 39.90 |
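
To make the accuracy cost of each quantization scheme easier to read, the following minimal sketch computes the per-benchmark difference against BF16. The numbers are copied from the table above; the names `SCORES` and `delta_vs_bf16` are hypothetical helpers introduced only for this illustration.

```python
# Accuracy values copied from the table above (Qwen2-VL-2B-Instruct).
SCORES = {
    "BF16":      {"MMMU": 41.88, "DocVQA": 88.34, "MMBench": 72.07, "MathVista": 44.40},
    "GPTQ-Int8": {"MMMU": 41.55, "DocVQA": 88.28, "MMBench": 71.99, "MathVista": 44.60},
    "GPTQ-Int4": {"MMMU": 39.22, "DocVQA": 87.21, "MMBench": 70.87, "MathVista": 41.69},
    "AWQ":       {"MMMU": 41.33, "DocVQA": 86.96, "MMBench": 71.64, "MathVista": 39.90},
}


def delta_vs_bf16(quantization: str) -> dict:
    """Return accuracy(quantized) - accuracy(BF16) for every benchmark."""
    baseline = SCORES["BF16"]
    quantized = SCORES[quantization]
    return {name: round(quantized[name] - baseline[name], 2) for name in baseline}


for quantization in ("GPTQ-Int8", "GPTQ-Int4", "AWQ"):
    print(quantization, delta_vs_bf16(quantization))
```

For the values reported here, GPTQ-Int8 stays within about 0.3 points of BF16 on every benchmark, while GPTQ-Int4 and AWQ lose more on MathVista.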

#### Speed Benchmark

This section reports the speed of the BF16 and quantized (GPTQ-Int4, GPTQ-Int8, and AWQ) models of the Qwen2-VL series. Specifically, we report the inference speed (tokens/s) and the memory footprint (GB) at different context lengths.

The evaluation environment with Hugging Face Transformers is:

- NVIDIA A100 80GB
- CUDA 11.8
- PyTorch 2.2.1+cu118
- Flash Attention 2.6.1
- Transformers 4.38.2
- AutoGPTQ 0.6.0+cu118
- AutoAWQ 0.2.5+cu118 (autoawq_kernels 0.0.6+cu118)

Note:

- We use a batch size of 1 and the smallest possible number of GPUs for the evaluation.
- We test the speed and memory of generating 2048 tokens with input lengths of 1, 6144, 14336, 30720, 63488, and 129024 tokens.
- 2B (transformers)

| Model | Input Length | Quantization | GPU Num | Speed (tokens/s) | GPU Memory (GB) |
| --- | --- | --- | --- | --- | --- |
| Qwen2-VL-2B-Instruct | 1 | BF16 | 1 | 35.29 | 4.68 |
| | | GPTQ-Int8 | 1 | 28.59 | 3.55 |
| | | GPTQ-Int4 | 1 | 39.76 | 2.91 |
| | | AWQ | 1 | 29.89 | 2.88 |
| | 6144 | BF16 | 1 | 36.58 | 10.01 |
| | | GPTQ-Int8 | 1 | 29.53 | 8.87 |
| | | GPTQ-Int4 | 1 | 39.27 | 8.21 |
| | | AWQ | 1 | 33.42 | 8.18 |
| | 14336 | BF16 | 1 | 36.31 | 17.20 |
| | | GPTQ-Int8 | 1 | 31.03 | 16.07 |
| | | GPTQ-Int4 | 1 | 39.89 | 15.40 |
| | | AWQ | 1 | 32.28 | 15.40 |
| | 30720 | BF16 | 1 | 32.53 | 31.64 |
| | | GPTQ-Int8 | 1 | 27.76 | 30.51 |
| | | GPTQ-Int4 | 1 | 30.73 | 29.84 |
| | | AWQ | 1 | 31.55 | 29.84 |
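
As a reading aid for the speed table, the sketch below compares GPTQ-Int8 with BF16 at each input length and adds the 2048 generated tokens to get the total context. The figures are copied from the table; `SPEED_TABLE` and `int8_vs_bf16` are hypothetical names used only for this example.

```python
GENERATED_TOKENS = 2048  # number of generated tokens stated in the notes above

# input length -> {quantization: (speed in tokens/s, GPU memory in GB)}, copied from the table
SPEED_TABLE = {
    1:     {"BF16": (35.29, 4.68),  "GPTQ-Int8": (28.59, 3.55)},
    6144:  {"BF16": (36.58, 10.01), "GPTQ-Int8": (29.53, 8.87)},
    14336: {"BF16": (36.31, 17.20), "GPTQ-Int8": (31.03, 16.07)},
    30720: {"BF16": (32.53, 31.64), "GPTQ-Int8": (27.76, 30.51)},
}


def int8_vs_bf16(input_length: int) -> dict:
    """Summarize the GPTQ-Int8 trade-off against BF16 for one input length."""
    bf16_speed, bf16_memory = SPEED_TABLE[input_length]["BF16"]
    int8_speed, int8_memory = SPEED_TABLE[input_length]["GPTQ-Int8"]
    return {
        "total_context": input_length + GENERATED_TOKENS,
        "memory_saved_gb": round(bf16_memory - int8_memory, 2),
        "relative_speed": round(int8_speed / bf16_speed, 2),
    }


for input_length in SPEED_TABLE:
    print(input_length, int8_vs_bf16(input_length))
```

In these measurements the GPTQ-Int8 memory saving stays at roughly 1.1 GB across input lengths, which is consistent with the saving coming from the weights rather than from the context-dependent part of the footprint.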

## Requirements

The code of Qwen2-VL has been merged into the latest Hugging Face Transformers, and we advise you to build from source with the command `pip install git+https://github.com/huggingface/transformers`; otherwise you might encounter the following error:

```
KeyError: 'qwen2_vl'
```
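
Before loading the model, it can help to check whether the installed `transformers` already exposes the Qwen2-VL class. The following is a minimal sketch under that assumption; `has_qwen2_vl_support` is a hypothetical helper, not part of any library, and it only checks that the class name is present.

```python
import importlib


def has_qwen2_vl_support() -> bool:
    """Return True if the installed transformers exposes Qwen2VLForConditionalGeneration."""
    try:
        transformers = importlib.import_module("transformers")
    except ImportError:
        # transformers is not installed at all
        return False
    return hasattr(transformers, "Qwen2VLForConditionalGeneration")


if not has_qwen2_vl_support():
    print("Qwen2-VL is not available; install transformers from source as described above.")
```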

## Quickstart

We offer a toolkit to help you handle various types of visual input more conveniently, as if you were using an API. This includes base64, URLs, and interleaved images and videos. You can install it using the following command:

```bash
pip install qwen-vl-utils
```

The following code snippet shows how to use the chat model with `transformers` and `qwen_vl_utils`:

```python
from transformers import Qwen2VLForConditionalGeneration, AutoProcessor
from qwen_vl_utils import process_vision_info

# default: Load the model on the available device(s)
model = Qwen2VLForConditionalGeneration.from_pretrained(
    "Qwen/Qwen2-VL-2B-Instruct-GPTQ-Int8", torch_dtype="auto", device_map="auto"
)

# We recommend enabling flash_attention_2 for better acceleration and memory saving, especially in multi-image and video scenarios.
# This variant also needs `import torch`.
# model = Qwen2VLForConditionalGeneration.from_pretrained(
#     "Qwen/Qwen2-VL-2B-Instruct-GPTQ-Int8",
#     torch_dtype=torch.bfloat16,
#     attn_implementation="flash_attention_2",
#     device_map="auto",
# )

# default processor
processor = AutoProcessor.from_pretrained("Qwen/Qwen2-VL-2B-Instruct-GPTQ-Int8")

# The default range for the number of visual tokens per image in the model is 4-16384. You can set min_pixels and max_pixels according to your needs, such as a token count range of 256-1280, to balance speed and memory usage.
# min_pixels = 256*28*28
# max_pixels = 1280*28*28
# processor = AutoProcessor.from_pretrained("Qwen/Qwen2-VL-2B-Instruct-GPTQ-Int8", min_pixels=min_pixels, max_pixels=max_pixels)

messages = [
    {
        "role": "user",
        "content": [
            {
                "type": "image",
                "image": "https://qianwen-res.oss-cn-beijing.aliyuncs.com/Qwen-VL/assets/demo.jpeg",
            },
            {"type": "text", "text": "Describe this image."},
        ],
    }
]

# Preparation for inference
text = processor.apply_chat_template(
    messages, tokenize=False, add_generation_prompt=True
)
image_inputs, video_inputs = process_vision_info(messages)
inputs = processor(
    text=[text],
    images=image_inputs,
    videos=video_inputs,
    padding=True,
    return_tensors="pt",
)
inputs = inputs.to("cuda")

# Inference: Generation of the output
generated_ids = model.generate(**inputs, max_new_tokens=128)
# Strip the prompt tokens so that only the newly generated tokens are decoded
generated_ids_trimmed = [
    out_ids[len(in_ids) :] for in_ids, out_ids in zip(inputs.input_ids, generated_ids)
]
output_text = processor.batch_decode(
    generated_ids_trimmed, skip_special_tokens=True, clean_up_tokenization_spaces=False
)
print(output_text)
```
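
The trimming step in the snippet above is needed because `generate` returns the prompt tokens followed by the new tokens for each sequence. The sketch below reproduces that slicing on plain Python lists; the token ids are made up for illustration and `trim_prompt` is a hypothetical helper name.

```python
def trim_prompt(input_ids, generated_ids):
    """Drop the prompt prefix from each generated sequence, keeping only new tokens."""
    return [out[len(inp):] for inp, out in zip(input_ids, generated_ids)]


# Toy batch: two prompts of equal (padded) length and their generated continuations.
prompt_batch = [[101, 7, 8], [101, 9, 10]]
generated_batch = [[101, 7, 8, 42, 43], [101, 9, 10, 44, 45]]

print(trim_prompt(prompt_batch, generated_batch))  # [[42, 43], [44, 45]]
```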

<details>
<summary>Without qwen_vl_utils</summary>

```python
from PIL import Image
import requests
from transformers import Qwen2VLForConditionalGeneration, AutoProcessor

# Load the model in half-precision on the available device(s)
model = Qwen2VLForConditionalGeneration.from_pretrained(
    "Qwen/Qwen2-VL-2B-Instruct-GPTQ-Int8", torch_dtype="auto", device_map="auto"
)
processor = AutoProcessor.from_pretrained("Qwen/Qwen2-VL-2B-Instruct-GPTQ-Int8")

# Image
url = "https://qianwen-res.oss-cn-beijing.aliyuncs.com/Qwen-VL/assets/demo.jpeg"
image = Image.open(requests.get(url, stream=True).raw)

conversation = [
    {
        "role": "user",
        "content": [
            {
                "type": "image",
            },
            {"type": "text", "text": "Describe this image."},
        ],
    }
]


# Preprocess the inputs
text_prompt = processor.apply_chat_template(conversation, add_generation_prompt=True)
# Expected output: '<|im_start|>system\nYou are a helpful assistant.<|im_end|>\n<|im_start|>user\n<|vision_start|><|image_pad|><|vision_end|>Describe this image.<|im_end|>\n<|im_start|>assistant\n'

inputs = processor(
    text=[text_prompt], images=[image], padding=True, return_tensors="pt"
)
inputs = inputs.to("cuda")

# Inference: Generation of the output
output_ids = model.generate(**inputs, max_new_tokens=128)
# Strip the prompt tokens; distinct loop names avoid shadowing output_ids
generated_ids = [
    out_ids[len(in_ids) :]
    for in_ids, out_ids in zip(inputs.input_ids, output_ids)
]
output_text = processor.batch_decode(
    generated_ids, skip_special_tokens=True, clean_up_tokenization_spaces=True
)
print(output_text)
```
</details>

<details>
<summary>Multi image inference</summary>

```python
# Messages containing multiple images and a text query
messages = [
    {
        "role": "user",
        "content": [
            {"type": "image", "image": "file:///path/to/image1.jpg"},
            {"type": "image", "image": "file:///path/to/image2.jpg"},
            {"type": "text", "text": "Identify the similarities between these images."},
        ],
    }
]

# Preparation for inference
text = processor.apply_chat_template(
    messages, tokenize=False, add_generation_prompt=True
)
image_inputs, video_inputs = process_vision_info(messages)
inputs = processor(
    text=[text],
    images=image_inputs,
    videos=video_inputs,
    padding=True,
    return_tensors="pt",
)
inputs = inputs.to("cuda")

# Inference
generated_ids = model.generate(**inputs, max_new_tokens=128)
generated_ids_trimmed = [
    out_ids[len(in_ids) :] for in_ids, out_ids in zip(inputs.input_ids, generated_ids)
]
output_text = processor.batch_decode(
    generated_ids_trimmed, skip_special_tokens=True, clean_up_tokenization_spaces=False
)
print(output_text)
```
</details>

<details>
<summary>Video inference</summary>

```python
# Option 1: messages containing a list of images as a video and a text query
messages = [
    {
        "role": "user",
        "content": [
            {
                "type": "video",
                "video": [
                    "file:///path/to/frame1.jpg",
                    "file:///path/to/frame2.jpg",
                    "file:///path/to/frame3.jpg",
                    "file:///path/to/frame4.jpg",
                ],
                "fps": 1.0,
            },
            {"type": "text", "text": "Describe this video."},
        ],
    }
]
# Option 2: messages containing a video file and a text query
# (this assignment replaces the one above; keep only the variant you need)
messages = [
    {
        "role": "user",
        "content": [
            {
                "type": "video",
                "video": "file:///path/to/video1.mp4",
                "max_pixels": 360 * 420,
                "fps": 1.0,
            },
            {"type": "text", "text": "Describe this video."},
        ],
    }
]

# Preparation for inference
text = processor.apply_chat_template(
    messages, tokenize=False, add_generation_prompt=True
)
image_inputs, video_inputs = process_vision_info(messages)
inputs = processor(
    text=[text],
    images=image_inputs,
    videos=video_inputs,
    padding=True,
    return_tensors="pt",
)
inputs = inputs.to("cuda")

# Inference
generated_ids = model.generate(**inputs, max_new_tokens=128)
generated_ids_trimmed = [
    out_ids[len(in_ids) :] for in_ids, out_ids in zip(inputs.input_ids, generated_ids)
]
output_text = processor.batch_decode(
    generated_ids_trimmed, skip_special_tokens=True, clean_up_tokenization_spaces=False
)
print(output_text)
```
</details>
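
When the frames of a video already exist as image files, the frame-list message shown in the video example can be assembled programmatically. This is a minimal sketch, assuming the frames are `.jpg` files in one directory whose names sort in playback order; `frames_to_video_message` is a hypothetical helper and is not part of `qwen_vl_utils`.

```python
from pathlib import Path


def frames_to_video_message(frame_dir, prompt, fps=1.0):
    """Build a single-turn message whose video entry is a sorted list of file:// frame URIs."""
    # Sorting is lexicographic, so zero-padded names (frame001.jpg, ...) are assumed.
    frames = sorted(Path(frame_dir).glob("*.jpg"))
    return [
        {
            "role": "user",
            "content": [
                {
                    "type": "video",
                    "video": [frame.resolve().as_uri() for frame in frames],
                    "fps": fps,
                },
                {"type": "text", "text": prompt},
            ],
        }
    ]


# Example (the directory path is a placeholder):
# messages = frames_to_video_message("/path/to/frames", "Describe this video.")
```

The resulting `messages` list has the same shape as the frame-list variant above and can be passed to `processor.apply_chat_template` and `process_vision_info` in the same way.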

<details>
<summary>Batch inference</summary>

```python
# Sample messages for batch inference
messages1 = [
    {
        "role": "user",
        "content": [
            {"type": "image", "image": "file:///path/to/image1.jpg"},
            {"type": "image", "image": "file:///path/to/image2.jpg"},
            {"type": "text", "text": "What are the common elements in these pictures?"},
        ],
    }
]
messages2 = [
    {"role": "system", "content": "You are a helpful assistant."},
    {"role": "user", "content": "Who are you?"},
]
# Combine messages for batch processing
messages = [messages1, messages2]

# Preparation for batch inference
texts = [
    processor.apply_chat_template(msg, tokenize=False, add_generation_prompt=True)
    for msg in messages
]
image_inputs, video_inputs = process_vision_info(messages)
inputs = processor(
    text=texts,
    images=image_inputs,
    videos=video_inputs,
    padding=True,
    return_tensors="pt",
)
inputs = inputs.to("cuda")

# Batch Inference
generated_ids = model.generate(**inputs, max_new_tokens=128)
generated_ids_trimmed = [
    out_ids[len(in_ids) :] for in_ids, out_ids in zip(inputs.input_ids, generated_ids)
]
output_texts = processor.batch_decode(
    generated_ids_trimmed, skip_special_tokens=True, clean_up_tokenization_spaces=False
)
print(output_texts)
```
</details>

### More Usage Tips

For input images, we support local files, base64, and URLs. For videos, we currently only support local files.

```python
# You can directly insert a local file path, a URL, or a base64-encoded image into the position where you want in the text.
## Local file path
messages = [
    {
        "role": "user",
        "content": [
            {"type": "image", "image": "file:///path/to/your/image.jpg"},
            {"type": "text", "text": "Describe this image."},
        ],
    }
]
## Image URL
messages = [
    {
        "role": "user",
        "content": [
            {"type": "image", "image": "http://path/to/your/image.jpg"},
            {"type": "text", "text": "Describe this image."},
        ],
    }
]
## Base64 encoded image
messages = [
    {
        "role": "user",
        "content": [
            {"type": "image", "image": "data:image;base64,/9j/..."},
            {"type": "text", "text": "Describe this image."},
        ],
    }
]
```
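
For the base64 variant, the string has to be built from the raw image bytes. The sketch below uses only the Python standard library and follows the `data:image;base64,` prefix shown above; `image_bytes_to_data_uri` is a hypothetical helper name.

```python
import base64


def image_bytes_to_data_uri(raw: bytes) -> str:
    """Encode raw image bytes as a data URI with the prefix used in the example above."""
    encoded = base64.b64encode(raw).decode("ascii")
    return f"data:image;base64,{encoded}"


# JPEG files start with the bytes FF D8 FF, which encode to "/9j/" in base64.
print(image_bytes_to_data_uri(b"\xff\xd8\xff"))  # data:image;base64,/9j/

# With a real file (the path is a placeholder):
# with open("/path/to/your/image.jpg", "rb") as f:
#     uri = image_bytes_to_data_uri(f.read())
```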

#### Image Resolution for performance boost

The model supports a wide range of resolution inputs. By default, it uses the native resolution for input, but higher resolutions can enhance performance at the cost of more computation. Users can set the minimum and maximum number of pixels to achieve an optimal configuration for their needs, such as a token count range of 256-1280, to balance speed and memory usage.

```python
min_pixels = 256 * 28 * 28
max_pixels = 1280 * 28 * 28
processor = AutoProcessor.from_pretrained(
    "Qwen/Qwen2-VL-2B-Instruct-GPTQ-Int8", min_pixels=min_pixels, max_pixels=max_pixels
)
```

In addition, we provide two methods for fine-grained control over the image size input to the model:

1. Define `min_pixels` and `max_pixels`: Images will be resized to maintain their aspect ratio within the range of `min_pixels` and `max_pixels`.

2. Specify exact dimensions: Directly set `resized_height` and `resized_width`. These values will be rounded to the nearest multiple of 28.

```python
# resized_height and resized_width
messages = [
    {
        "role": "user",
        "content": [
            {
                "type": "image",
                "image": "file:///path/to/your/image.jpg",
                "resized_height": 280,
                "resized_width": 420,
            },
            {"type": "text", "text": "Describe this image."},
        ],
    }
]
# min_pixels and max_pixels
messages = [
    {
        "role": "user",
        "content": [
            {
                "type": "image",
                "image": "file:///path/to/your/image.jpg",
                "min_pixels": 50176,
                "max_pixels": 50176,
            },
            {"type": "text", "text": "Describe this image."},
        ],
    }
]
```
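
The pixel budgets above are written as `token_count * 28 * 28`, which suggests that one visual token corresponds to roughly a 28×28 pixel area. Under that assumption, the following sketch estimates the visual token count for a given size. It is a rough illustration only: `round_to_patch` and `estimate_visual_tokens` are hypothetical helpers, the `min_pixels`/`max_pixels` clamping is ignored, and the actual resizing logic in the processor may differ.

```python
PATCH = 28  # assumed pixel side length covered by one visual token


def round_to_patch(size: int) -> int:
    """Round a dimension to the nearest multiple of 28, never below one patch."""
    return max(PATCH, round(size / PATCH) * PATCH)


def estimate_visual_tokens(height: int, width: int) -> int:
    """Estimate the number of visual tokens for an image of the given size."""
    rounded_height = round_to_patch(height)
    rounded_width = round_to_patch(width)
    return (rounded_height // PATCH) * (rounded_width // PATCH)


print(estimate_visual_tokens(280, 420))  # 150, for the resized_height/resized_width example
print(50176 // (PATCH * PATCH))          # 64, the token budget implied by 50176 pixels
```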

## Limitations

While Qwen2-VL is applicable to a wide range of visual tasks, it is equally important to understand its limitations. Here are some known restrictions:

1. Lack of Audio Support: The current model does **not comprehend audio information** within videos.
2. Data Timeliness: Our image dataset is **updated until June 2023**, and information after this date may not be covered.
3. Constraints in Individuals and Intellectual Property (IP): The model's capacity to recognize specific individuals or IPs is limited, and it may fail to cover all well-known personalities or brands.
4. Limited Capacity for Complex Instructions: When faced with intricate multi-step instructions, the model's understanding and execution capabilities require enhancement.
5. Insufficient Counting Accuracy: Particularly in complex scenes, the accuracy of object counting is not high, necessitating further improvements.
6. Weak Spatial Reasoning Skills: Especially in 3D spaces, the model's inference of object positional relationships is inadequate, making it difficult to precisely judge the relative positions of objects.

These limitations serve as ongoing directions for model optimization and improvement, and we are committed to continually enhancing the model's performance and scope of application.

## Citation

If you find our work helpful, feel free to cite us.

```
@article{Qwen2-VL,
  title={Qwen2-VL},
  author={Qwen team},
  year={2024}
}

@article{Qwen-VL,
  title={Qwen-VL: A Versatile Vision-Language Model for Understanding, Localization, Text Reading, and Beyond},
  author={Bai, Jinze and Bai, Shuai and Yang, Shusheng and Wang, Shijie and Tan, Sinan and Wang, Peng and Lin, Junyang and Zhou, Chang and Zhou, Jingren},
  journal={arXiv preprint arXiv:2308.12966},
  year={2023}
}
```