Upload README.md

README.md CHANGED

@@ -1,23 +1,57 @@

 ---
-license:
-datasets:
-- AlexZheng/galactic-animation
-language:
-- en
-pipeline_tag: text-to-image
 tags:
--
 ---

-(old lines 12-23 removed; their content is not shown in this view)

---
license: creativeml-openrail-m
tags:
- stable-diffusion
- text-to-image
---

# Arcane Diffusion
This is a fine-tuned Stable Diffusion model trained on images from the TV show Arcane.
Use the tokens **_arcane style_** in your prompts for the effect.

**If you enjoy my work, please consider supporting me**
[Patreon](https://patreon.com/user?u=79196446)

### 🧨 Diffusers

This model can be used just like any other Stable Diffusion model. For more information,
please have a look at the [Stable Diffusion](https://huggingface.co/docs/diffusers/api/pipelines/stable_diffusion) pipeline documentation.

You can also export the model to [ONNX](https://huggingface.co/docs/diffusers/optimization/onnx), [MPS](https://huggingface.co/docs/diffusers/optimization/mps) and/or [FLAX/JAX]().

```python
#!pip install diffusers transformers scipy torch
from diffusers import StableDiffusionPipeline
import torch

# Load the fine-tuned weights in half precision and move the pipeline to the GPU.
model_id = "nitrosocke/Arcane-Diffusion"
pipe = StableDiffusionPipeline.from_pretrained(model_id, torch_dtype=torch.float16)
pipe = pipe.to("cuda")

# Include the "arcane style" token so the fine-tuned style is applied.
prompt = "arcane style, a magical princess with golden hair"
image = pipe(prompt).images[0]

image.save("./magical_princess.png")
```
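Since the style is triggered by the **_arcane style_** tokens, it can help to add them automatically when a prompt lacks them. The following is a minimal sketch only: `STYLE_TOKEN` and `with_arcane_style` are hypothetical names introduced for illustration and are not part of diffusers or of this model.

```python
# Hypothetical helper (not part of diffusers): makes sure a prompt
# contains the trigger phrase named in this model card.
STYLE_TOKEN = "arcane style"


def with_arcane_style(prompt: str) -> str:
    """Return the prompt with the style token prepended unless it is already present."""
    cleaned = prompt.strip()
    if STYLE_TOKEN in cleaned.lower():
        # The token is already there (in any letter case); leave the prompt alone.
        return cleaned
    if not cleaned:
        # An empty prompt becomes just the style token.
        return STYLE_TOKEN
    return f"{STYLE_TOKEN}, {cleaned}"


print(with_arcane_style("a magical princess with golden hair"))
```

The returned string can be passed to `pipe(...)` in place of a hand-written prompt.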

|

| 35 |

+

|

| 36 |

+

# Gradio & Colab

|

| 37 |

+

|

| 38 |

+

We also support a [Gradio](https://github.com/gradio-app/gradio) Web UI and Colab with Diffusers to run fine-tuned Stable Diffusion models:

|

| 39 |

+

[](https://huggingface.co/spaces/anzorq/finetuned_diffusion)

|

| 40 |

+

[](https://colab.research.google.com/drive/1j5YvfMZoGdDGdj3O3xRU1m4ujKYsElZO?usp=sharing)

|

| 41 |

+

|

| 42 |

+

|

| 43 |

+

|

| 44 |

+

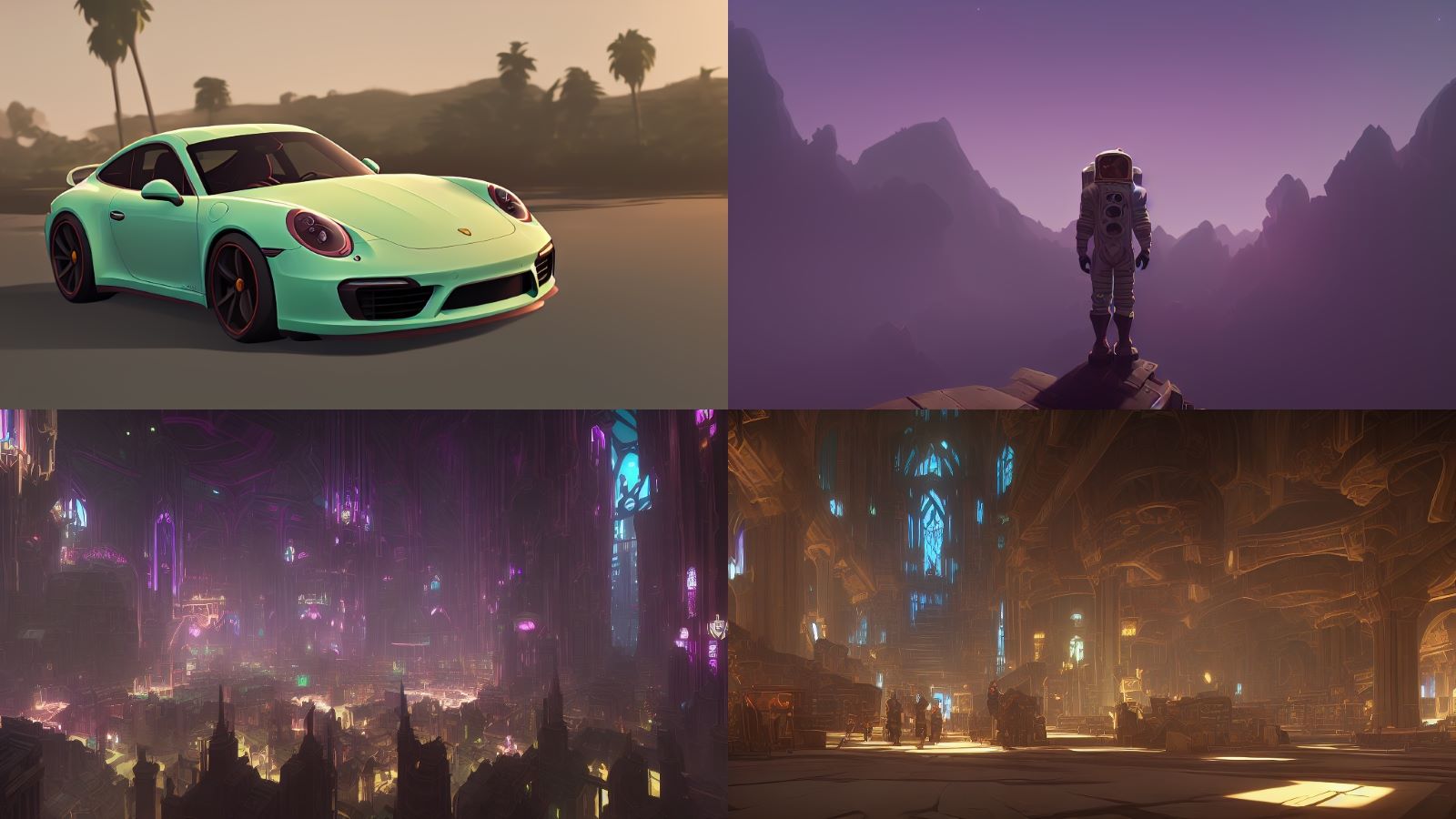

### Sample images from v3:

### Sample images from the model:

### Sample images used for training:

**Version 3** (arcane-diffusion-v3): This version uses the new _train-text-encoder_ setting and greatly improves the quality and editability of the model. It was trained on 95 images from the show in 8000 steps.

**Version 2** (arcane-diffusion-v2): This version uses the diffusers-based DreamBooth training, in which the prior-preservation loss is far more effective. The diffusers weights were then converted with a script to a ckpt file in order to work with AUTOMATIC1111's repo.
Training was done with 5k steps for a direct comparison to v1, and the results show that it needs more steps for a more prominent result. Version 3 will be tested with 11k steps.

**Version 1** (arcane-diffusion-5k): This model was trained using _Unfrozen Model Textual Inversion_ together with the _Training with prior-preservation loss_ method. There is still a slight shift towards the style even when the arcane token is not used.
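The prior-preservation loss mentioned in the version notes can be illustrated with a small numeric example. This is a minimal sketch of the DreamBooth-style formulation (the loss on the new style images plus a weighted loss on generic "prior" samples), not the author's training code. The function names, the toy numbers, and the `prior_weight` value are assumptions made for illustration.

```python
from typing import Sequence


def mse(pred: Sequence[float], target: Sequence[float]) -> float:
    """Mean squared error between two equal-length, non-empty sequences."""
    if len(pred) != len(target) or not pred:
        raise ValueError("inputs must be non-empty and of equal length")
    return sum((p - t) ** 2 for p, t in zip(pred, target)) / len(pred)


def prior_preservation_loss(
    instance_pred: Sequence[float],
    instance_target: Sequence[float],
    prior_pred: Sequence[float],
    prior_target: Sequence[float],
    prior_weight: float = 1.0,  # assumed default; tune per training run
) -> float:
    """Combine the loss on the style images with a weighted loss on prior samples."""
    instance_loss = mse(instance_pred, instance_target)  # fit the new style
    prior_loss = mse(prior_pred, prior_target)           # keep the base model's behavior
    return instance_loss + prior_weight * prior_loss


# Toy values standing in for predicted vs. true noise.
loss = prior_preservation_loss(
    instance_pred=[1.0, 2.0], instance_target=[0.0, 2.0],
    prior_pred=[0.0, 0.0], prior_target=[0.0, 2.0],
    prior_weight=0.5,
)
print(loss)
```

A larger `prior_weight` pulls the model back towards its original outputs, which is one way to limit the style drift described for Version 1.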